The CISO asked a reasonable question. What is Claude actually doing when it runs a shell command in our environment, and can anyone reconstruct what happened after the session ends? The developer on the call did not have a good answer. That conversation happens about three months after most Australian Claude Code rollouts, when someone senior finally looks at what the tool can do and realises there is no paper trail.

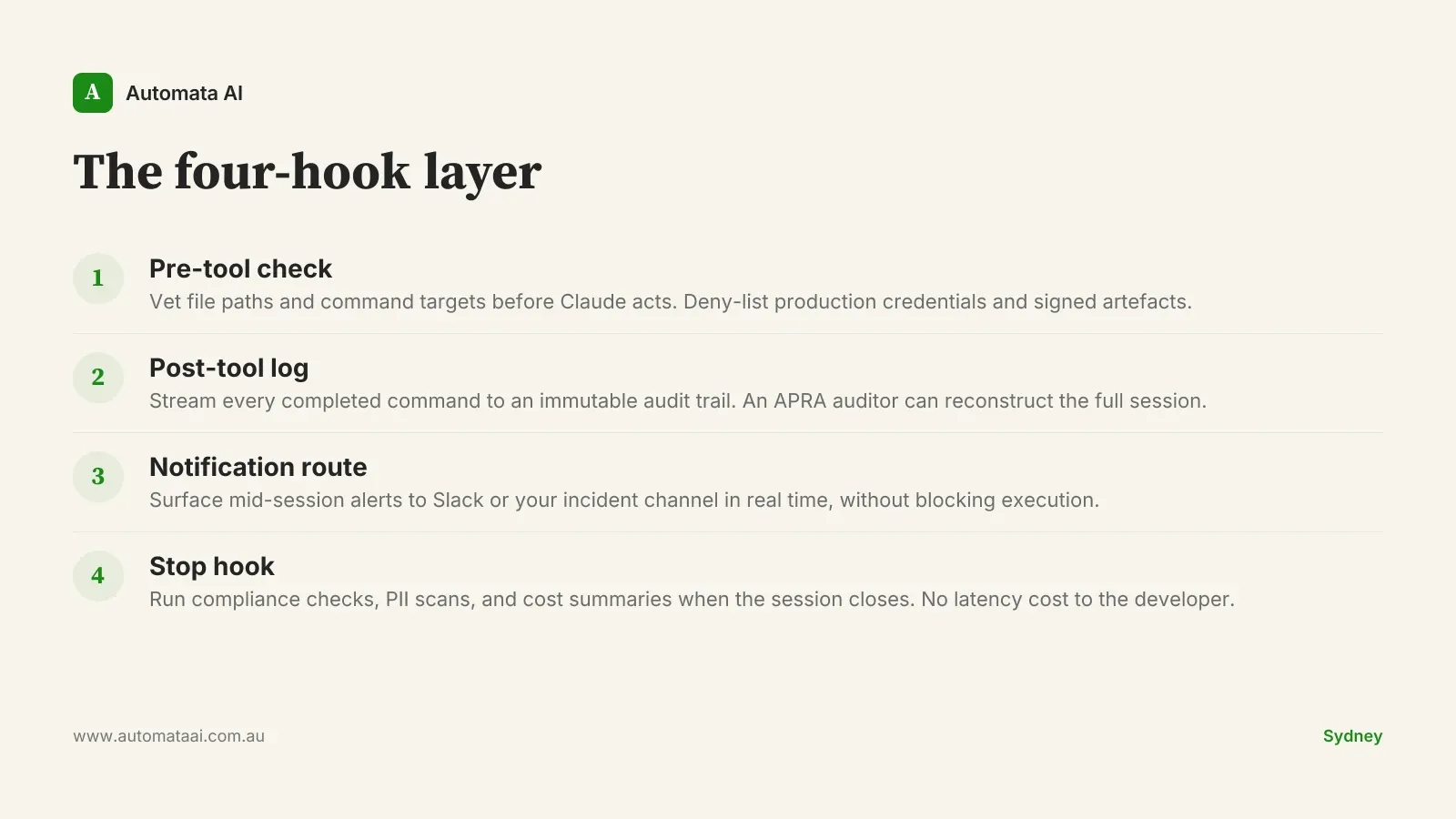

Claude Code hooks are shell commands or scripts that fire automatically at defined points in Claude's execution cycle. Four types: pre-tool, post-tool, notification, and stop. Each one is a programmable checkpoint where your organisation's security and compliance policies can run: before Claude touches a file, after it executes a command, when something surfaces mid-session, or when a session closes. The policies do not live in Claude's configuration. They live in your environment, under your control.

The four-hook layer that covers most Australian teams

Pre-tool hook. Runs before Claude touches a file or executes a command. Use it to vet file paths against a deny list, so Claude cannot edit production credentials, signed release artefacts, or restricted infrastructure-as-code.

Post-tool hook. Runs after every shell command completes. Stream the output to a log file and you have a full, ordered session transcript that an auditor can read.

Notification hook. Fires when Claude surfaces something mid-session. Route it to a Slack channel and engineering managers get real-time visibility without needing a standup.

Stop hook. Runs when a session ends. Post a summary, run a cost report, trigger a compliance check on the transcript. The session is over; there is no latency cost.

For teams in Australian financial services, the post-tool hook is not optional. It is the evidence layer that an APRA CPS 234 review will ask for. The Privacy Act (1988) adds a second requirement: if Claude is processing personal information as part of its tool use, you need a hook that scrubs or flags that data before it lands in any transcript. Both can be addressed with hooks under 50 lines of code.

A hook adds roughly 80 milliseconds per tool call. A typical Claude Code session runs about 40 tool calls. The user-perceived overhead is around three seconds per session. Against four to six hours of engineering work saved, that is a rounding error. The developers who push back on hooks are not objecting to three seconds. They are objecting to hooks that were built badly.

A 30-engineer team running Claude Code without any hook layer is exposing roughly $750,000 of annual engineering output to ungoverned tool use. No session-level access controls, no immutable audit trail, no PII scrubbing. Three days of platform engineering builds the hook layer that closes most of that gap. To map which hooks your environment specifically needs, the AI Readiness Assessment walks through the risk surface with you.

The over-engineering mistake

The pattern we see most often: a 200-line bash script that makes a network call to an internal compliance API before every tool use. The script blocks Claude's execution thread. The API has a p99 latency of 600 milliseconds. Developers start bypassing it, or turning it off entirely, within a week. The security team thinks the hook is running. It is not.

The right shape is a fast local check, a non-blocking async log shipment, and an alert that only fires on a genuine policy violation. If your pre-tool hook cannot complete in under 100 milliseconds on the local machine, it will create enough friction that developers will route around it. Write the fast check first. Ship the async log second. Use a background process or a message queue, not a synchronous HTTP call. Build the alerting third, and only after you know what a genuine violation looks like in your environment, not what you imagined it would look like.

A slow hook is worse than no hook. A hook that developers disable is a false sense of security with real maintenance overhead. If your hook layer is adding visible friction, you have not solved the governance problem. You have buried it. The security team has paperwork saying the hook exists; developers have a tool that runs fast; and nobody has noticed the gap between the two.

When hooks are not the right answer

Hooks make sense when the risk is real and the surface is wide. A three-person team on a greenfield internal tool, with no production credentials in scope and no regulated data in play, probably does not need a hook layer in week one. The setup cost is not zero. The ongoing maintenance cost as Claude versions and hook APIs evolve is not zero either. If the tech lead can spot-check session logs manually and the risk exposure is under $50K a year, that is a reasonable control for now. Build the hook layer when the exposure and the team size justify it.

The surface is too small. If Claude Code is being used by one or two people on low-sensitivity work, manual review costs less than building and maintaining a hook layer.

The risk is not mapped yet. Hooks answer specific questions. If you do not know which risk you are mitigating, you will build the wrong hooks and spend months tuning false positives.

Your team will not leave them running. If developers think the hooks are slow or pointless, they will route around them. That is worse than no hooks. Solve the trust problem before the technical one.

How to ship the first hook in a week

Pick the one risk your security team raised last quarter. Build exactly one hook for it. Ship it inside a week. Measure how often it fires when it should not. That number is the false-positive rate. Keep it below five percent before you build the second hook. Above that rate, developers start treating alerts as noise, and the hook's signal value collapses.

Hooks compound. A team that ships one hook, measures it cleanly, and adds a second six weeks later will have a more reliable layer in six months than a team that attempted all four at once. Our AI Automation Services include a structured hook rollout sequence for exactly this reason. The order matters as much as the hooks themselves.

The compliance case for hooks is easy to make. The latency case is easy to make. The hard part is building a hook layer that developers actually leave running. One that adds governance without adding friction, that fits into the way developers already work rather than sitting perpendicular to it. That is the engineering problem worth getting right. Get it right and the audit story writes itself.