Most CFOs discount vendor productivity claims before the meeting starts. A 14-to-1 return on Claude Code seats sounds like exactly the kind of number that belongs on a vendor slide.

The way to change that is not to defend the number harder. It is to show how it was built.

The inputs that matter

Four variables drive the model. Get any one of them wrong and the whole calculation collapses before the finance team can stress-test it.

Fully loaded engineer cost. In Sydney or Melbourne, budget $250,000 for a senior engineer, $180,000 for mid-level, $130,000 for junior. Blended across a realistic team mix, $160,000-$200,000 per head is the working assumption.

Time saved per engineer per day. Across our Australian mid-market pilots, cautious early adopters recover about 35 minutes a day and fluent users about 90. A conservative planning assumption is 45 minutes.

Seat cost. At typical Australian usage patterns, Claude Code costs $50-$200 per month per engineer depending on plan and model mix. $100 per month is a reasonable working assumption for initial modelling.

Adoption ramp. A well-structured rollout reaches 30% adoption in month one and 70% by month three. Without an explicit rollout plan, expect a 20% plateau and months of stagnation.
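The ramp can be written down as a month-by-month adoption fraction, which makes it easy to compare against the plan later. The sketch below is illustrative only: the 30%, 70%, and 20% figures come from the paragraph above, while the month-two midpoint, the assumption that adoption holds at 70% after month three, and the assumption that an unstructured rollout sits at 20% from the start are ours. The function name `adoption_by_month` is hypothetical.

```python
def adoption_by_month(months, structured=True):
    """Return the assumed adoption fraction for each month of a rollout."""
    if not structured:
        # Source figure: unstructured rollouts plateau around 20%.
        # Assumption: they sit at that level from month one.
        return [0.20] * months
    # Source figures: 30% in month one, 70% by month three.
    # Assumption: month two sits halfway, and adoption holds at 70% afterwards.
    ramp = [0.30, 0.50, 0.70]
    return [ramp[m] if m < len(ramp) else 0.70 for m in range(months)]


structured = adoption_by_month(12)
unstructured = adoption_by_month(12, structured=False)

# Average adoption across the first year under each scenario.
avg_structured = sum(structured) / len(structured)
avg_unstructured = sum(unstructured) / len(unstructured)
print(round(avg_structured, 2), round(avg_unstructured, 2))
```

Under these assumptions the structured rollout averages roughly three times the adoption of the unstructured one over the first year, before any difference in time saved per engineer is counted.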

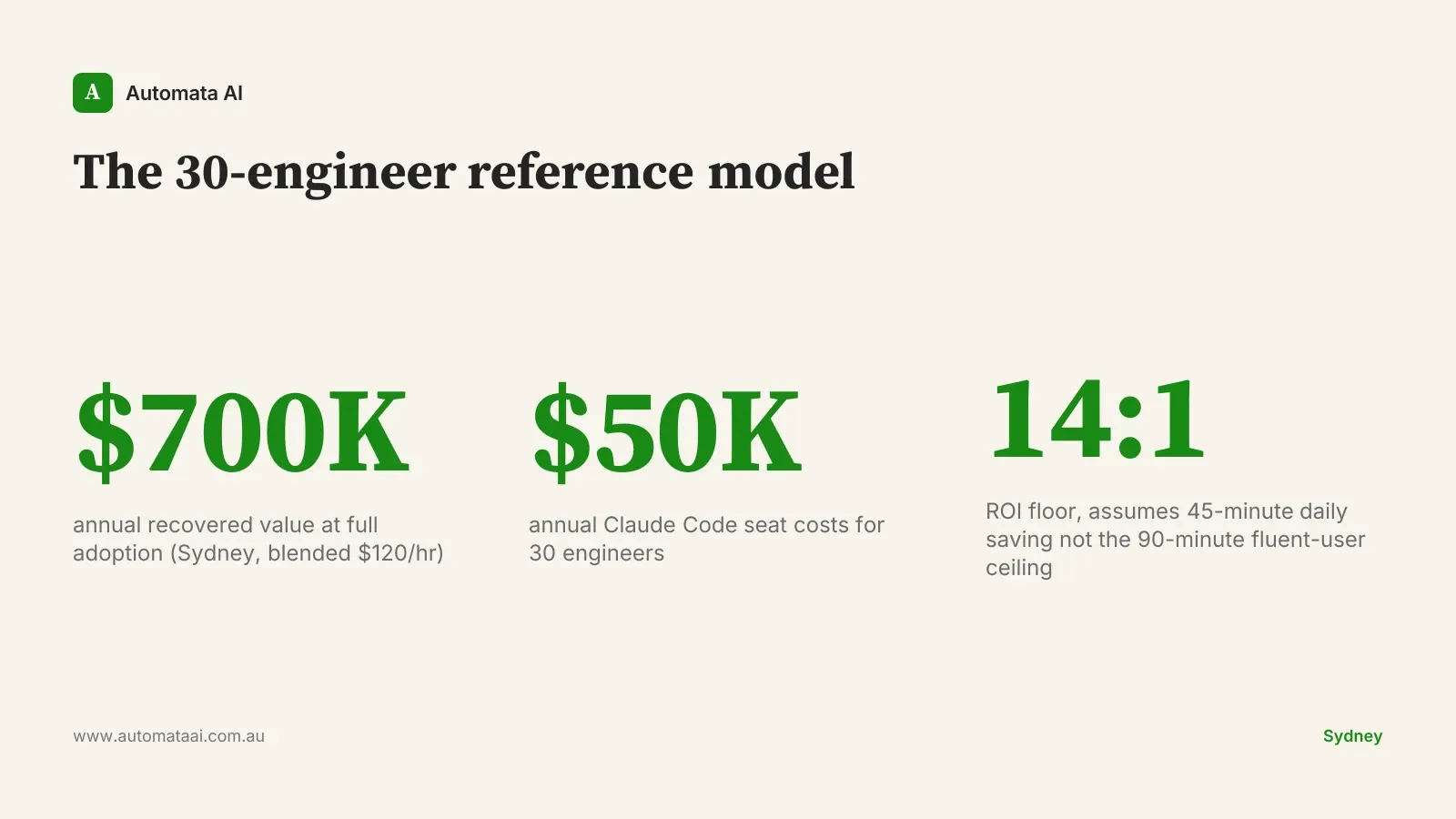

The reference model: 30 engineers in Sydney

Take a 30-engineer team in Sydney with a mix of senior and mid-level engineers. Fully loaded (salary, superannuation, leave, benefits, management overhead), the blended cost sits around $120 per engineer hour.

With Claude Code at full adoption and 45 minutes recovered per engineer per day, the team recovers 22.5 hours per day. At $120 per engineer hour, that is $2,700 in recovered capacity daily, or roughly $700,000 per year across about 260 weekdays.

Claude Code seats for 30 engineers cost around $50,000 per year, or roughly $140 per seat per month, which sits above the $100 working assumption. The ratio is 14-to-1. That is the floor. We call it the 30-engineer reference model, and it has become our planning baseline for mid-market Australian teams running a structured rollout.
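The arithmetic behind the reference model fits in a few lines, which is useful when the finance team wants to change one input and watch the ratio move. This is a minimal sketch, not a definitive model: the function name `reference_model` is hypothetical, and the 260 working days per year is our assumption (52 weeks of five days, with no leave deducted), chosen because it reproduces the roughly $700,000 annual figure.

```python
WORKING_DAYS_PER_YEAR = 260  # assumption: 52 weeks x 5 days, no leave deducted


def reference_model(engineers, minutes_saved_per_day, hourly_cost,
                    annual_seat_cost, working_days=WORKING_DAYS_PER_YEAR):
    """Return hours and value recovered, plus the return-to-cost ratio."""
    hours_per_day = engineers * minutes_saved_per_day / 60
    value_per_day = hours_per_day * hourly_cost
    value_per_year = value_per_day * working_days
    return {
        "hours_per_day": hours_per_day,
        "value_per_day": value_per_day,
        "value_per_year": value_per_year,
        "ratio": value_per_year / annual_seat_cost,
    }


# Inputs taken from the 30-engineer reference model.
result = reference_model(engineers=30, minutes_saved_per_day=45,
                         hourly_cost=120, annual_seat_cost=50_000)
print(result)
```

With those inputs the sketch gives 22.5 hours and $2,700 per day, $702,000 per year, and a ratio just over 14. Lowering `working_days` to account for leave and public holidays reduces the ratio proportionally, which is one more reason to present the inputs rather than the headline.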

When the model breaks

Four failure modes reliably collapse this model before the numbers can play out. Each one is avoidable. Each one is common.

Weak rollout. Claude Code shipped without slash commands, hooks, and Skills configured gets used like expensive autocomplete. Teams that skip the first-week setup recover half the value, at best.

Mixed adoption. A five-person pocket converting while the surrounding team stays on old tools creates coordination friction that erases the saving. Adoption needs to cross a threshold; below 60% uptake, the headline number does not hold.

Wrong model selection. Defaulting to the most capable Claude model on every call when a lighter model would do the job inflates the seat cost and narrows the margin. Model selection is part of the rollout, not an afterthought.

No measurement. A team that cannot show usage data has no defence when the finance team asks why the bill exists.

That last failure mode is the one teams skip most often. It is also the one that kills the budget at renewal. If you cannot pull a weekly report showing tasks completed, tokens consumed per engineer, and time saved, you are walking blind into a CFO conversation that is coming regardless.
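A weekly report of that kind does not need heavy tooling. The sketch below assumes you already have usage records in some form; the record layout, field names, and sample values are hypothetical and purely illustrative, and nothing here represents an export format that Claude Code itself provides.

```python
from collections import defaultdict

# Hypothetical usage records for one week; fields and values are illustrative only.
records = [
    {"engineer": "a", "tasks_completed": 4, "tokens": 120_000, "minutes_saved": 50},
    {"engineer": "a", "tasks_completed": 3, "tokens": 90_000, "minutes_saved": 40},
    {"engineer": "b", "tasks_completed": 2, "tokens": 60_000, "minutes_saved": 30},
]

METRICS = ("tasks_completed", "tokens", "minutes_saved")


def weekly_report(rows):
    """Sum tasks, tokens, and minutes saved per engineer for one week of records."""
    totals = defaultdict(lambda: dict.fromkeys(METRICS, 0))
    for row in rows:
        for key in METRICS:
            totals[row["engineer"]][key] += row[key]
    return dict(totals)


report = weekly_report(records)
for engineer, totals in sorted(report.items()):
    print(engineer, totals)
```

How `minutes_saved` gets measured is the hard part and is left open here; whatever method you choose, apply it the same way every week so the trend is comparable.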

How to defend the model to your CFO

A CFO who has reviewed productivity software pitches for a decade will not accept a 14-to-1 headline return from a vendor-supplied model. The number sounds constructed. When it comes from a vendor, it usually is.

The defensible move is to show the inputs, not the output. Present the time-saving data from your own pilot, the adoption ramp curve against the plan, the cost trajectory by month, and a sensitivity analysis at half the assumed savings. Halving the savings still leaves 7-to-1, and even at a 4-to-1 return, the case holds. Plug your own numbers into our ROI Calculator to see where your team lands.

The point is to walk into that meeting having already discounted the model aggressively. A CFO who sees you have modelled the downside trusts the upside far more than one who sees a single rosy number. Our Claude Code rollout engagements include a structured measurement framework for exactly this purpose.

How to defend the case to a sceptical board

Boards that have watched AI vendor presentations for five years discount productivity claims by default. The tell is a specific number with no provenance. A claim that 'we recovered 12,000 hours' sourced from internal time logs survives scrutiny. The same claim sourced from a vendor brochure does not.

The first discipline is anchoring every number to a measured baseline from your own operations. That means running the pilot with logging on, capturing the before-state as carefully as the after-state, and presenting the delta with the methodology visible.

The second discipline is stress-testing in public. Run the case at 50% of assumed savings and 150% of assumed cost. If the model holds at that haircut, the board has a position they can defend. At a 14-to-1 starting point, it should: half the savings against one and a half times the cost still leaves roughly 4.7-to-1. If you haven't established a measured baseline yet, an AI Readiness Assessment is the right starting point before the board conversation.
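The haircut described above can be sketched as a small function that scales savings and cost independently. The function name `return_ratio` is hypothetical, and the $700,000 and $50,000 inputs are the rounded reference-model figures; substitute your own pilot data.

```python
def return_ratio(annual_value, annual_cost, savings_factor=1.0, cost_factor=1.0):
    """Return-to-cost ratio after scaling savings and cost by the given factors."""
    return (annual_value * savings_factor) / (annual_cost * cost_factor)


# Rounded reference figures: about $700,000 recovered against about $50,000 in seats.
base = return_ratio(700_000, 50_000)
half_savings = return_ratio(700_000, 50_000, savings_factor=0.5)
board_haircut = return_ratio(700_000, 50_000, savings_factor=0.5, cost_factor=1.5)

print(round(base, 1), round(half_savings, 1), round(board_haircut, 1))
```

Presenting all three figures side by side lets the board see how far the assumptions can slip before the case stops paying for itself.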

The third discipline is a structured exit clause. The contract should permit a clean exit at month 12 if recovered value falls below 60% of the model. That clause is rarely invoked. It is always reassuring. A board that knows the downside is bounded approves faster than one that suspects an open-ended commitment.

Build the model before you need the budget conversation. The businesses that do this well are not the ones with the most tools. They are the ones with the clearest numbers.