The procurement team has been asking the same question for six months. Where does the inference actually happen? Not 'can we use Claude'. That decision was made in the pilot. The harder question is whether a Sydney government agency can point to an IRAP-assessed region, in writing, when the audit comes.

For most regulated Australian buyers, this is where AI strategy stalls. Not at capability. At jurisdiction.

The four deployment paths, assessed for sovereign fit

Buyers typically consider four routes to Claude. Only two are appropriate for regulated Australian buyers, and that distinction matters more than any question of model capability.

Anthropic hosted API. Inference happens outside Australia. No data residency guarantee. Appropriate for non-sensitive, public-facing workloads only. Not government, not APRA-regulated data.

AWS Bedrock, Sydney region. Inference in ap-southeast-2, an IRAP-assessed region. AWS manages key custody under their Australian entity. The most common regulated deployment path.

Google Cloud Vertex AI, Sydney region. Similar data residency profile to Bedrock. A strong choice for organisations already committed to GCP or pursuing a documented multi-cloud approach.

Private deployment. Not available for Claude. Anthropic does not offer model weights for self-hosting. The hyperscaler-region model is the only sovereign path currently available.
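The four paths can be written down as data, so an architecture review filters them by rule rather than from memory. The sketch below is illustrative only: `DeploymentPath` and its field names are hypothetical, and the values restate the list above (the Vertex region identifier is the GCP Sydney region, australia-southeast1).

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class DeploymentPath:
    """Hypothetical record restating one deployment path from the list above."""
    name: str
    available: bool                    # can Claude be consumed this way at all?
    australian_region: Optional[str]   # region identifier, or None if there is no in-country option


PATHS = [
    DeploymentPath("Anthropic hosted API", True, None),
    DeploymentPath("AWS Bedrock", True, "ap-southeast-2"),
    DeploymentPath("Google Cloud Vertex AI", True, "australia-southeast1"),
    DeploymentPath("Private deployment", False, None),
]


def sovereign_paths(paths: List[DeploymentPath]) -> List[DeploymentPath]:
    """Keep only paths that exist and run inference in an Australian region."""
    return [p for p in paths if p.available and p.australian_region is not None]


if __name__ == "__main__":
    for path in sovereign_paths(PATHS):
        print(f"{path.name}: {path.australian_region}")
```

Filtering on two explicit fields keeps the reasoning visible: a path drops out either because it does not exist or because it has no in-country region.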

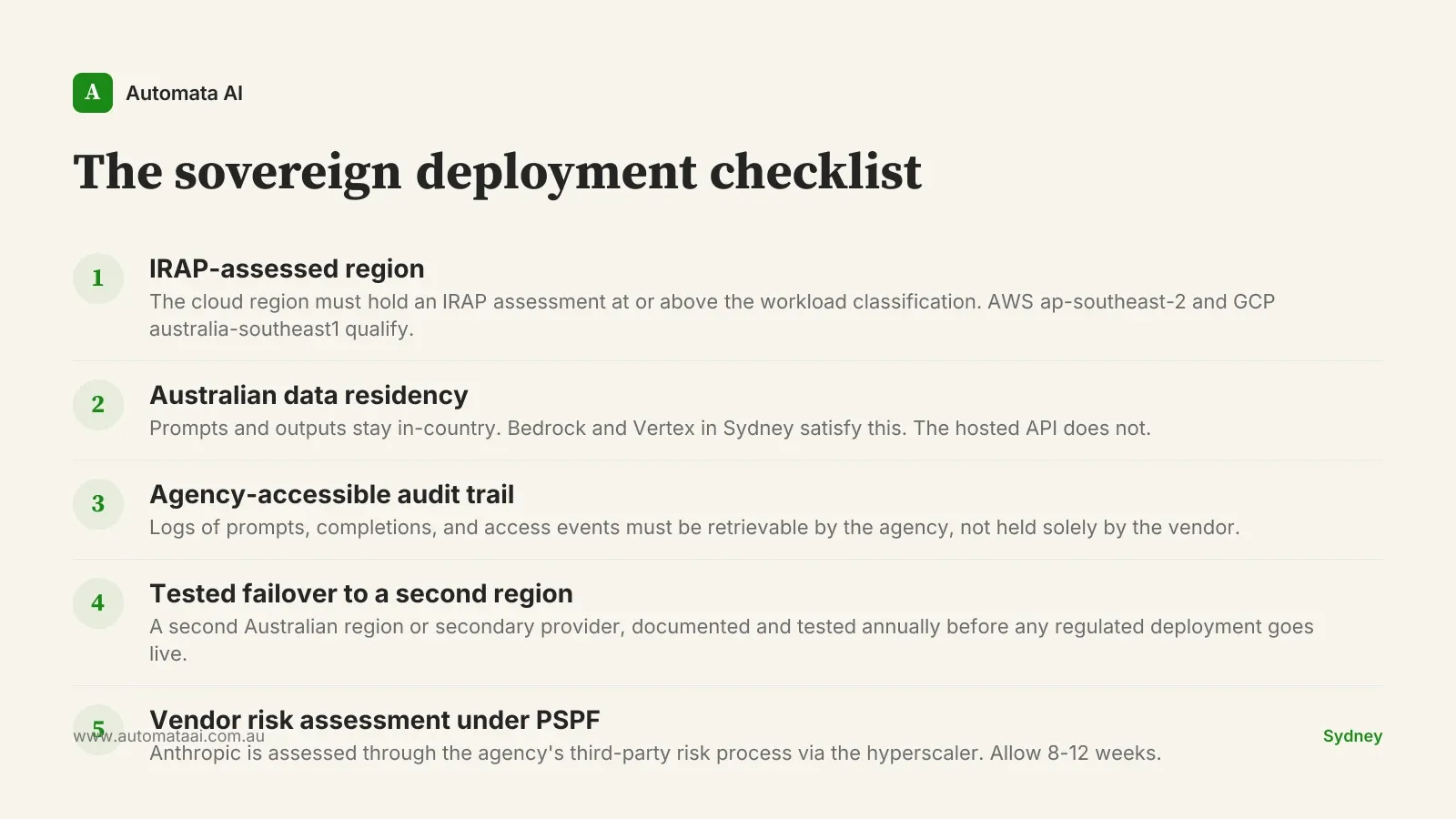

What government buyers must prove above OFFICIAL

Above OFFICIAL sensitivity, any agency deploying Claude must satisfy five requirements under the PSPF and most agency-specific security frameworks. This checklist applies whether the workload is a policy drafting assistant or a document classification pipeline. If you are not sure where your deployment sits, start with an AI Readiness Assessment before scoping the architecture.

IRAP-assessed region. The cloud region must hold an IRAP assessment at or above the workload's classification. AWS ap-southeast-2 and GCP australia-southeast1 both qualify.

Australian data residency. Prompts and outputs must stay in-country. Bedrock and Vertex in Sydney satisfy this. The hosted API does not, and is not appropriate for government use.

Agency-accessible audit trail. Logs of all prompts, completions, and access events must be available to the agency, not held solely by the vendor.

Tested failover. A second Australian region or secondary provider, documented in the architecture and tested annually. For AWS, ap-southeast-2 to ap-southeast-4 (Melbourne) is the standard path.

Vendor risk assessment under PSPF. Anthropic is assessed through the agency's third-party risk process via the hyperscaler. Allow 8-12 weeks for a first engagement.

Configured and evidenced correctly, Bedrock and Vertex can satisfy all five for most agency workloads at or below PROTECTED. The hosted API satisfies none of them.
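One way to keep the five requirements honest is to track them as an evidence checklist that reports gaps. This is a minimal sketch, not a PSPF artefact: the requirement keys and the `unmet_requirements` helper are hypothetical names chosen for illustration.

```python
# The five requirements from the checklist above, in the order listed.
REQUIREMENTS = (
    "irap_assessed_region",
    "australian_data_residency",
    "agency_accessible_audit_trail",
    "tested_failover",
    "vendor_risk_assessment",
)


def unmet_requirements(evidence):
    """Return the requirements with no recorded evidence, in checklist order.

    `evidence` maps a requirement key to True once the agency holds
    documented proof; missing keys are treated as unmet.
    """
    return [req for req in REQUIREMENTS if not evidence.get(req, False)]


if __name__ == "__main__":
    # Example: region and residency are settled, the rest is still open.
    draft = {"irap_assessed_region": True, "australian_data_residency": True}
    print(unmet_requirements(draft))
```

Treating a missing key as unmet is deliberate: an audit asks for evidence, so the absence of a record should read as a gap rather than a pass.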

What APRA-regulated enterprises require

For banks, insurers, and superannuation funds, the framework is different but the direction is the same. APRA CPS 230 requires material service providers to be subject to a vendor risk assessment, and AI inference through Claude will count as a material service for most production deployments. The Privacy Act 1988 and the Australian Privacy Principles add a further constraint: personal data processed by AI systems must not leave the Australian jurisdiction without consent or a recognised legal exception. The financial services AI deployment guide covers the CPS 230 evidence pattern in more detail.

Multi-provider redundancy looks different in this context. The focus is less on PSPF compliance and more on operational resilience: the ability to shift workload to a second provider without a rebuild. An enterprise running Bedrock as primary with Vertex as failover has a cleaner story for internal audit than one running a single-provider deployment.
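Shifting workload "without a rebuild" usually means the application calls a thin routing layer rather than a provider SDK directly. The sketch below shows the idea under stated assumptions: `complete_with_failover` is a hypothetical helper, and the two provider functions are stand-ins that simulate an outage and a success rather than real Bedrock or Vertex clients.

```python
from typing import Callable, Sequence, Tuple

# (name, callable) pairs; each callable takes a prompt and returns a completion.
Provider = Tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in order; return (provider_name, completion) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower, provider-specific errors
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


# Hypothetical stand-ins for real provider clients, used only to exercise the routing.
def bedrock_sydney(prompt: str) -> str:
    raise ConnectionError("simulated regional outage")


def vertex_sydney(prompt: str) -> str:
    return f"[vertex] {prompt}"


ROUTE = [
    ("bedrock-ap-southeast-2", bedrock_sydney),
    ("vertex-australia-southeast1", vertex_sydney),
]

if __name__ == "__main__":
    print(complete_with_failover("ping", ROUTE))
```

Returning the provider name alongside the completion means every response can be logged with where it was served, which is the evidence internal audit will ask for after a failover test.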

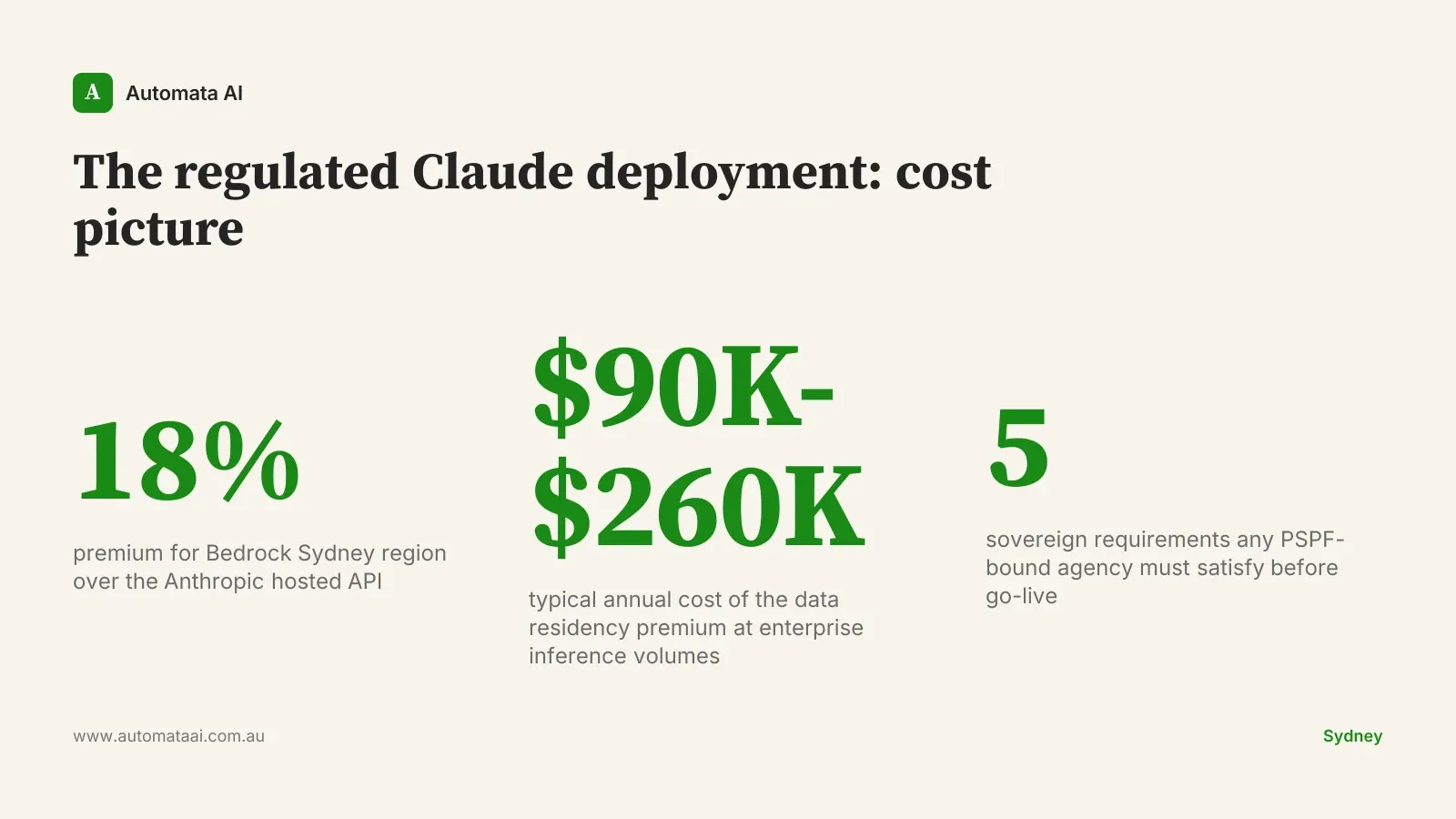

The cost of the data residency commitment

A regulated deployment through Bedrock in the Sydney region runs approximately 18 percent above the Anthropic hosted API price. At typical Australian enterprise inference volumes, that gap represents $90,000 to $260,000 annually. For a regulated buyer, that number is not a cost problem. It is the price of the deal closing. Model your specific volumes in the ROI Calculator to see where the break-even sits for your workload.
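The arithmetic behind those figures is simple enough to check by hand. The sketch below assumes the premium is a flat 18 percent of hosted-API spend, which is a simplification, and works backwards from the quoted gap to the annual hosted spend it implies. The function names are illustrative.

```python
PREMIUM_RATE = 0.18  # approximate Sydney-region premium quoted above, assumed flat


def annual_premium(hosted_annual_cost: float, rate: float = PREMIUM_RATE) -> float:
    """Extra annual cost of the regulated path over the hosted API."""
    return hosted_annual_cost * rate


def implied_hosted_cost(premium: float, rate: float = PREMIUM_RATE) -> float:
    """Hosted-API spend that would produce a given annual premium."""
    return premium / rate


if __name__ == "__main__":
    for gap in (90_000, 260_000):
        print(f"${gap:,} premium implies about ${round(implied_hosted_cost(gap)):,} hosted spend")
```

On that assumption, the $90,000 to $260,000 range corresponds to hosted-API spend of roughly $500,000 to $1.44 million a year, which gives a sense of what "typical enterprise volumes" means here.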

The better frame is optionality value. A buyer running Bedrock primary and Vertex secondary can absorb pricing changes, model upgrades, and provider incidents without rebuilding the integration layer. That flexibility does not show up in year-one numbers. It shows up in years two through four, when the AI landscape has moved and organisations without a clean multi-provider architecture are paying to rebuild instead of capturing the gains.

When the hyperscaler path is the wrong move

The Bedrock and Vertex paths are not the answer for every Claude deployment. Three situations where they are the wrong call:

The workload is genuinely non-sensitive. A public FAQ bot, a marketing copy generator, an internal assistant for non-sensitive HR policy. The hosted API is simpler and cheaper. No sovereignty argument applies to data that was already public.

Inference volumes are sub-threshold. If your annual Claude cost through the hosted API would be under $20,000, the 18 percent premium adds at most about $3,600 per year. At that scale, the overhead of hyperscaler procurement, IRAP review, and vendor risk assessment outweighs the benefit.

Your cloud strategy is mid-migration. Bedrock requires AWS. Vertex requires GCP. Locking AI inference to one hyperscaler before the broader cloud strategy is resolved creates a dependency that is expensive to unwind later.
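These three situations can be turned into a quick screening check before any architecture work starts. The sketch below is one reading of the criteria above, not a decision rule: `reasons_against_hyperscaler` is a hypothetical helper, and the $20,000 threshold is the figure used in this section.

```python
SUB_THRESHOLD_ANNUAL_COST = 20_000  # hosted-API spend below which procurement overhead dominates


def reasons_against_hyperscaler(non_sensitive: bool,
                                hosted_annual_cost: float,
                                cloud_strategy_settled: bool) -> list:
    """Return which of the three situations above apply to a workload."""
    reasons = []
    if non_sensitive:
        reasons.append("workload is non-sensitive")
    if hosted_annual_cost < SUB_THRESHOLD_ANNUAL_COST:
        reasons.append("inference volume is sub-threshold")
    if not cloud_strategy_settled:
        reasons.append("cloud strategy is mid-migration")
    return reasons


if __name__ == "__main__":
    # Example: a public FAQ bot costing $12,000 a year on a settled AWS estate.
    print(reasons_against_hyperscaler(True, 12_000, True))
```

An empty list does not prove the hyperscaler path is right; it only means none of the three warning signs applies.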

The next twelve months

Three signals worth watching. Anthropic pricing: model generations are moving fast, and pricing shifts on Sonnet or Haiku reset the cost model for every workload currently in scope. Australian regulatory guidance: the OAIC and ASD are both updating their AI frameworks, and agency-specific positions are moving faster than procurement timelines. Hyperscaler region capability: the Melbourne regions for both AWS and GCP are expanding their AI service footprint, which changes the failover architecture story.

Organisations that build their Claude deployment around the hyperscaler they are already committed to, with a vendor risk assessment in hand and a tested failover plan documented before go-live, are positioned to absorb these shifts without a rebuild. The sovereignty question is not a blocker. It is a design constraint, and design constraints answered early separate deployments that scale from deployments that stall. Our AI Automation Services team structures sovereign Claude deployments for regulated buyers from initial architecture through to PSPF sign-off.