The Privacy Act review landed in week three of the pilot. A Sydney specialist clinic had a working Claude integration for referral letters: the drafts were accurate and the clinicians liked them. Then the legal team read the data flow diagram and the deployment stopped. Eight weeks of review followed, covering the Privacy Act (1988), the Healthcare Identifiers Act, and the NSW Health Records and Information Privacy Act. By the time the deployment resumed, two of the three clinical champions had moved on to other priorities.

That's not a model failure. That's a regulatory surface that most AI implementation partners aren't set up to navigate.

The regulatory surface is the actual work

Australian healthcare sits under more privacy law than almost any other sector. The Privacy Act (1988) and its Australian Privacy Principles govern identifiable health data across most providers. The Healthcare Identifiers Act adds obligations around HI numbers in electronic records. State-level legislation adds further requirements that vary by jurisdiction: the Health Records Act in Victoria, the Health Records and Information Privacy Act in NSW, and equivalents in other states. AHPRA professional standards govern what registered practitioners can rely on and document. Professional indemnity insurers are now asking direct questions about AI-generated clinical content before renewing policies. The full picture of what this means for AI deployment is outlined in our Australian healthcare AI guide.

None of this is insurmountable. All of it needs to be mapped before the first real patient record enters the system.

The output of a proper regulatory assessment isn't a blocker. It's a deployment shape: one that runs inside the provider's accredited cloud boundary, with data handling that the Privacy Impact Assessment can sign off on.

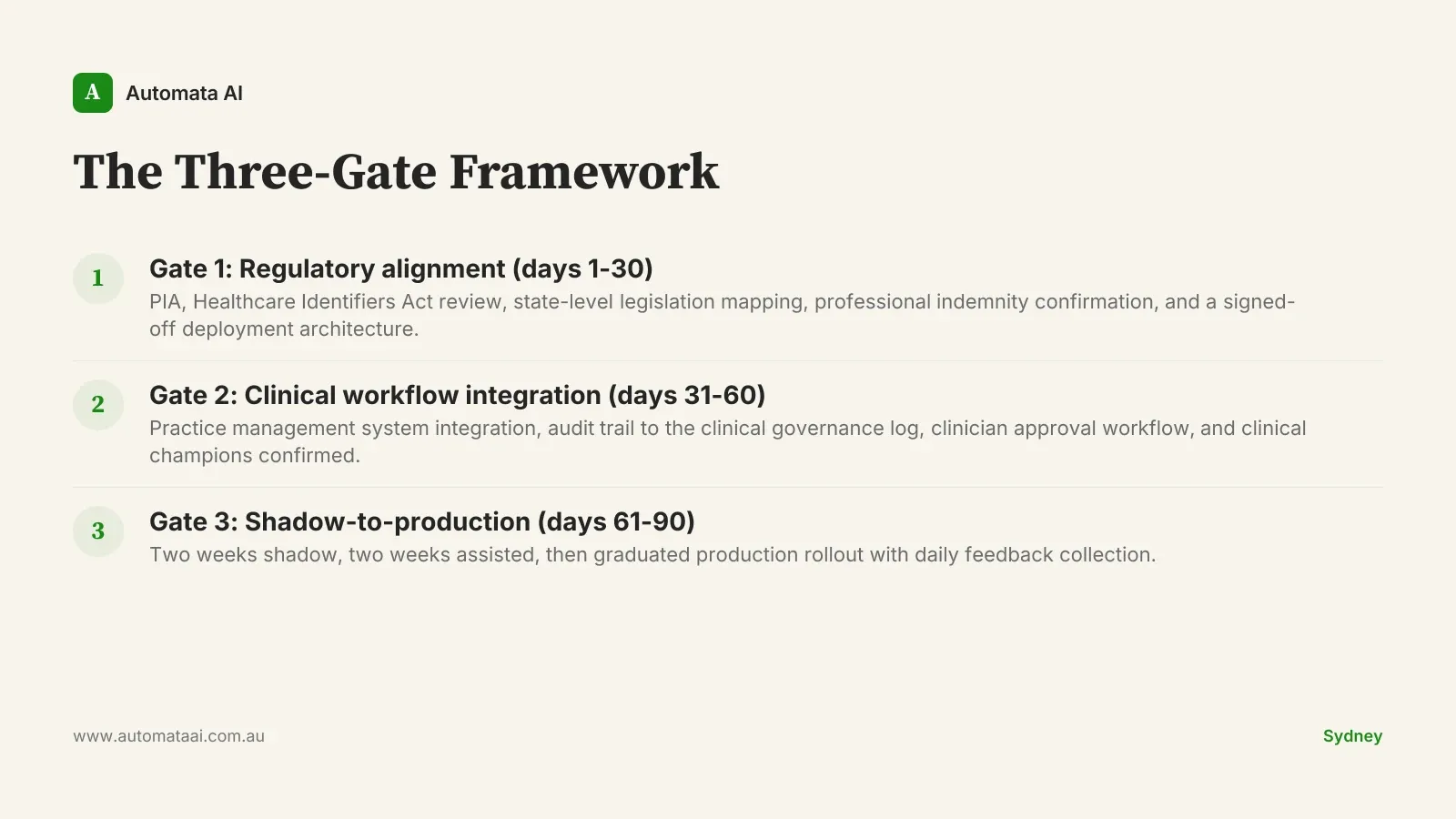

The Three-Gate Framework

A 90-day Claude pilot-to-production plan for a healthcare workstream has three gates, not three phases. Each gate has a binary outcome: pass and proceed, or hold and remediate. Skipping a gate doesn't save time. It creates the conditions for a legal hold six months later.
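The difference between a gate and a phase is easiest to see as a rule: a hold keeps work on the current gate, and nothing later opens until it passes. Here is a minimal sketch of that rule, assuming outcomes are recorded in gate order. The names `Outcome`, `Gate`, and `next_gate` are illustrative, not part of any product or library.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    PASS = "pass and proceed"
    HOLD = "hold and remediate"


@dataclass(frozen=True)
class Gate:
    name: str
    first_day: int
    last_day: int


# Day ranges as given in the article.
GATES = [
    Gate("Regulatory alignment", 1, 30),
    Gate("Clinical workflow integration", 31, 60),
    Gate("Shadow-to-production", 61, 90),
]


def next_gate(outcomes: list[Outcome]) -> Gate | None:
    """Return the gate to work on next, given outcomes recorded in gate order.

    A HOLD keeps work on that gate, so no later gate can open.
    Returns None once all three gates have passed.
    """
    for gate, outcome in zip(GATES, outcomes):
        if outcome is Outcome.HOLD:
            return gate
    if len(outcomes) < len(GATES):
        return GATES[len(outcomes)]
    return None


if __name__ == "__main__":
    # Gate 1 passed, Gate 2 on hold: work stays on Gate 2.
    print(next_gate([Outcome.PASS, Outcome.HOLD]).name)
```

There is deliberately no way to record a skipped gate. The only way forward is a pass.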

Gate 1: Regulatory alignment, days 1 to 30

Gate 1 covers the Privacy Impact Assessment (PIA), Healthcare Identifiers Act review, state-level legislation mapping, professional indemnity confirmation, and a deployment architecture that legal can sign off on. The PIA alone takes three to four weeks for a mid-sized provider. Do not compress it.

The deliverable at Gate 1 is a document, not a memo. Your privacy officer, insurer, and clinical governance committee must all have reviewed the deployment shape before Gate 2 begins. If you're not certain which obligations apply to your practice, the AI Readiness Assessment is a useful starting point.

Gate 2: Clinical workflow integration, days 31 to 60

Gate 2 covers practice management system integration, an audit trail that writes to the existing clinical governance log, and a clinician approval workflow for any AI-drafted content. Most Australian practice management systems have documented APIs. The hard part is not the integration. It's mapping the clinical workflow accurately before writing a line of code.

This is also where the clinical champions are confirmed. If two or more senior clinicians are not invested in the outcome by the end of Gate 2, Gate 3 will fail. That's not a prediction. It's a pattern.

Gate 3: Shadow-to-production, days 61 to 90

Two weeks of shadow mode: Claude generates drafts that no clinician sees yet. Two weeks of assisted mode: clinicians see the drafts and accept or reject without time pressure. Then a graduated production rollout starting with the highest-confidence workstream.

Clinician training happens before week seven, not at week nine when the deployment has already calcified into informal habits. The feedback loop runs daily in the first assisted week.
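The staging above comes down to one question per draft: is a clinician allowed to see it yet? The sketch below shows one way to encode that. It assumes each stage is counted in calendar days from the start of Gate 3 (14 days of shadow, 14 of assisted), a simplification the article does not specify. All names are hypothetical.

```python
from enum import Enum


class Mode(Enum):
    SHADOW = "shadow"          # drafts generated, not shown to clinicians
    ASSISTED = "assisted"      # drafts shown; clinicians accept or reject
    PRODUCTION = "production"  # graduated rollout

SHADOW_DAYS = 14    # "two weeks of shadow mode" (calendar days assumed)
ASSISTED_DAYS = 14  # "two weeks of assisted mode" (calendar days assumed)


def mode_for_day(day_in_gate: int) -> Mode:
    """Map a 1-based day within Gate 3 to its rollout mode."""
    if day_in_gate < 1:
        raise ValueError("day_in_gate is 1-based")
    if day_in_gate <= SHADOW_DAYS:
        return Mode.SHADOW
    if day_in_gate <= SHADOW_DAYS + ASSISTED_DAYS:
        return Mode.ASSISTED
    return Mode.PRODUCTION


def draft_visible_to_clinician(mode: Mode) -> bool:
    """In shadow mode drafts are logged for review but never surfaced."""
    return mode is not Mode.SHADOW


if __name__ == "__main__":
    for day in (1, 15, 29):
        mode = mode_for_day(day)
        print(day, mode.value, draft_visible_to_clinician(mode))
```

In practice the switch between modes would be a governance decision, not a calendar rollover. The day counts here only mark the earliest point each stage could begin.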

The clinical governance layer

Every Claude action has a citation back to source data. Every clinical decision remains with the clinician. The audit trail covers prompt, draft, clinician edit, and final sign-off. These are the same four fields that clinical incident review processes already ask for. Clinical governance committees that have reviewed this layer typically describe it as more auditable than the pre-Claude documentation flow. That's often the opposite of the objection they start with.
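To make the four-field audit trail concrete, here is a minimal sketch of what one record could look like, assuming it is serialised as a JSON line before being written to the governance log. The class, function, and field names are illustrative. The actual schema depends on the practice's existing clinical governance log.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    """One entry per AI-drafted document (hypothetical field names)."""
    prompt: str                        # what was sent to the model
    draft: str                         # what the model returned
    clinician_edit: str                # the text after the clinician's changes
    signed_off_by: str                 # identifier of the approving clinician
    signed_off_at: str                 # ISO 8601 timestamp in UTC
    source_citations: tuple[str, ...]  # references back to source data


def build_audit_record(prompt: str, draft: str, clinician_edit: str,
                       clinician_id: str,
                       source_citations: list[str]) -> AuditRecord:
    """Refuse to create a record without a sign-off or a citation."""
    if not clinician_id:
        raise ValueError("final sign-off requires a clinician identifier")
    if not source_citations:
        raise ValueError("every draft needs at least one source citation")
    return AuditRecord(
        prompt=prompt,
        draft=draft,
        clinician_edit=clinician_edit,
        signed_off_by=clinician_id,
        signed_off_at=datetime.now(timezone.utc).isoformat(),
        source_citations=tuple(source_citations),
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialise the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


if __name__ == "__main__":
    record = build_audit_record(
        prompt="<prompt text>",
        draft="<model draft>",
        clinician_edit="<edited letter>",
        clinician_id="clinician-001",          # placeholder identifier
        source_citations=["<source record reference>"],
    )
    print(to_log_line(record))
```

Rejecting records that lack a sign-off or a citation at write time means a gap in the trail shows up as an error on the day, not as a finding during incident review.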

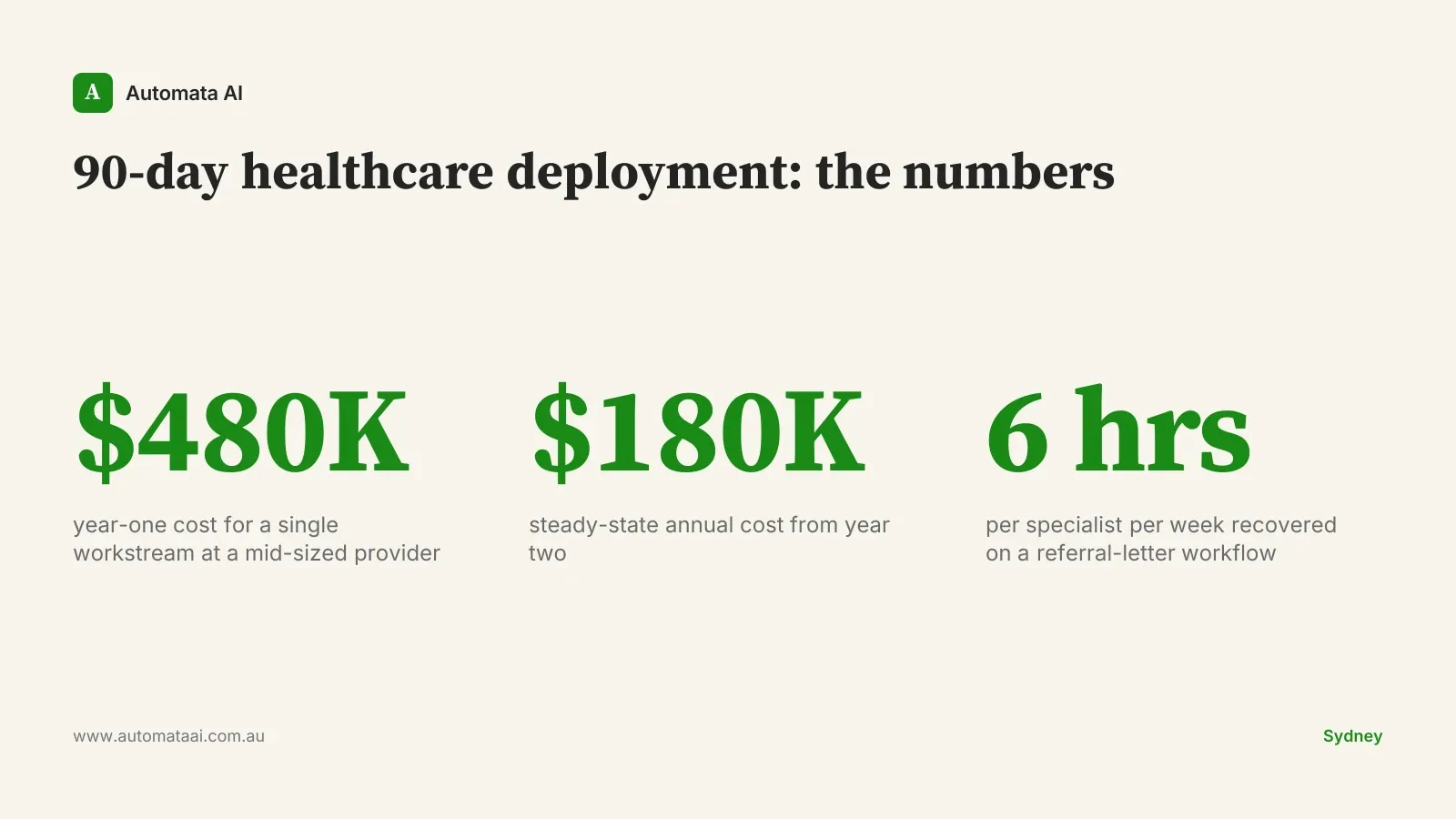

A Sydney specialist clinic ran the Three-Gate Framework on a referral-letter workflow and recovered around six hours per specialist per week. At $200 per hour fully loaded for specialist time, that's approximately $62,000 per specialist per year. A practice with four specialists recoups the steady-state annual cost from the time saving alone. The first three months were almost entirely about trust. The technology worked from week two.
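The arithmetic behind those figures is worth being able to rerun with your own numbers. The sketch below assumes a 52-week working year, which is a simplification (leave and public holidays would reduce it), and uses the steady-state cost of $180,000 quoted later in this article. Function and constant names are illustrative.

```python
HOURS_SAVED_PER_WEEK = 6          # per specialist, from the article
HOURLY_RATE_AUD = 200             # fully loaded, from the article
WEEKS_PER_YEAR = 52               # assumption: no allowance for leave
STEADY_STATE_COST_AUD = 180_000   # annual cost from year two, from the article


def annual_saving(specialists: int,
                  hours_per_week: float = HOURS_SAVED_PER_WEEK,
                  hourly_rate: float = HOURLY_RATE_AUD,
                  weeks: int = WEEKS_PER_YEAR) -> float:
    """Value of clinician time recovered per year across the practice."""
    return specialists * hours_per_week * hourly_rate * weeks


def covers_cost(specialists: int,
                annual_cost: float = STEADY_STATE_COST_AUD) -> bool:
    """True if the time saving alone meets or exceeds the annual cost."""
    return annual_saving(specialists) >= annual_cost


if __name__ == "__main__":
    for n in (1, 4):
        print(n, annual_saving(n), covers_cost(n))
```

Under these assumptions one specialist comes to $62,400 per year and four come to $249,600, which clears the $180,000 steady-state cost. Swapping in a shorter working year or a different hourly rate shows how sensitive that margin is.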

What actually kills 90-day plans

These plans have a predictable failure pattern. The cause is almost never the model.

Treating clinicians as users, not co-designers. Clinicians who were not involved in workflow design will not adopt at scale. The clinical champion is a co-author of the deployment, not a sign-off.

Underestimating the privacy work. The Privacy Act layer alone is three to four weeks for a mid-sized provider. Compress it and the PIA will be revisited post-deployment, which is a worse position.

Skipping clinical governance integration. AI-drafted content that doesn't flow into the existing quality and incident processes from day one creates a parallel documentation stream. Clinical governance committees will correctly flag it.

When this plan is the wrong plan

Not every clinical workstream is right for a 90-day cycle. Skip this plan if the process touches emergency or critical-care decisions where human override speed is the primary constraint, or if the practice management system has no documented API and the vendor is unresponsive. Skip it if the clinical team is mid-restructure and two or more senior clinicians are leaving in the next 90 days.

The other disqualifier: volume. If the process runs fewer than 20 interactions per day and the expected time saving is under two hours per clinician per week, the year-one ROI won't hold at realistic implementation costs. Finding a higher-volume workstream is faster than forcing the economics on a low-volume one.
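The disqualifiers above can be turned into a quick screening check before any scoping work starts. This is a sketch only: the `Workstream` fields are hypothetical names for the conditions described in this section, and the thresholds are the ones the article states.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Workstream:
    override_speed_critical: bool        # emergency or critical-care decisions
    pms_has_documented_api: bool
    pms_vendor_responsive: bool
    team_mid_restructure: bool
    senior_clinicians_leaving_90d: int   # departures expected within 90 days
    interactions_per_day: float
    hours_saved_per_clinician_week: float


def disqualifiers(w: Workstream) -> list[str]:
    """Return the reasons a 90-day cycle is the wrong plan (empty if none)."""
    reasons = []
    if w.override_speed_critical:
        reasons.append("emergency or critical-care decisions")
    if not w.pms_has_documented_api and not w.pms_vendor_responsive:
        reasons.append("no documented API and unresponsive vendor")
    if w.team_mid_restructure and w.senior_clinicians_leaving_90d >= 2:
        reasons.append("team mid-restructure with senior clinicians leaving")
    if w.interactions_per_day < 20 and w.hours_saved_per_clinician_week < 2:
        reasons.append("volume too low for year-one ROI")
    return reasons


if __name__ == "__main__":
    referral_letters = Workstream(
        override_speed_critical=False,
        pms_has_documented_api=True,
        pms_vendor_responsive=True,
        team_mid_restructure=False,
        senior_clinicians_leaving_90d=0,
        interactions_per_day=40,             # illustrative value
        hours_saved_per_clinician_week=6,
    )
    print(disqualifiers(referral_letters))
```

An empty list does not mean the workstream is a good candidate. It only means none of the stated disqualifiers applies.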

The investment and the signals to watch

Year one for a single workstream at a mid-sized Australian healthcare provider runs around $480,000. Steady-state cost from year two is approximately $180,000 annually. The engagement that covers the full three-gate cycle, from regulatory alignment through graduated production, sits within the Transform tier ($150,000 to $300,000), with the remainder of the year-one cost going to integration, infrastructure, and governance advisory.

A provider with a clean, audit-ready Claude deployment can absorb model upgrades, onboard new workstreams, and shift workload between systems without a rebuild. That strategic optionality adds an estimated $80,000 to $200,000 per year in value. It is harder to calculate than the first-year time saving, but more durable.

Three signals to watch over the following 12 months: Anthropic pricing changes that reset the cost model, capability upgrades that make new workstreams viable, and AHPRA or privacy regulatory guidance that clarifies or constrains deployment options. Our AI automation services are structured to absorb those shifts without requiring a full rebuild of the deployment architecture.

The practices that get this right over the next four years are the ones that ran the regulatory work seriously in month one. Not as a compliance box-tick. As the foundation the rest of the deployment sits on.