The customer raised the ticket on a Tuesday. The model response had drifted. Not wrong exactly. Just different from what they had seen the previous week on the same input, with the same prompt, against the same data. Subtle enough that they almost let it go. Not subtle enough that they didn't escalate.

Your team spent twelve days investigating it. The model was fine. The prompts hadn't changed. The integration was intact. The issue was infrastructure noise in your Claude production environment, and without monitoring built to catch it, twelve days was the only way out.

What infrastructure noise actually is

Claude inference doesn't run on a single stable machine. It runs on a fleet, distributed across data centres, hardware generations, and deployment versions. Different requests land on different hardware generations, different deployment versions during gradual rollouts, and different batching configurations under varying load. The model weights are the same. The serving infrastructure underneath isn't always. Most teams never model this as a variable.

Anthropic has been open about this. The A100s and H100s that make up inference infrastructure produce subtly different floating-point results from identical model weights on identical inputs. It's not a bug. It's physics. And it has practical consequences for any team whose quality gates assume a stable, deterministic inference target.

Three sources that matter in production

1. Hardware-generation variance

The same Claude model run on different GPU generations can return slightly different outputs on the same prompt. For short generations, a quick classification or a single-sentence summary, the difference is usually negligible. For long-form document analysis and multi-step reasoning tasks, which are common in Australian professional services and financial services workflows, the variance surfaces as inconsistent formatting, changed conclusions, or altered confidence signals.

For Sydney teams running nightly eval suites, this shows up as overnight failures that pass on re-run. A 2-5% flap rate that nobody can reproduce in isolation is a sign worth investigating. Hardware variance is often the culprit.

2. Load-induced variance

Under high API concurrency, request batching and GPU scheduling decisions shift. This is expected behaviour. Anthropic's serving infrastructure is built for throughput at scale, which means output consistency is not guaranteed across all load conditions. For most use cases this is invisible. Two slightly different phrasings of the same answer both serve the user. For compliance clause extraction, legal document review, or APRA CPS 230 reporting workflows where word-level differences carry regulatory weight, this is not a trivial gap.

3. Deployment-version variance

Gradual rollouts mean that at any given moment, some requests hit version N and some hit version N+1. The April 2025 Anthropic incident was an extreme case of this pattern. Smaller windows happen routinely as standard engineering practice. Teams treating the model name as their only version variable are missing the full deployment surface.

When this matters and when it doesn't

Not every production Claude deployment needs to care about this. If you're running a customer-facing chat assistant where the criterion is 'was the response helpful', small output variance is irrelevant. The customer doesn't see the delta between two plausible responses. The problem space doesn't warrant the overhead.

Deterministic-adjacent workflows. Compliance checklists, contract clause extraction, regulatory report generation. Anything where word choice has downstream consequences.

Eval-driven development. Teams using automated eval suites as a quality gate before deploying changes.

Audit-trail requirements. APRA-regulated entities under CPS 230, where decision traceability is a compliance requirement, not a preference.

If your use case isn't on that list, this post probably isn't for you. Ship your product.

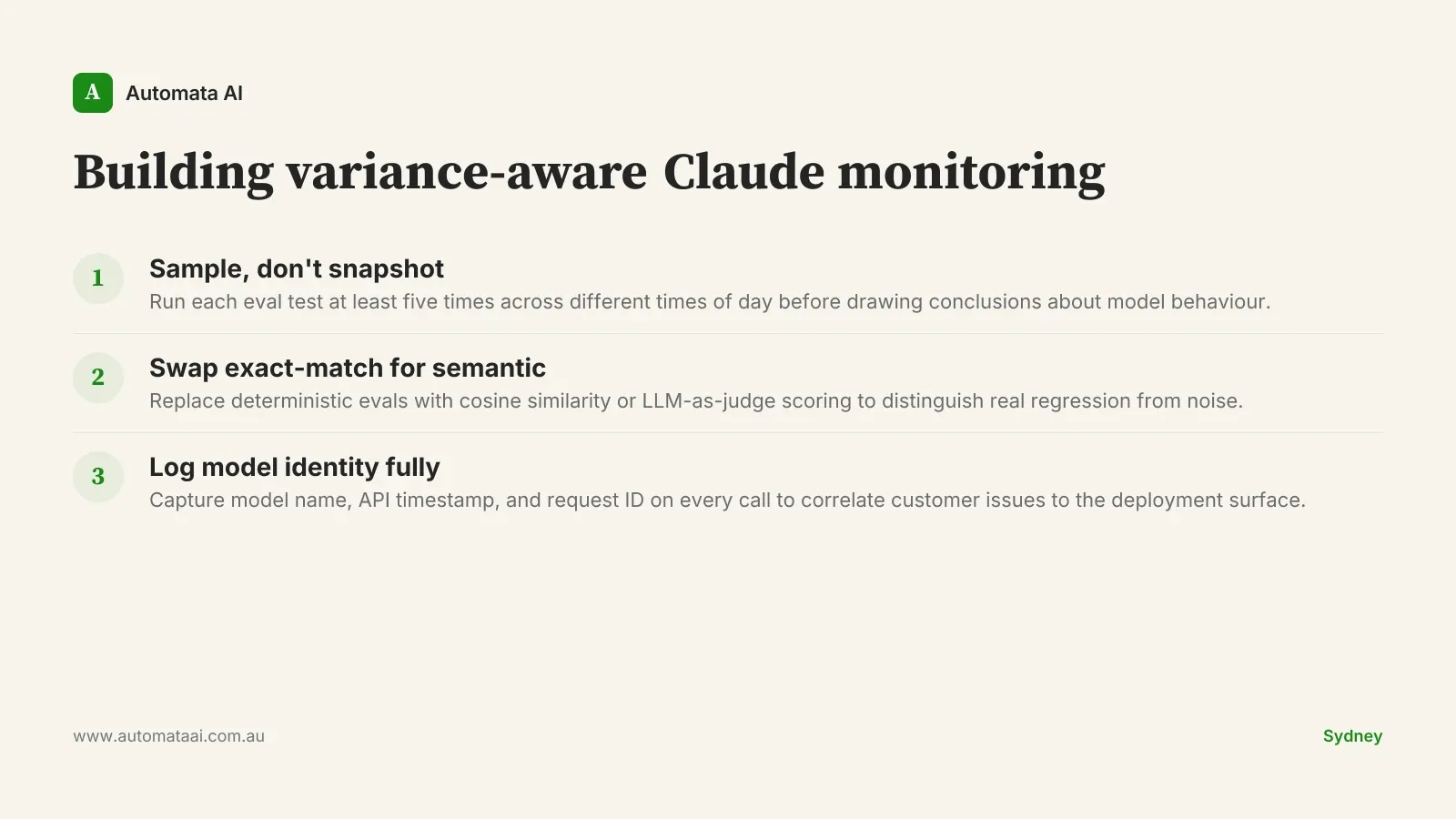

Three adjustments worth making

1. Treat single-request results as samples

When evaluating Claude's behaviour in production, run each test case a minimum of five times, spread across different times of day. One result from one request is a sample from a distribution, not a measurement of model quality. This matters most before deciding the model has regressed and raising a vendor escalation. A single failing response is evidence. Five failing responses across different load conditions is a pattern worth acting on. The distinction between the two has cost teams weeks they didn't have.

2. Build evals that tolerate variance

Exact-match deterministic evals will flag infrastructure noise as model regression. You end up chasing phantom regressions instead of real ones. Replace at least three of your exact-match checks with semantic similarity scoring, cosine distance against an embedding model, or an LLM-as-judge approach with a rubric. The engineering investment is typically $15,000-$30,000 for a mid-market team, depending on your eval suite's size. The payback is the first time it correctly distinguishes noise from a real model change.

3. Log model identity on every request

Model name alone is insufficient for debugging production issues. Log the model name, the API-reported request timestamp, and your internal request ID on every call. When a customer reports an issue, you need to correlate their experience to the deployment surface that served them. Without this, you're reconstructing events from memory and anecdote, trying to narrow down a multi-day investigation window. With it, a thirty-minute investigation replaces twelve days.

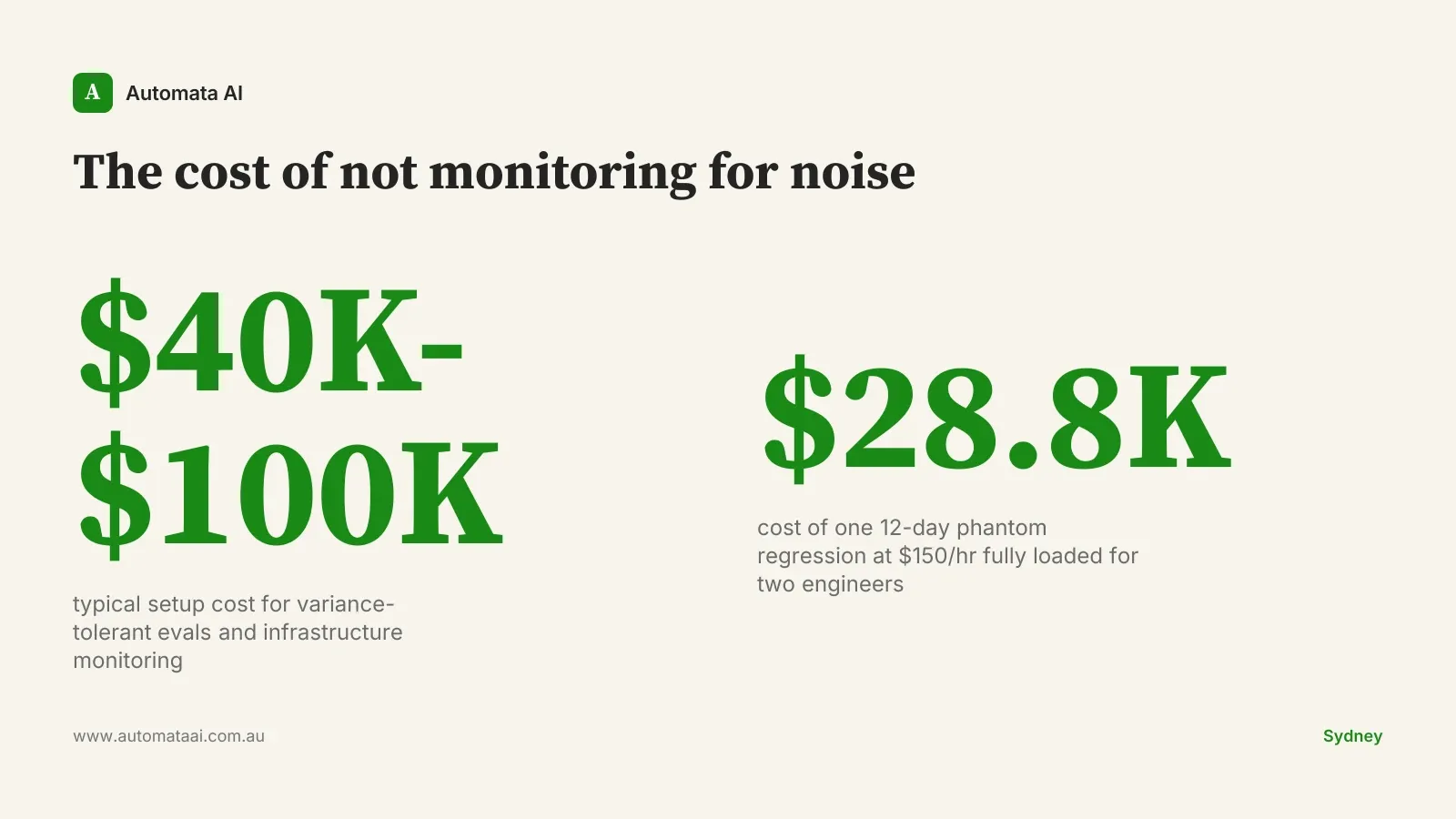

The cost of building this posture

For an Australian enterprise running production Claude across compliance, document review, or professional services workflows, getting to this posture costs $40,000-$100,000 in initial setup: engineering time, tooling, and the first round of semantic eval rewrites. That is a real number, and it is worth being direct about it rather than burying it in a generic 'reach out to discuss' frame.

The payback framing: a twelve-day investigation by two engineers at $150 per hour fully loaded costs approximately $28,800 in people time, before opportunity cost or the CX impact of the unresolved customer issue. Build the posture once and you confirm infrastructure noise in thirty minutes, not twelve days. Do that twice and the investment has covered itself.

Start with the logging. It's a half-day change with diagnostic value that compounds across every future incident. The eval rewrites can follow when you have the sprint capacity. The goal isn't perfect observability on day one. It's having enough signal to stop spending twelve days on phantom regressions.