It is 8:30am on a Tuesday. A consultant at a mid-sized Sydney recruitment agency has 187 unread CVs sitting in her inbox, a job description to write before the 10am client call, and two reference calls from yesterday still not typed up. None of that is recruitment work. All of it is writing and administration.

This is what the industry consistently flags as the four-hour problem. A senior consultant billing 35 hours of placement work per week spends roughly 12 of those hours on writing tasks: JDs, screening notes, candidate submissions, and reference summaries. At a fully loaded cost of around $90 per hour, 12 hours a week over a 52-week year comes to $56,160, so more than $56,000 of recoverable capacity per consultant per year. For an agency with five consultants, that figure crosses $280,000 before accounting for the quality improvements that come from consistent output. Our ROI Calculator can model this against your specific billing rate and headcount.
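For readers who want to check the arithmetic against their own numbers, here is a minimal sketch in Python. The function name and the 52-week default are assumptions made for illustration. This is not the ROI Calculator itself, and a real model would also subtract leave and public holidays.

```python
def recoverable_capacity(writing_hours_per_week: float,
                         hourly_cost: float,
                         consultants: int = 1,
                         weeks_per_year: int = 52) -> float:
    """Annual dollar value of consultant time spent on writing tasks.

    Assumes every week of the year carries the same writing load,
    which overstates the figure for anyone who takes leave.
    """
    return writing_hours_per_week * hourly_cost * weeks_per_year * consultants


# Figures from the article: 12 writing hours a week at $90 per hour.
per_consultant = recoverable_capacity(12, 90)
five_consultants = recoverable_capacity(12, 90, consultants=5)

print(f"Per consultant:   ${per_consultant:,.0f}")    # $56,160
print(f"Five consultants: ${five_consultants:,.0f}")  # $280,800
```

Dropping `weeks_per_year` to 46 or 48 gives a more conservative figure for consultants who take full annual leave.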

The breakdown is roughly what you would expect. JD writing takes 30 to 45 minutes per role. Screening 100 CVs takes two to three hours at full attention. A candidate submission is 20 to 30 minutes. A reference-call write-up, if the consultant does it at all, is another 15 to 20 minutes. These are four discrete writing tasks, each with a defined input and a standard output, and each a strong fit for automation.

Claude Skills are the most direct way to recover that capacity.

What a Claude Skill actually is

A Claude Skill is a pre-configured AI assistant with a defined role, a fixed set of inputs, and a constrained output format. Unlike a general AI chat interface, a Skill knows exactly what it is for. The JD-drafting Skill knows the agency's voice guide, three to five exemplar JDs in the right tone, the discrimination-safe language patterns required under the Fair Work Act 2009, and the list of phrases the agency has flagged as off-brand or risky. It does not need new instructions each time. You give it the intake notes. It gives you a draft.

Most recruitment agencies are not technology teams. A Skill can be configured and deployed in four to six weeks without touching the agency's existing CRM or applicant tracking system. The inputs are reference documents: voice guides, exemplar JDs, calibration notes on what makes a strong candidate in a given discipline. The technical complexity is low. The value comes from the clarity of the agency's own standards.

For agencies operating in the professional services sector, four processes account for the bulk of that 12-hour writing load. Each has a clearly defined set of inputs and produces a predictable output format. That shape is exactly where Skills operate best.

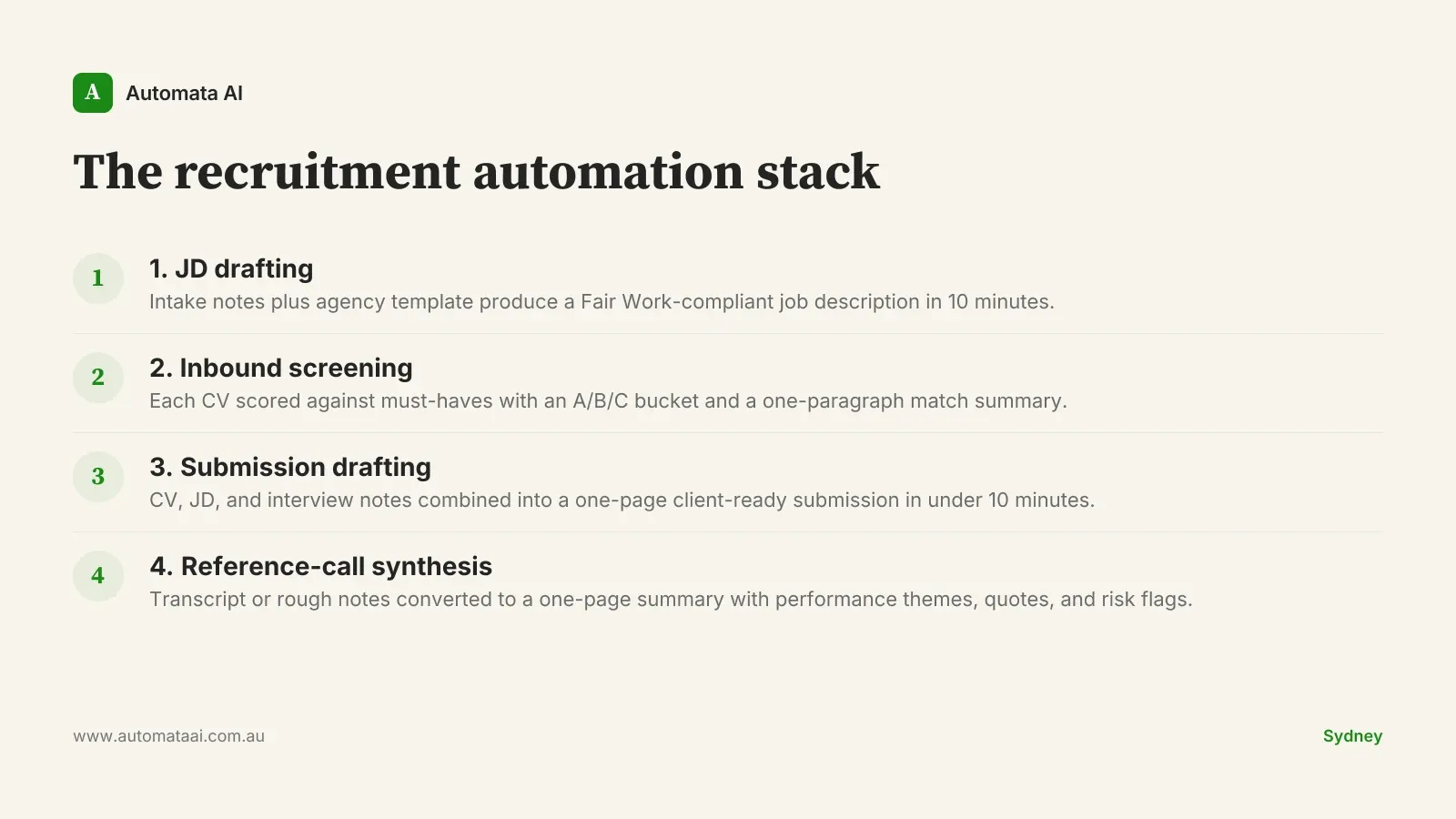

The recruitment automation stack

1. JD drafting

The intake call produces a set of notes: the role title, the must-haves, the nice-to-haves, and the compensation band. Without a Skill, the consultant translates those notes into a polished job description by working from memory against a blank document. With a Skill, the notes feed directly into a prompt that already knows the agency's house template, the tone expected on SEEK and LinkedIn, and the discrimination-safe language required under the Fair Work Act 2009. Time per JD drops from roughly 45 minutes to about 10 minutes of consultant editing. The consultant still reviews and adjusts. They are no longer starting from zero.
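To make "the notes feed directly into a prompt" concrete, here is a rough sketch of how the fixed reference material and the per-role intake notes might be combined. Every name here (`IntakeNotes`, `build_jd_prompt`, `find_flagged_phrases`) is hypothetical, and no model call is shown. It illustrates only the shape of the input, not how any particular Skill is packaged.

```python
from dataclasses import dataclass


@dataclass
class IntakeNotes:
    """What the consultant captures on the intake call (hypothetical shape)."""
    role_title: str
    must_haves: list[str]
    nice_to_haves: list[str]
    compensation_band: str


def build_jd_prompt(notes: IntakeNotes, voice_guide: str,
                    exemplars: list[str], flagged_phrases: list[str]) -> str:
    """Combine the agency's fixed reference material with one role's notes."""
    sections = [
        "Draft a job description in the agency's house style.",
        "VOICE GUIDE:\n" + voice_guide,
        "EXEMPLAR JDS:\n" + "\n---\n".join(exemplars),
        "PHRASES TO AVOID:\n" + "\n".join(f"- {p}" for p in flagged_phrases),
        "ROLE TITLE: " + notes.role_title,
        "MUST-HAVES:\n" + "\n".join(f"- {m}" for m in notes.must_haves),
        "NICE-TO-HAVES:\n" + "\n".join(f"- {n}" for n in notes.nice_to_haves),
        "COMPENSATION BAND: " + notes.compensation_band,
    ]
    return "\n\n".join(sections)


def find_flagged_phrases(draft: str, flagged_phrases: list[str]) -> list[str]:
    """Return any flagged phrase that still appears in a draft (case-insensitive)."""
    lowered = draft.lower()
    return [p for p in flagged_phrases if p.lower() in lowered]
```

The second function is a cheap backstop for the consultant's review. It catches exact phrases only, so it supplements the editing pass rather than replacing it.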

2. Inbound screening

A role with 80 to 200 applicants produces a screening pile a single consultant cannot properly review in the time the role allows. A screening Skill reads each CV against the role's must-haves, produces a one-paragraph match summary, and assigns a bucket: A for immediate phone screen, B for consideration if the As fall through, C for pass. The consultant reviews the As, samples the Bs, and skips the Cs. Once the Skill is calibrated to the agency's actual standard for an A candidate in that discipline, screening throughput rises to four to six times that of manual review.

No automated rejections. Every C-bucket candidate receives a polite, human-reviewed reply. The Skill flags. The consultant decides.

No protected-attribute reasoning. The Skill prompt must explicitly prohibit analysis based on age, gender, ethnicity, disability, or any other attribute covered under Australian anti-discrimination law.

Full audit trail. Every match summary and bucket assignment is logged with the input that produced it, available for fairness review.
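The three guardrails above can be sketched as a small record type with a validation step and a log entry. This is an illustration under stated assumptions. The field names, the keyword pattern, and the JSON log format are all hypothetical, and the keyword check is a deliberately crude backstop, not a substitute for the prompt-level prohibition or a human fairness review.

```python
import json
import re
from dataclasses import dataclass, asdict
from datetime import datetime, timezone

BUCKETS = {"A", "B", "C"}

# Illustrative and deliberately incomplete. A real list would be reviewed
# against the agency's equal employment opportunity policy.
PROTECTED_TERMS = re.compile(
    r"\b(age|aged|gender|ethnicity|disability|pregnan\w*|religio\w*)\b",
    re.IGNORECASE,
)


@dataclass
class ScreeningResult:
    candidate_id: str
    role_id: str
    bucket: str                      # "A", "B", or "C"
    summary: str                     # the one-paragraph match summary
    cv_text: str                     # the input that produced the output
    needs_human_review: bool = True  # the Skill flags, the consultant decides


def validate(result: ScreeningResult) -> list[str]:
    """Return a list of problems; an empty list means the record can be logged."""
    problems = []
    if result.bucket not in BUCKETS:
        problems.append(f"unknown bucket: {result.bucket!r}")
    if PROTECTED_TERMS.search(result.summary):
        problems.append("summary mentions a protected attribute")
    return problems


def audit_record(result: ScreeningResult) -> str:
    """Serialise the output together with its input, plus a UTC timestamp."""
    record = asdict(result)
    record["logged_at"] = datetime.now(timezone.utc).isoformat()
    return json.dumps(record)
```

Storing `cv_text` alongside the summary is what makes the log usable for fairness review: the reviewer sees both the output and the input that produced it.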

3. Submission drafting

A candidate submission to the client is part CV summary, part sales document. A submission Skill reads the CV, the JD, and the consultant's interview notes, and produces a one-page submission in the agency's standard format. The consultant adjusts the framing and adds the relationship context that only they have. Average drafting time drops from roughly 30 minutes to under 10.

4. Reference-call synthesis

Reference calls produce 10 to 25 minutes of unstructured conversation that most consultants never have time to write up properly. A synthesis Skill takes a transcript or rough notes and produces a one-page summary: key performance themes, direct quotes, and any risk flags worth surfacing with the client. The output is consistent across candidates. Consultants who previously skipped written reference summaries because of time pressure now produce one for every placement, which means clients receive better documentation on every hire.

When this is the wrong approach

Skills are not a fix for bad process. If the agency does not have a documented voice guide and at least three exemplar JDs in the right tone, the JD-drafting Skill has nothing to calibrate against and produces generic output. One Melbourne agency had 12 consultants writing JDs in slightly different styles for the same client. The Skill could not choose between them. The style problem had to be resolved first. If the screening criteria for a role are vague, the screening Skill produces vague summaries. Process discipline comes before automation.

Volume matters too. An agency placing fewer than roughly eight to ten roles per month will not recover enough consultant time to justify a four-Skill deployment. Our AI Readiness Assessment is designed to surface this calculation before any build work begins, so the agency knows whether the numbers support automation before spending on implementation.

The compliance layer is not optional

The Fair Work Act 2009, the Racial Discrimination Act 1975, and the Sex Discrimination Act 1984 all apply to the hiring process in Australia. If a Skill is involved in screening CVs or summarising reference checks, the prompt architecture must actively prevent protected-attribute reasoning from influencing the output. This is not a disclaimer to add at the end of the design document. It is a constraint that shapes every prompt from the first day of deployment, and it should be reviewed alongside the agency's existing equal employment opportunity policy.

An agency that adds AI to a broken compliance posture does not improve its risk profile. It amplifies the risk.

Skill design, prompt review, and compliance documentation are standard components of our AI Automation Services for the recruitment sector. Every deployment includes an audit log, with Skill outputs and their inputs recorded and retrievable. If a candidate or a regulator asks for the reasoning behind a screening decision, the agency can show it.

The four-hour problem is not a technology problem. The tools exist and the economics work. The decision is whether the agency has the process discipline and the compliance architecture to deploy them responsibly. Get both right and the capacity returns quickly. Get one wrong and the risk grows faster than the saving.