The SWE-Bench results for Claude Sonnet are public. If you run engineering at an Australian mid-market firm and haven't looked at them yet, you're setting your 2026 AI tooling budget on assumptions that are at least two model generations out of date.

That's not a minor detail. SWE-Bench has become the most defensible proxy the industry has for whether a model will actually help your team ship code. And Sonnet's recent numbers have shifted three things that matter specifically to Australian engineering buying decisions.

What SWE-Bench actually tests

SWE-Bench takes real GitHub issues from real open-source projects and asks the model to produce a patch that fixes them. The patch has to apply cleanly and pass the project's existing test suite. No curated tasks. No synthetic exercises. No partial credit for a patch that almost works. A resolved issue means the model read the codebase, reasoned about the bug, and wrote code that the test suite validates.

This is different from most AI coding benchmarks, which measure something closer to autocomplete quality. SWE-Bench measures something closer to what your engineers actually do: read context, diagnose a problem, produce a fix, verify it. Teams evaluating AI coding tools on toy problems and synthetic tasks are measuring the wrong thing. When the buying decision is real, use the benchmark that is too.

Most AI coding benchmarks are gameable. SWE-Bench is harder to game because the test suite is the judge, not a human rater.

What the Sonnet numbers mean for your stack

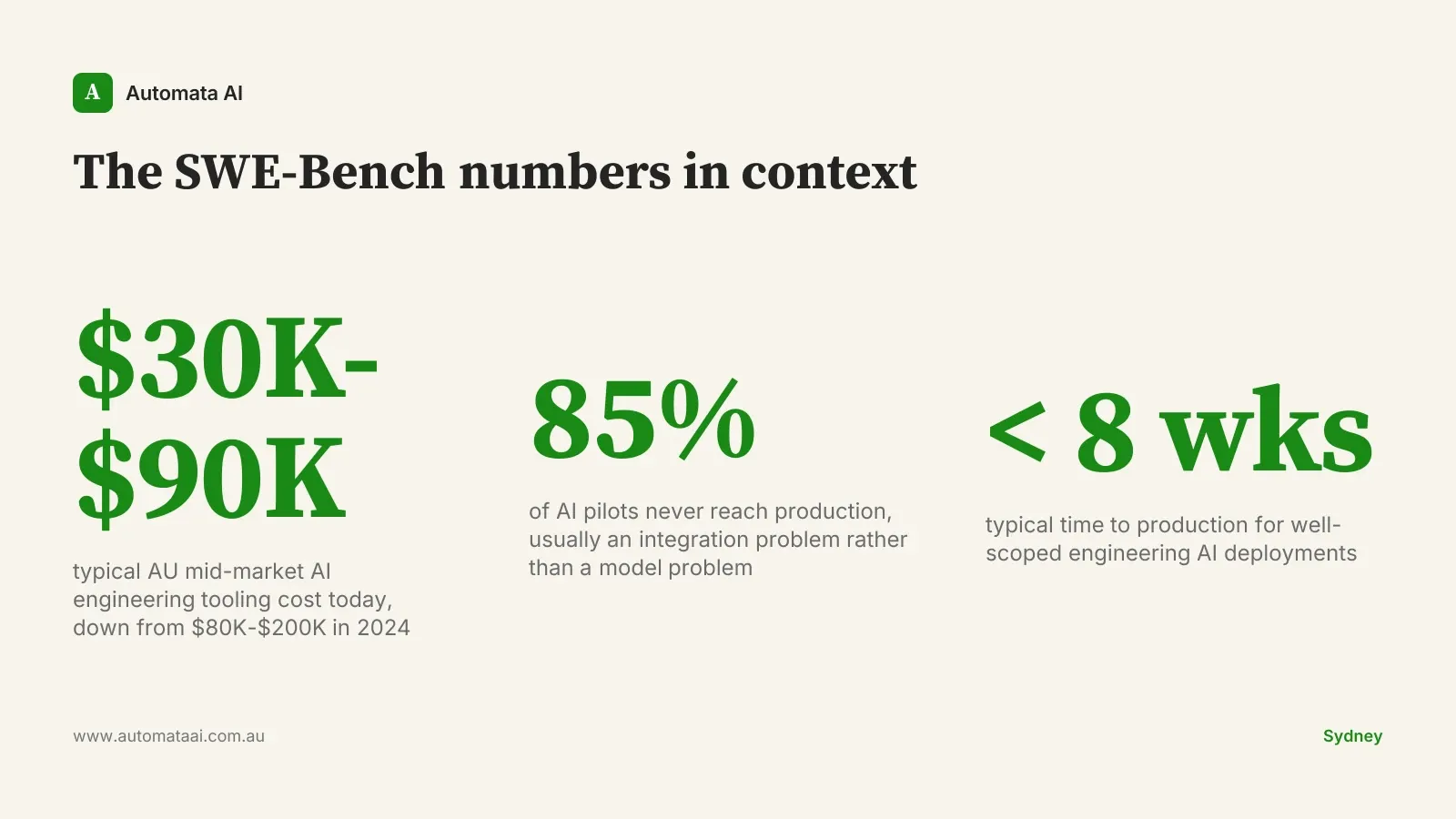

The specific percentage matters less than the direction. Sonnet's performance on the harder verified-difficulty subset has been climbing steeply across recent releases, and it now sits materially above what you would expect for its cost tier. Sonnet punches above its weight class, which is where the buying decision becomes consequential.

A few model generations ago, serious agentic engineering work required Opus. That gap has closed. Sonnet now handles a meaningful fraction of what used to require the most capable model, at a fraction of the inference cost. For Australian mid-market engineering teams building or buying AI tooling on real budgets, this is not a footnote in a release announcement.

For Sydney engineering teams specifically, this means the annual cost of a well-scoped AI engineering tooling deployment has dropped from roughly $80,000-$200,000 in 2024 to $30,000-$90,000 today for equivalent capability. Projects deferred because the model cost made the business case marginal are worth re-modelling now.

Three shifts in the Australian engineering buying decision

These are not predictions about where the technology is going. They are the direct consequence of where Sonnet sits on SWE-Bench right now.

The shift matters most to teams that set their AI infrastructure choices in 2023-2024 and have not revisited them since. The gap between Sonnet and Opus that justified a blanket policy of using Opus for anything serious has narrowed to the point where it is now a per-workload question, not a standing rule.

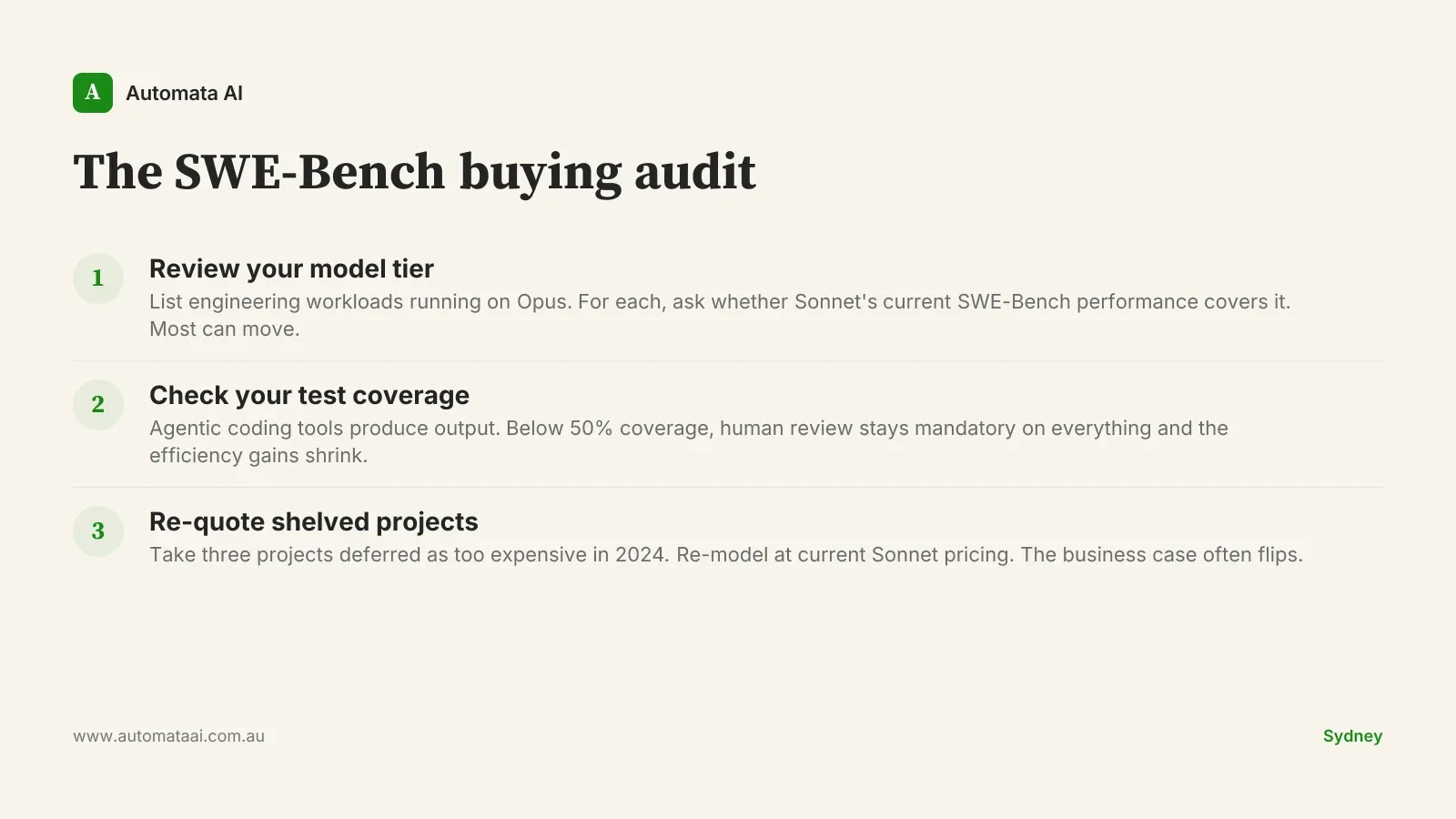

The model-tier default has moved. Teams still routing all engineering workloads to Opus are paying a premium that does not buy meaningful capability uplift for most tasks. Audit your usage before your next renewal. Our AI Automation Services engineering engagements start with exactly this review.

The build-vs-buy threshold for internal tooling has dropped. When Sonnet can resolve real GitHub issues at its current rate, custom internal AI tooling is cheaper to build than most 2024 quotes suggested. Use the ROI Calculator to re-model three shelved projects at current Sonnet pricing before writing them off.

Junior-engineer work is changing faster than most headcount plans account for. SWE-Bench-style tasks, boilerplate, straightforward integrations, simple bug fixes, are historically the work assigned to junior engineers. Australian engineering managers planning 2026 headcount without factoring AI-augmented junior output are going to be surprised by the maths.

When SWE-Bench performance doesn't translate to your team

The benchmark is legitimate but it is not universal. SWE-Bench evaluates performance against open-source projects with existing test coverage and documented issue descriptions. Most internal codebases are neither of those things.

If your engineering org has sparse test coverage, a large legacy codebase with poorly documented abstractions, or heavy domain specificity (compliance workflows, bespoke ERP integrations, financial services logic under ASIC or APRA prudential scrutiny), you will see lower practical performance than the benchmark suggests. The model produces code against the context it can read. In a 400,000-line proprietary system with undocumented business rules, that context is thin. The benchmark does not know your business. Your engineers do.

Test coverage below 50%. Agentic coding tools produce output but cannot validate what the test suite cannot catch. Low coverage means human review stays mandatory on everything the model touches, and the efficiency case weakens materially.

Heavy domain specificity without retrieval augmentation. APRA-regulated workflow logic, custom compliance engines, and bespoke financial models require business context the model does not carry by default. Do not assume benchmark performance without building the retrieval layer first.

First agentic deployment without context management scaffolding. Starting with an agentic coding setup before solving context supply is a fast path to low-quality output and developer frustration. Get the scaffolding right before scaling the workload.

The 2026 engineering AI checklist for AU teams

Concrete moves worth making this quarter. Not a roadmap. A short list of decisions with near-term payback.

Audit which engineering workloads default to Opus and model the cost delta at Sonnet capability.

Re-quote three previously-deferred internal tooling projects using current Sonnet pricing as the baseline.

Check codebase test coverage before deploying agentic coding tools. Below 50%, build the test suite first.

Brief engineering managers on SWE-Bench-grounded expectations before setting junior headcount plans for 2026.

The engineering teams that recalibrate their AI tooling investment against current model capability this year will carry a structural cost advantage into 2027. The benchmark moved. The buying decision should move with it. If you want to work through what that shift means for your specific stack and team structure, the AI Readiness Assessment is the right starting point.