Most Australian government departments approaching a mid-2026 AI tooling decision assume the answer is already written somewhere in their Microsoft Enterprise Agreement. It often isn't.

Both Microsoft Copilot and Claude meet the basic procurement gates. The meaningful differences sit in data residency architecture, sub-processor disclosure practices, and total cost at scale. A 5,000-seat deployment decision can shift by $4M to $7M AUD over three years depending on which vendor's model fits the actual usage profile.

That's the decision. Here's how to make it.

Procurement paths for both vendors

Microsoft Copilot M365 sits inside most whole-of-government enterprise agreements already. For departments with active Microsoft 365 Business Premium or E3/E5 subscriptions, the Copilot add-on is a line item approval within the existing Microsoft relationship, not a new procurement panel submission.

Claude is available through two routes: directly via the Anthropic API, which processes data in US regions only as of mid-2026, or via AWS Bedrock, where Claude 3.5 and Claude 3 models run inside the Sydney AWS region under AWS's standard data processing terms. Most Australian agencies already hold an active AWS panel arrangement, which means Bedrock access is a line item, not a fresh vendor registration.
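For teams taking the Bedrock route, the region pin is the detail that carries the residency argument. The sketch below is a minimal illustration that uses the Bedrock Runtime `converse` call. It assumes boto3 is installed, AWS credentials are configured, and the placeholder model ID is enabled for the account in Sydney (`ap-southeast-2`). None of that is confirmed here. If an agency calls a cross-region inference profile instead of a plain model ID, it should check which regions that profile can route to before treating the residency gate as met.

```python
"""Minimal sketch: call a Claude model on Amazon Bedrock pinned to Sydney.

Assumptions (not from the article): boto3 is installed, credentials are
configured, and MODEL_ID is enabled for this account in ap-southeast-2.
"""

SYDNEY_REGION = "ap-southeast-2"  # AWS region code for Sydney

# Placeholder model ID: confirm which Claude models are actually served
# in-region for your account before relying on it.
MODEL_ID = "anthropic.claude-3-5-sonnet-20240620-v1:0"


def build_converse_request(prompt: str, model_id: str = MODEL_ID,
                           max_tokens: int = 512) -> dict:
    """Build keyword arguments for the Bedrock Runtime Converse API."""
    return {
        "modelId": model_id,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens, "temperature": 0.0},
    }


def invoke_in_sydney(prompt: str) -> str:
    """Send one prompt to Bedrock in Sydney and return the text reply."""
    import boto3  # imported lazily so the request builder works without boto3

    client = boto3.client("bedrock-runtime", region_name=SYDNEY_REGION)
    # Refuse to proceed if the client resolved to any other region.
    if client.meta.region_name != SYDNEY_REGION:
        raise RuntimeError(f"unexpected region {client.meta.region_name}")
    response = client.converse(**build_converse_request(prompt))
    return response["output"]["message"]["content"][0]["text"]
```

Keeping the request builder separate from the network call makes it possible to review and test exactly what leaves the agency without touching AWS.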

The practical implication: neither vendor creates a procurement-from-scratch problem. The decision shifts to compliance fit and capability match.

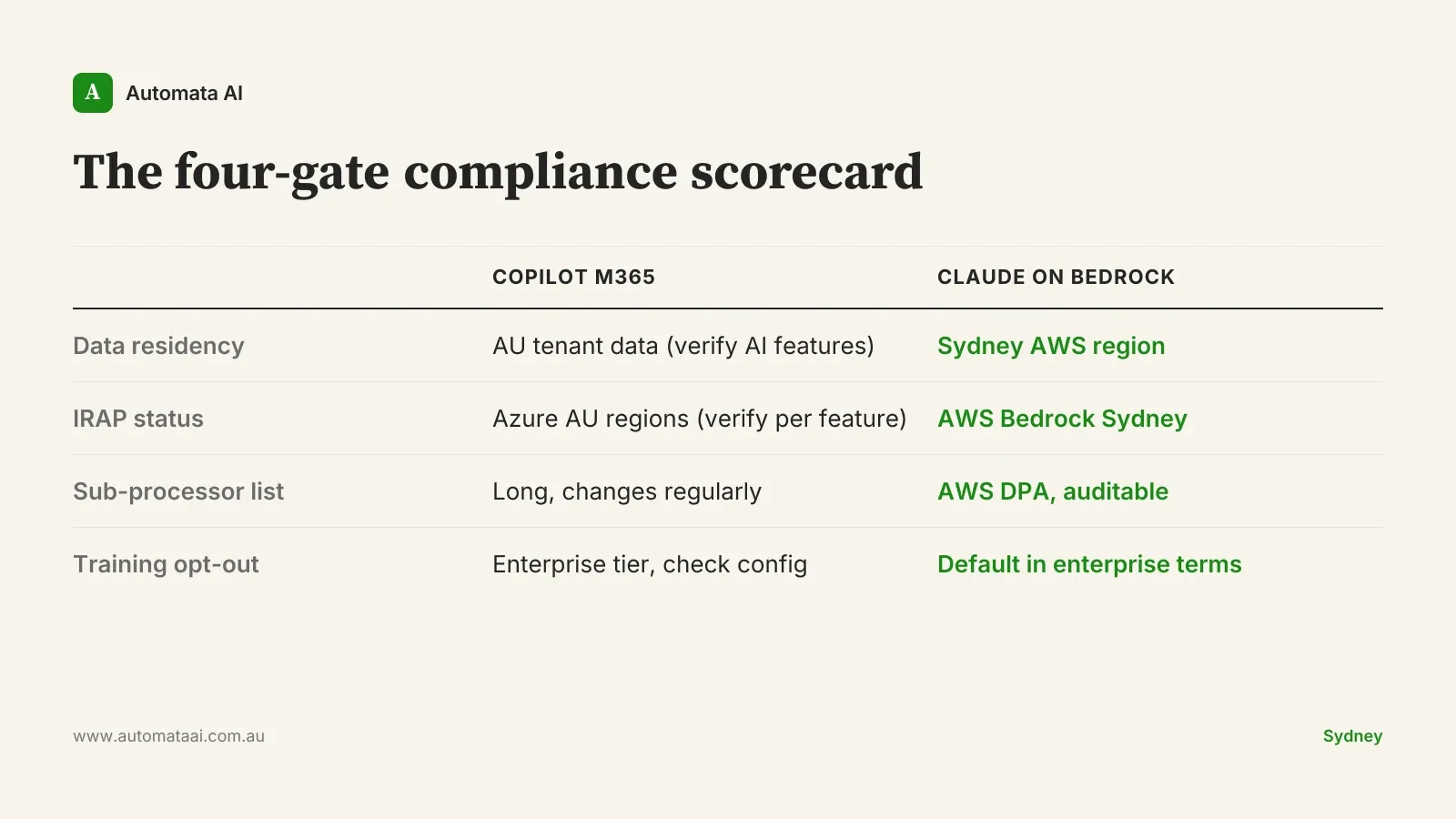

The four-gate compliance test

For Australian public sector work, four gates determine whether an AI product can handle the classification level being considered. Not every agency needs all four at the same threshold. A Department of Finance internal tool carries different requirements from an intelligence-adjacent workflow. But every procurement team should score them explicitly rather than assuming the vendor's marketing materials cover the gap.

Data residency. Under PSPF Policy 8 and the ISM, PROTECTED data must remain onshore or in a government-certified facility. AWS Bedrock in Sydney satisfies this. Copilot M365 has Australian data residency commitments for tenant data, but the processing path for AI features needs verification against the agency's specific classification requirements. Direct Anthropic API does not currently provide Australian data residency.

IRAP assessment status. AWS Bedrock has been IRAP-assessed in the Sydney region, and Microsoft Azure also has IRAP-assessed regions in Australia. If IRAP is a hard gate, both paths have viable routes. Verify the specific feature being assessed, not just the underlying cloud region.

Sub-processor disclosure. Microsoft's sub-processor list is long and updated regularly. Via AWS Bedrock, Anthropic's data handling is governed by AWS's data processing terms, which are detailed and auditable. For agencies reporting on sub-processors under their own privacy frameworks, the Bedrock path is cleaner to document.

Training opt-out defaults. Anthropic's enterprise agreements include no-training-on-customer-data commitments by default. Microsoft's Copilot includes this under enterprise terms, but configuration defaults vary across licensing tiers. Verify the active tier before assuming the protection is in place.
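Scoring the four gates explicitly can be as simple as recording a pass, verify, or fail result per gate for each path, then letting the agency decide which gates are hard gates. The sketch below does that. The path and gate names are hypothetical labels. The example scores are transcribed from the gate discussion above, with anything the text leaves open marked as verify, and they should be replaced with the agency's own findings.

```python
from enum import Enum


class Result(Enum):
    PASS = "pass"
    VERIFY = "verify"  # plausible, but not yet confirmed for this agency
    FAIL = "fail"


GATES = ("residency", "irap", "subprocessors", "training_opt_out")

# Hypothetical path labels; scores transcribed from the gate discussion.
SCORES = {
    "copilot_m365": dict.fromkeys(GATES, Result.VERIFY),
    "claude_bedrock_sydney": {
        "residency": Result.PASS,
        "irap": Result.VERIFY,        # confirm the feature, not just the region
        "subprocessors": Result.PASS,
        "training_opt_out": Result.PASS,
    },
    "claude_direct_api": {
        "residency": Result.FAIL,     # no Australian data residency
        "irap": Result.VERIFY,
        "subprocessors": Result.VERIFY,
        "training_opt_out": Result.PASS,
    },
}


def assess(scores: dict, hard_gates: set) -> dict:
    """Group each path's gates into hard failures, soft failures, and open items."""
    report = {}
    for path, results in scores.items():
        report[path] = {
            "excluded_by": [g for g in GATES
                            if results[g] is Result.FAIL and g in hard_gates],
            "soft_fail": [g for g in GATES
                          if results[g] is Result.FAIL and g not in hard_gates],
            "verify": [g for g in GATES if results[g] is Result.VERIFY],
        }
    return report


# Example: an agency handling PROTECTED data treats residency as a hard gate.
for path, outcome in assess(SCORES, hard_gates={"residency"}).items():
    print(path, outcome)
```

The "verify" list is the useful output. It turns each path's open questions into a concrete checklist for the vendor conversation.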

Where each vendor actually wins

Copilot's advantage is depth of M365 integration. If the department's primary AI use cases are drafting in Word, summarising policy documents in Outlook, generating meeting notes in Teams, and querying SharePoint content, Copilot is the more direct path. Microsoft owns both ends of the workflow, and the integration is tight in ways that custom builds take months to replicate.

Claude's advantage is breadth outside Microsoft's perimeter: custom document review for regulatory submissions, large-scale policy analysis across thousands of pages, agentic pipelines that span case management and finance systems, and developer tooling via Claude Code. A development team of 50 using Claude Code can ship internal automation in weeks that would otherwise take quarters. Microsoft 365 Copilot has no equivalent to Claude Code; Microsoft's closest developer offering, GitHub Copilot, is a separate product with its own licence.

The honest framing: these products aren't competing on the same terrain. The question is which terrain your agency's work actually sits on.

A useful filter: what percentage of the intended AI workload happens entirely inside Office, Teams, and SharePoint? Above 70%, Copilot wins on integration. Below 50%, Claude or a hybrid is worth the analysis. Most of the work we do across our AI Automation Services starts with exactly this question.
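The 70%/50% filter is easy to apply mechanically once the workload is estimated. This sketch assumes each use case is measured in one consistent unit, such as monthly tasks or staff hours, and flagged by whether it happens entirely inside Office, Teams, and SharePoint. The text gives no verdict for shares between 50% and 70%. The sketch labels that band "run both analyses", which is an assumption, and the workload figures are hypothetical.

```python
def m365_share(workloads: dict) -> float:
    """Share of estimated AI workload that happens entirely inside M365.

    `workloads` maps a use-case name to (volume, happens_inside_m365),
    where volume is any consistent unit such as monthly tasks or staff hours.
    """
    total = sum(volume for volume, _ in workloads.values())
    if total <= 0:
        raise ValueError("workload volumes must sum to a positive number")
    inside = sum(volume for volume, in_m365 in workloads.values() if in_m365)
    return inside / total


def integration_filter(share: float) -> str:
    """Apply the 70% / 50% rule of thumb to an in-M365 workload share."""
    if not 0.0 <= share <= 1.0:
        raise ValueError("share must be between 0 and 1")
    if share > 0.70:
        return "copilot: integration advantage"
    if share < 0.50:
        return "claude or hybrid: worth the analysis"
    # The 50-70% band has no stated verdict; this label is an assumption.
    return "mixed: run both analyses"


# Hypothetical workload estimate in monthly tasks.
estimate = {
    "drafting_in_word": (500, True),
    "meeting_notes_in_teams": (250, True),
    "regulatory_doc_review": (150, False),
    "internal_automation": (100, False),
}
share = m365_share(estimate)
print(f"{share:.0%} inside M365 -> {integration_filter(share)}")
```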

When Copilot is the right call

This is the section most comparison posts skip. Copilot is the better procurement decision in several real scenarios, and pretending otherwise is vendor advocacy.

If the department's primary use case is productivity augmentation for non-technical staff (drafting, summarising, meeting notes) and Microsoft 365 Business Premium is already in place, the Copilot add-on at roughly $40-$50 AUD per user per month is low-friction procurement. For 500 seats, that's $240,000 to $300,000 annually with near-zero integration overhead. For that narrow use case, the argument for a different path is thin.
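The per-seat arithmetic is easy to check and to scale. Here is a minimal sketch. It assumes a flat add-on price with no volume discount, and it excludes the underlying Microsoft 365 licence.

```python
def seat_licence_cost(seats: int, aud_per_user_month: float, years: int = 1) -> float:
    """Total add-on licence spend in AUD for a flat per-user monthly price."""
    return seats * aud_per_user_month * 12 * years


# 500 seats for one year at the $40 and $50 AUD bounds quoted above.
low = seat_licence_cost(500, 40)
high = seat_licence_cost(500, 50)
print(f"500 seats, 1 year: ${low:,.0f} to ${high:,.0f} AUD")
```

At 5,000 seats over three years, the same $40 to $50 band comes to $7.2M to $9.0M AUD in add-on fees alone. That puts the $4M to $7M swing described earlier in proportion.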

The economics shift when usage is API-driven. A 2,000-user deployment where most AI interaction happens through custom-built internal tools (query systems, document classifiers, reporting engines) costs materially less per output unit on Claude via Bedrock than on a per-seat Copilot licence. Run the three-year numbers against actual anticipated call volumes in the ROI Calculator before the decision gets anchored to sticker price.
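A rough break-even check makes the per-seat versus per-call comparison concrete. The sketch assumes a fixed average token count per call and uses placeholder AUD token prices. None of the figures are vendor quotes, and the 300 calls per user per month is hypothetical. The sketch also ignores the build, hosting, and governance cost of the custom tools themselves, which belong in any real three-year number.

```python
from dataclasses import dataclass


@dataclass
class ApiProfile:
    """Average token usage per call and token prices (placeholders, not quotes)."""
    input_tokens_per_call: int
    output_tokens_per_call: int
    aud_per_m_input: float   # hypothetical AUD per million input tokens
    aud_per_m_output: float  # hypothetical AUD per million output tokens

    def cost_per_call(self) -> float:
        """AUD cost of one call under this profile."""
        return (self.input_tokens_per_call * self.aud_per_m_input
                + self.output_tokens_per_call * self.aud_per_m_output) / 1_000_000


def three_year_costs(users: int, calls_per_user_month: float,
                     seat_aud_per_month: float, api: ApiProfile) -> dict:
    """Compare 36 months of per-seat licences with usage-based API spend."""
    months = 36
    return {
        "per_seat": users * seat_aud_per_month * months,
        "usage_based": users * calls_per_user_month * months * api.cost_per_call(),
    }


def break_even_calls(seat_aud_per_month: float, api: ApiProfile) -> float:
    """Monthly calls per user at which usage-based spend equals one seat."""
    return seat_aud_per_month / api.cost_per_call()


# Placeholder inputs only: replace with real volumes and quoted prices.
profile = ApiProfile(input_tokens_per_call=2_000, output_tokens_per_call=500,
                     aud_per_m_input=5.0, aud_per_m_output=25.0)
print(f"Break-even: {break_even_calls(45.0, profile):,.0f} calls per user per month")
costs = three_year_costs(users=2_000, calls_per_user_month=300,
                         seat_aud_per_month=45.0, api=profile)
print({name: round(total) for name, total in costs.items()})
```

The break-even figure is the number to bring into the decision. If realistic per-user volume sits well below it, the usage-based path is cheaper on licences alone, though the build and run costs still have to be added before the comparison is complete.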

There is also a valid case for running both. Some agencies deploy Copilot for productivity staff and Claude for development teams and data analysts. This is not hedging. It is tool matching. The governance overhead of two AI vendors is real but manageable when both procurement paths already exist.

Scoring the decision

No single checklist covers every agency profile, but five questions surface the answer quickly.

What percentage of planned AI work happens inside Office, Teams, and SharePoint?

What is the data classification level of the primary use case?

Is developer tooling or custom workflow automation in scope?

What is the three-year volume at realistic usage rates, measured in seats or API calls?

What are the sub-processor and audit log requirements from the agency's privacy team?

Score these before opening a vendor deck. The vendor comparison follows from the answers, not the other way around. If you need help mapping this to your agency's workload and classification profile, our AI Readiness Assessment covers the comparison as part of a focused two-week engagement.

The numbers are not hard to run. The mistake is skipping them when a Microsoft renewal is already sitting on the table.