Gemini Embedding 2 is now GA. Your engineering lead forwarded the benchmark sheet this morning. The benchmark numbers hold up. The per-call pricing is competitive at enterprise volume. And now you're weighing whether to rebuild your Claude RAG pipeline's embedding layer around it.

For most Australian teams, the answer is no. But the answer changes if your corpus is multilingual, or if you're paying the chunking tax on long documents. The workload type determines the right call. Getting it wrong in either direction carries a real cost: either the migration cost you didn't need, or the retrieval quality penalty from staying put when the alternative is genuinely better.

What Gemini Embedding 2 actually does well

Three honest strengths, stated without the vendor positioning:

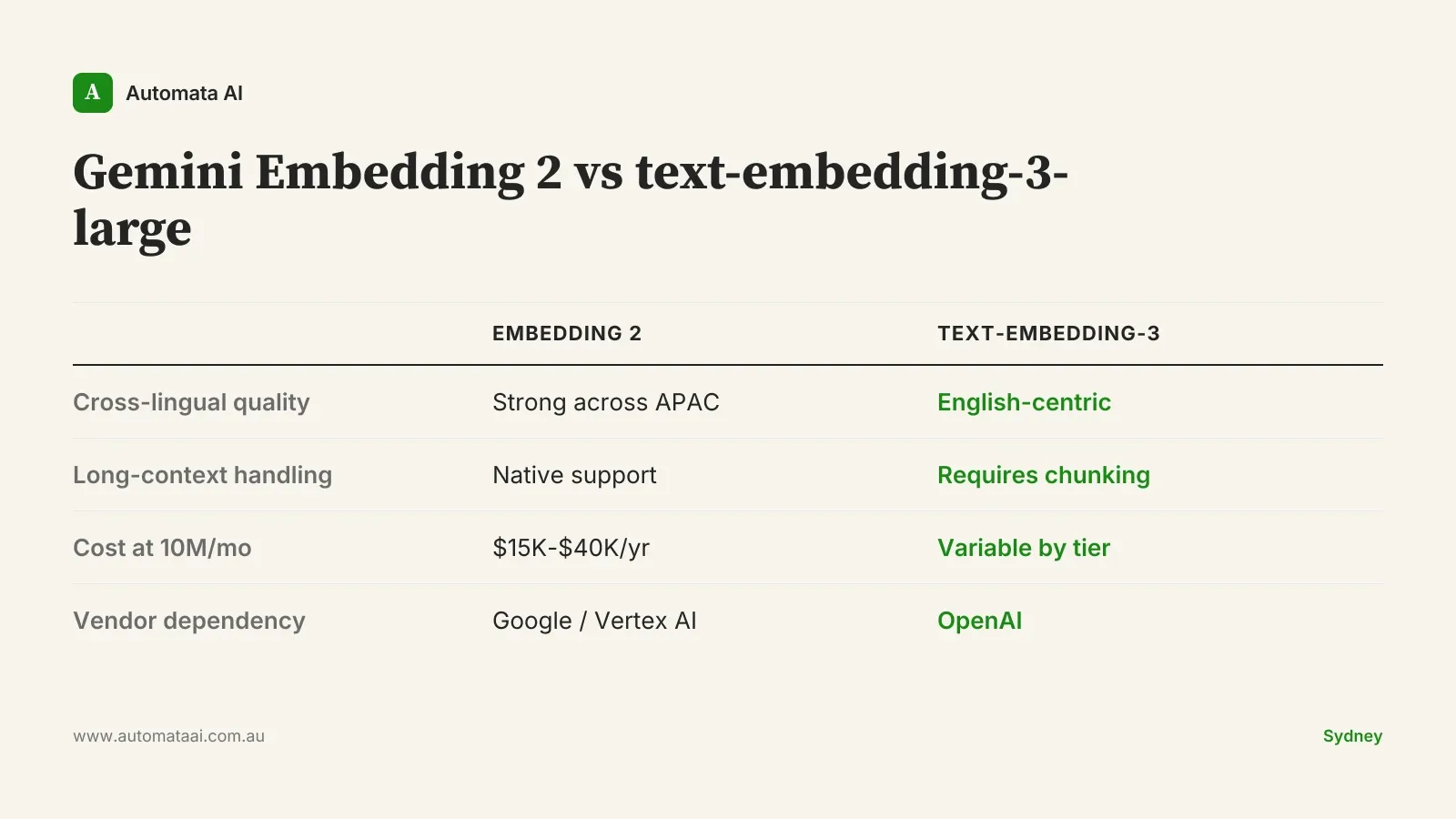

Cross-lingual retrieval. Embedding 2 holds up well across APAC languages. Mandarin, Bahasa Indonesia, and Vietnamese alongside English. For AU companies retrieving content across multiple Asian markets, the difference is measurable. English-centric embedding models consistently return degraded results on non-English content. Embedding 2 addresses this at the model level, not through preprocessing workarounds.

Long-document handling. Most embedding models degrade past a few thousand tokens, which forces chunking logic into your pipeline. Embedding 2 handles long documents more natively. For Australian legal, medical, or technical document corpora, this reduces pipeline complexity and the ongoing maintenance overhead of managing chunk overlap and boundary conditions.

Per-call pricing at volume. A typical Sydney enterprise running 10 million embeddings per month lands in the $15,000 to $40,000 annual range. Competitive with the OpenAI alternatives most Claude stacks currently use.

The category error most Australian teams are making

When engineers frame this as Gemini Embedding 2 vs Claude, they've already misread the stack. Anthropic doesn't ship a first-party embedding model. Your Claude RAG pipeline almost certainly uses OpenAI's text-embedding-3-large, Cohere Embed, or an open-source alternative. Claude handles generation. The embedding layer is a separate component that can be swapped independently.

That distinction matters. This is a component decision, not a platform decision. Switching to Gemini Embedding 2 doesn't affect your generation model, your orchestration layer, or your vector store. You're changing one piece of the pipeline, with one set of switching costs to model and one performance baseline to beat. The rest of your Claude investment stays untouched.

The three-workload RAG embedding decision

Based on our engagements with Australian enterprise RAG teams, the decision splits across three workload types. We call this the RAG Workload Filter: a pre-pilot screen to apply before committing any re-embedding budget. If you're unsure which type describes your corpus, start with an AI Readiness Assessment before running any benchmarks.

English-only AU corpora, moderate document sizes. Stay with your current solution. Re-embedding a typical mid-market knowledge base costs $30,000 to $80,000 when you account for engineering time, pipeline updates, regression testing, and compute. Against that outlay, the quality uplift on English retrieval is under 5% on most standard benchmarks. The switch cost doesn't recover within any reasonable timeframe.

Multilingual or APAC-language corpora. Pilot Embedding 2. The cross-lingual performance difference is measurable and material for AU companies retrieving across multiple languages. Establish a retrieval quality baseline on your actual production documents first, then run the comparison on the same corpus.

Long-document corpora: legal, medical, technical manuals. Pilot Embedding 2. The reduced chunking complexity can simplify your pipeline architecture and lower ongoing maintenance cost. Measure retrieval accuracy before and after on your real documents before committing.

What APRA-regulated teams need to check first

Gemini Embedding 2 runs through Vertex AI. Google Cloud Australia has regions in Sydney and Melbourne, so if your data already flows through GCP under an approved outsourcing arrangement, the path to a pilot is relatively clear. You're not adding a new geographic boundary. You're adding a new service within an existing arrangement.

AWS-only stacks require a more careful look. A large portion of Australian financial services runs on AWS, and introducing Vertex AI creates cross-cloud data movement. Under APRA CPS 230, material outsourcing arrangements covering data processing require board-level approval and formal vendor due diligence. A quick embedding pilot is not quick if legal and risk haven't cleared the arrangement. Build that compliance pathway into your timeline before running a single benchmark. We cover the full compliance posture for AI infrastructure decisions in our Australian financial services AI guide.

When the switch is the wrong call

The English-only case is the clearest no. Standard RAG benchmarks put Embedding 2 and text-embedding-3-large within 3-5% of each other on English retrieval tasks. That gap is within measurement noise for most real-world applications. It doesn't justify a $30,000 to $80,000 re-embedding project. If someone on your team is pushing for the switch on English corpora, ask them to model the payback period. The number won't support the case.

The teams that gain the most from Embedding 2 are the ones whose current solution is genuinely underperforming on multilingual or long-document tasks. If neither describes your situation, the embedding layer is not where your RAG quality problems live. Improving generation quality, context window strategy, and retrieval evaluation will deliver more lift per dollar than any embedding model swap. Automata AI runs head-to-head embedding pilots for Australian enterprise teams, including switch-over cost modelling and data residency analysis. See our AI Automation Services to understand how we structure these engagements.

The benchmark sheet tells you Embedding 2 is capable. It doesn't tell you whether the switch makes sense for your corpus, your cloud posture, or where your retrieval quality is actually falling short. Apply the RAG Workload Filter. Run the pilot on the right corpora. Model the switch cost before the pilot starts, not after.