A fintech team in Sydney spent three months building a customer-facing voice assistant on Gemini. Latency was good. The output audio was clean. When test queries were short and predictable, like account balances and branch hours, it worked well.

Then they tried handling a complex superannuation enquiry mid-call and the reasoning fell apart.

They're now six weeks into rebuilding on Claude paired with a TTS provider. Neither stack decision was wrong in isolation. The first was wrong for that workload.

That's the real frame when choosing between Gemini's voice stack and a Claude-based approach. Not which sounds better in a demo. Which one matches what you're actually asking the AI to do.

What Gemini Flash TTS brings to the table

Google's Gemini Flash TTS is built for speed. Sub-second synthesis, natural-sounding output, multiple speaker voices, and native integration with Gemini's reasoning layer. The combined stack is designed to work together, which means lower latency, simpler infrastructure, and a single billing relationship.

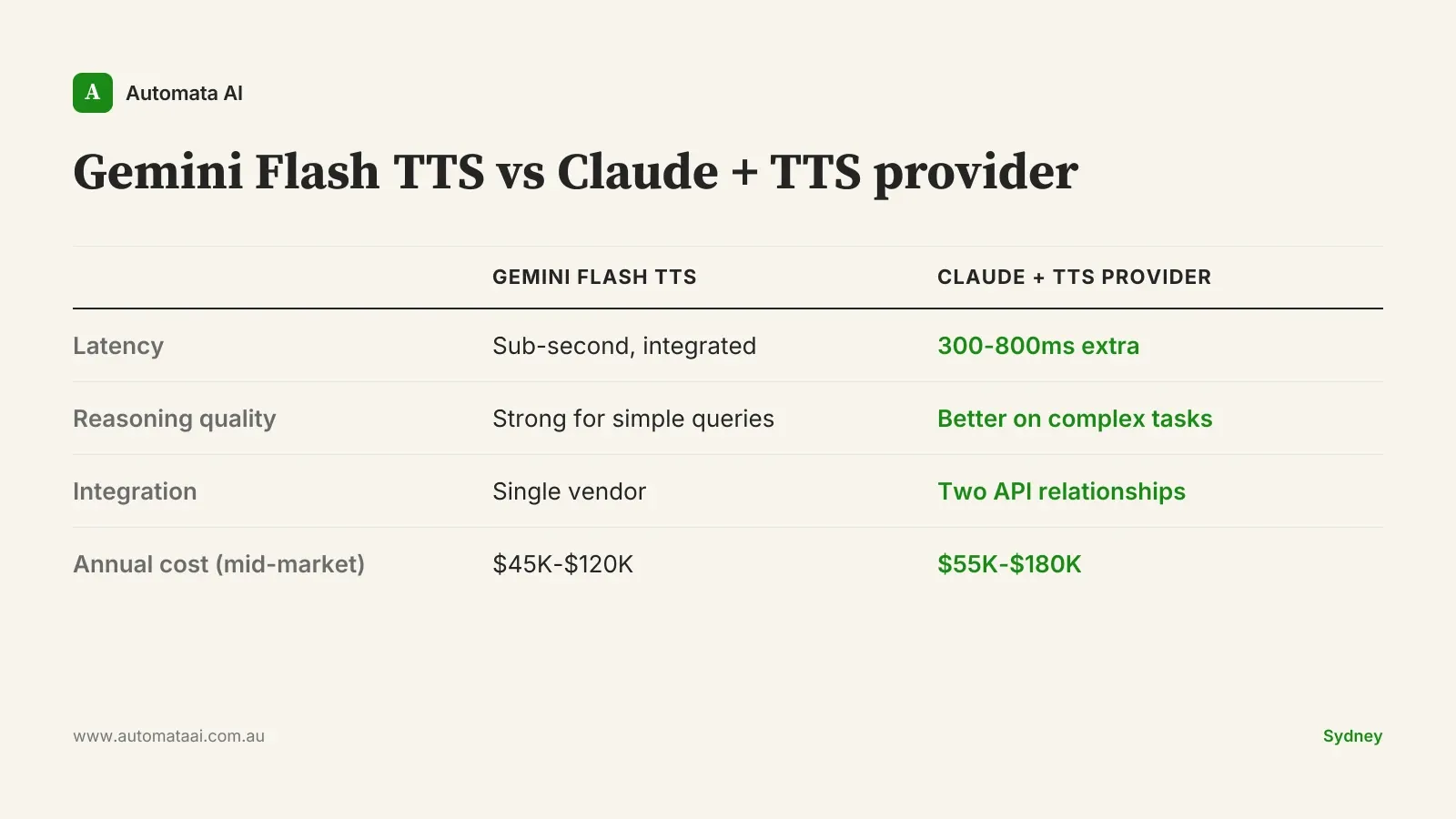

That integration also reduces the surface area for things to break in production. Fewer API hops, fewer failure modes, and monitoring that doesn't require threading data across two vendor consoles. For Melbourne retail teams running automated store enquiry lines, or any organisation handling a high volume of short, predictable queries, the tight single-vendor stack is a genuine operational advantage.

The per-request pricing is also competitive. At volumes above 200,000 monthly requests, Gemini Flash TTS can come in meaningfully cheaper than a modular Claude-plus-TTS approach.

Where Claude-based voice stacks lead

Claude doesn't ship a first-party TTS model. A Claude voice stack means Claude for reasoning paired with a TTS provider: ElevenLabs, Cartesia, OpenAI TTS, or sometimes Google's own synthesis via Vertex AI. Modular by design.

The value lives in the reasoning layer. Claude's ability to follow complex multi-step instructions, use tools mid-conversation, and hold coherent context across a long call is where the performance gap shows. For voice interfaces where the AI needs to retrieve and reason over documents, answer detailed questions, or hand off to a human with a complete summary, Claude-based stacks produce better outcomes on the tasks that matter.

The flip side of that complexity is flexibility. You can swap TTS providers without touching the reasoning layer, which is useful if pricing shifts, if you need a different voice library, or if a provider's data residency terms change. The reasoning and voice layers are independently upgradeable.

The tradeoff is real engineering overhead: two API relationships, two latency legs, two billing lines, and the observability tooling to monitor all of it. That cost belongs in the business case.

Three workload patterns and how to pick

Most Australian voice AI deployments fall into one of three patterns:

Short-answer interfaces. Simple queries, predictable intents. Gemini Flash TTS plus Gemini reasoning is the right call. Latency advantage is real, integration is tight, and you're not asking the model to do anything reasoning-intensive. Single-vendor stack wins.

Conversational interfaces with complex queries. Policy interpretation, complex account enquiries, anything touching regulated financial products. Claude plus a TTS provider wins. An Australian financial services team handling APRA-regulated product enquiries by voice cannot afford hallucination in the reasoning layer, and the quality gap is real.

Internal tools and accessibility applications. Meeting transcription with action extraction, accessibility tools with summarisation, internal knowledge retrieval by voice. Teams already running Claude for document-based workflows typically extend that stack rather than introducing a second model.

When voice AI is the wrong choice

Not every voice deployment should proceed. The category gets a lot of enthusiasm right now, which means some teams are building voice interfaces for workloads that don't need them.

If your end-to-end latency requirement is under 400ms on a reasoning-intensive task, neither stack reliably delivers that today. TTS pipelines add 300 to 800ms on top of the underlying inference time. A well-designed typed interface will perform better for users who need fast answers to complex questions.

Voice data also carries obligations under the Australian Privacy Principles that teams consistently underestimate. Audio buffers, even temporary ones, are personal information. Before building, confirm your TTS provider's data residency, retention policy, and whether audio feeds model training. For businesses in financial services, healthcare, or professional services, get legal sign-off on the voice data flow before going to production. OAIC enforcement has reached mid-market organisations.

If the compliance review budget isn't there, don't build a voice interface to cut costs on a typed one. It won't cut costs.

Cost framing for Australian teams

A mid-market voice deployment running 300,000 to 500,000 requests per month, typical for a Sydney or Melbourne firm in financial or professional services, runs $45,000 to $180,000 per year in inference costs. The range is wide because query length and session duration vary significantly across use cases. A customer service line answering simple questions sits at the lower end. A voice assistant that retrieves policy documents mid-call sits at the top.

Gemini Flash TTS is competitive on per-request pricing at higher volumes. A Claude plus third-party TTS stack typically costs 15 to 25 percent more per request, though the gap narrows if you already have Claude on contract with volume pricing.

The real cost difference often sits in integration and ongoing maintenance. Budget an additional $20,000 to $40,000 per year for the engineering overhead of managing a modular Claude-plus-TTS stack compared to a single-vendor Gemini deployment. That number is regularly absent from initial procurement conversations.

For most mid-market teams, the decision comes down to two questions: how reasoning-intensive is this workload, and do we already have Claude on contract? If the answer to both is yes, the incremental cost of adding a TTS provider is modest. If the answer to both is no, a single-vendor Gemini stack is likely cheaper and faster to deploy.

The pilot that gets rebuilt is expensive, both in time and in credibility with the team that signed it off. Map your voice workloads by latency sensitivity and reasoning complexity before committing to a stack. For any workload where the choice is unclear, a four-week head-to-head on real production queries costs far less than three months of rebuilding after the wrong call.