gpt-image-2 hit number one on the Image Arena leaderboard and that was enough for half your team to start arguing you should switch. The capability is real.

For most Australian marketing and product teams generating images at scale, the answer depends less on the model and more on whether you're solving a generation problem or a workflow problem. Those are different things. The model is already good enough for most production use cases. The workflow is where most teams are actually losing time.

What gpt-image-2 actually delivers

Three improvements are worth naming directly, because they map to specific production constraints Australian teams actually run into. Not all of them will matter for your workflow, and that is the honest framing.

Text rendering is materially better. Generated images with visible text (signage, product labels, UI mockups, localised ad creative) come out cleanly. Previous-generation models failed this routinely. If you've watched a designer spend 45 minutes correcting a generated image because the text was garbled, you know the cost. Any workflow that includes images with readable text should run a pilot.

Multilingual prompt fidelity matters for APAC distribution. Australian companies operating across Southeast Asia and East Asia have been let down by image models that handle English prompts well but produce degraded output in Mandarin, Bahasa Indonesia, or Japanese. gpt-image-2 is notably more accurate here. For teams distributing visual content from Sydney or Melbourne into APAC markets, this is a concrete operational improvement, not a feature-sheet talking point.

Resolution and aspect-ratio flexibility reduces post-processing steps. Native support for multiple aspect ratios up to 2K means fewer manual crops and resizes between generation and production placement. For a team running 3,000 to 5,000 images per month, those steps can add up to 15 to 20 hours of production time monthly.

The cost reality for Australian production teams

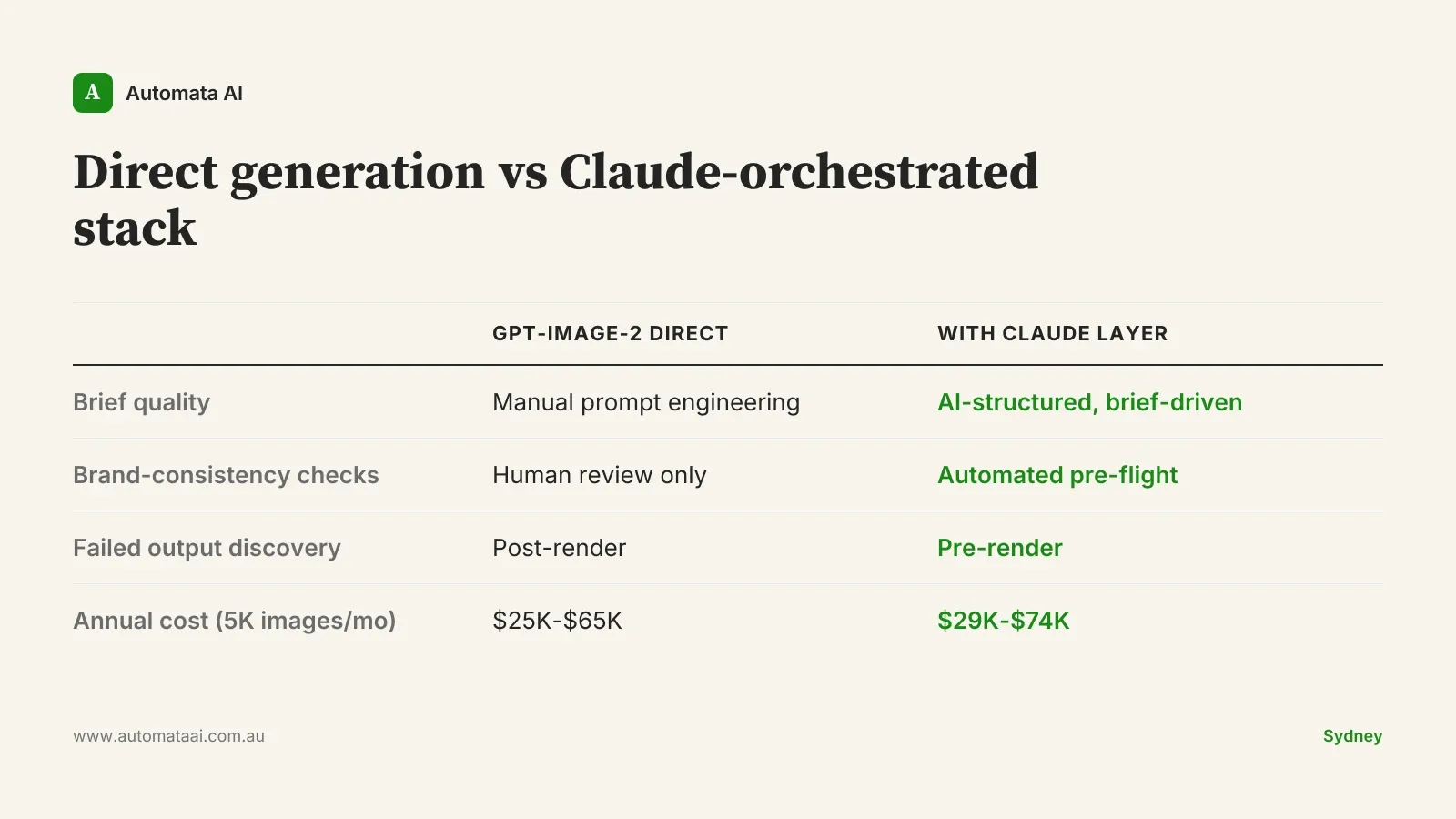

If your team is generating 5,000 production images per month, annual API costs with gpt-image-2 sit roughly in the $25,000 to $65,000 range, depending on resolution tier and quality settings. That's the direct API line item only. It doesn't include integration work, an orchestration layer if you build one, or the human review time for outputs that don't clear your quality bar.

The number that reframes the decision is your current cost per approved image. If a designer is spending 20 to 30 minutes per image on prompt iteration, output selection, and post-processing, and your team is producing 2,000 images per month, that's 40,000 to 60,000 minutes of designer time annually. At $85 to $120 per hour fully loaded, that's $57,000 to $120,000 in labour for generation and refinement alone. That's before you account for the time spent reviewing and discarding failed outputs. Most teams aren't tracking this cost accurately, which is why the API economics can look deceptively expensive until you model the full comparison.

Where Claude orchestration changes the calculation

Claude doesn't generate images. What it does is handle everything around image generation that determines whether the output is production-usable.

A brief-driven production loop works like this. The campaign brief enters Claude with your brand guidelines, placement specs, and copy requirements. Claude generates structured prompts for each placement, with aspect ratio, style constraints, and brand notes translated into precise image generation instructions. Those prompts feed into gpt-image-2. The outputs return through Claude for automated review against the original brief before a human touches the batch. The key is that brief structure is applied consistently across every prompt, not left to individual team members' judgment on how to write generation instructions.

The economics are straightforward. For 5,000 images per month, Claude API costs for prompt generation and output review add roughly $4,000 to $9,000 annually. If that layer catches 10 to 15 percent of outputs that would otherwise need designer rework at $85 per hour, the maths resolves quickly. You're not paying for Claude on top of gpt-image-2 because it's elegant. You're paying for it because it moves failure detection upstream, where it's cheap, rather than downstream, where it costs designer time.

When adding gpt-image-2 is the wrong call

Not every team should add this to their stack. The integration cost is real and the business case needs to be honest before you commit engineering time. Three clear signals to hold off:

Volume is under 500 images per month. The API economics don't justify the integration investment. A $15,000 to $25,000 integration project against $5,000 in annual API savings has a payback period that should embarrass any business case presented to a CFO.

Brand consistency is your primary constraint and you haven't built the brief layer. No image generation model produces brand-consistent output without guardrails above it. Adding gpt-image-2 to a workflow already drifting off-brand accelerates the problem. Solve the brief-quality and review layer first.

Your sector has unresolved AI imagery questions. Financial services firms operating under APRA CPS 230, or companies handling personal information under the Privacy Act 1988, need clarity on provenance and consent before distributing AI-generated visuals at scale. Run a sandboxed pilot with no production distribution until legal has signed off.

What to run first

If your conditions are right (volume above 1,000 images per month, brand guidelines documented, legal clearance in hand), a 30-day two-track pilot is the practical starting point. Thirty days is enough to generate a meaningful quality and cost dataset for both tracks before committing to an architecture.

Track one: run gpt-image-2 directly via API on your highest-volume, most templated workload. Product variants, social templates, localised assets with consistent structure. Track your quality clearance rate and cost per approved image.

Track two: take one brief-driven creative campaign through a Claude-orchestrated workflow. Provide Claude with your brand guidelines and the campaign brief. Let it structure the prompts. Compare the clearance rate and brand-consistency score against track one.

The gap between those two tracks answers the question your budget process will eventually ask: is the orchestration layer worth paying for, or is your workload simple enough that direct generation suffices. For most teams producing template-driven assets at scale, the direct track wins on cost. For teams producing brief-driven creative work across multiple channels, the orchestrated track almost always wins on brand consistency and rework rate.

The teams that extract the most value from gpt-image-2 are not the ones who adopt it earliest. They're the ones who've already built a workflow that can absorb a new generation layer without degrading brand consistency or increasing human review load. Build that workflow before you change the model. The model will keep improving. Your workflow is the variable you control.