The model tier question comes up early in any AI architecture conversation. GPT-5.5 Pro and Claude Opus 4.7 extended thinking are both pitched as the solution for hard reasoning workloads. The demos are convincing. The price gap is real. And most Australian production teams make the call before they have run a single comparison on their own data.

The right question is not which architecture is smarter. It is which architecture earns its cost on the workloads you actually run at production scale.

For a Sydney or Melbourne engineering team processing 40,000 to 60,000 high-stakes model calls per month, that distinction has a direct AUD figure attached to it. Getting the architecture wrong does not just affect quality. It affects the bill.

What the architecture difference actually is

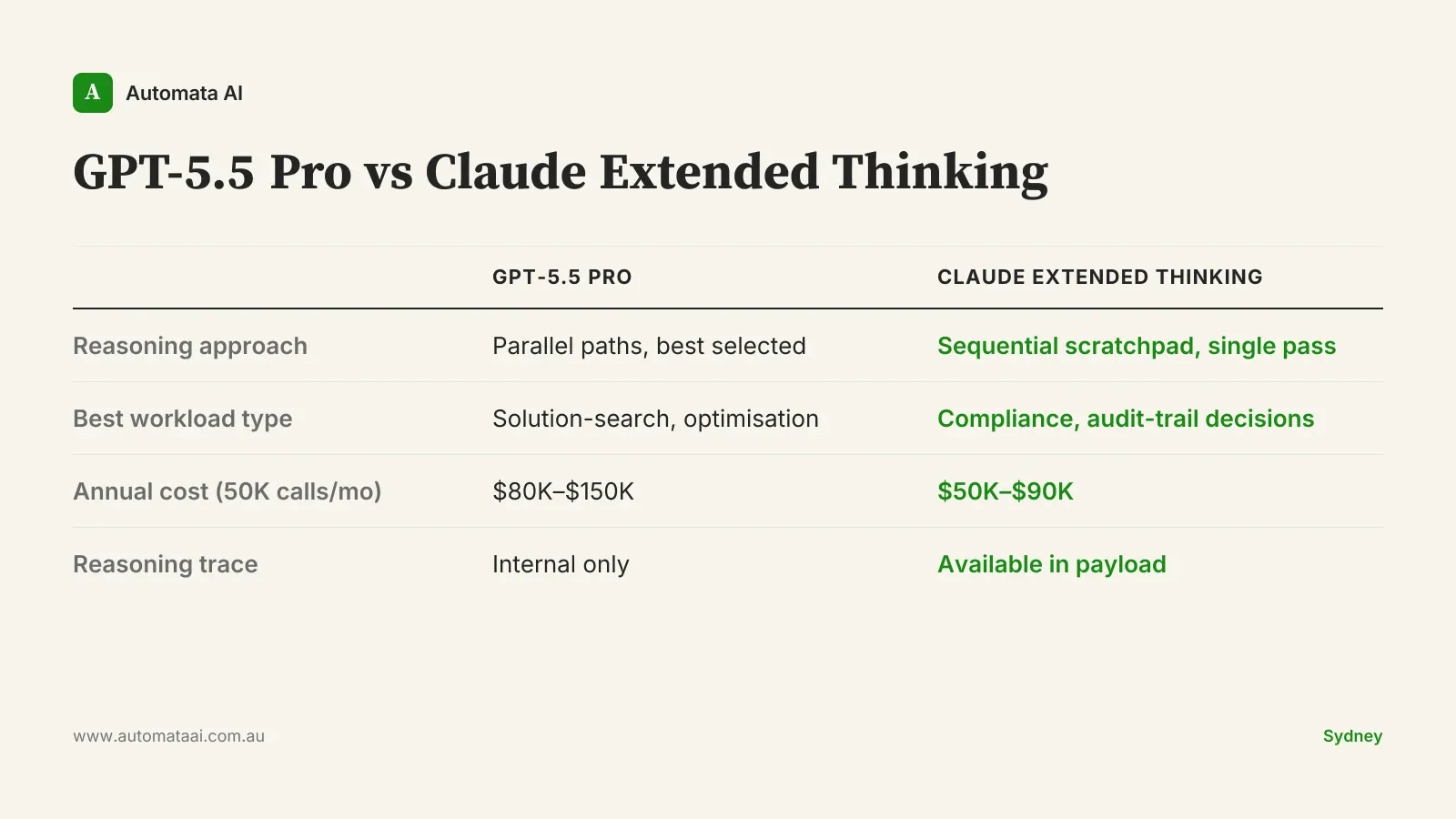

GPT-5.5 Pro uses parallel test-time compute. The model generates multiple reasoning paths concurrently, samples across them, and returns the strongest result. Latency is lower than serial deep thinking because paths run simultaneously. Cost reflects the compute multiplier: you are paying for multiple reasoning runs, not one.

Claude Opus 4.7 with extended thinking uses a sequential reasoning pass in a private scratchpad. The model works explicitly through a problem before committing to an answer. The full reasoning trace is available in the response payload. Latency is higher than the standard model. Cost scales with how long the model needs to think.

These are not equivalent approaches with different branding. They were optimised for different task structures.

Where each architecture earns its cost

Parallel exploration of the solution space

GPT-5.5 Pro's parallel architecture has a genuine advantage when the problem benefits from exploring multiple solution paths. Complex code refactoring where several valid approaches exist, combinatorial optimisation, mathematical proof verification. On these workloads you get diversity of reasoning, not just depth. The model samples a range of approaches and returns the strongest. Three engineers at a whiteboard produce better solutions than one engineer thinking harder. GPT-5.5 Pro is the computational version of that. The quality lift is real, but it is workload-dependent.

Structured transparent reasoning

When the value is in the working, not just the answer, extended thinking changes the calculation. APRA CPS 230-sensitive assessments, credit decisions requiring an audit trail, protocol matching in regulated healthcare. These workloads need reasoning that a risk officer or compliance analyst can inspect, challenge, and sign off on. A parallel system that returns the best answer from five internal reasoning paths cannot easily surface how it got there. Extended thinking can. The model's chain of reasoning is available, structured, and reviewable. The trace is the product.

That distinction matters more to Australian financial services and regulated-sector teams than it does to most US product companies. A credit decision recommendation without a reasoning trace is harder to defend to an ASIC audit than one that shows its working. It is rarely surfaced in model comparisons written for American audiences, but it is material in an APRA-regulated environment.

What production workloads actually cost in AUD

At 50,000 high-stakes calls per month, the annual bills differ materially across model tier and reasoning mode.

Standard Claude Opus 4.7. $35,000–$65,000 per year.

Claude Opus 4.7 with extended thinking on relevant calls. $50,000–$90,000 per year.

GPT-5.5 (standard). $40,000–$70,000 per year.

GPT-5.5 Pro on relevant calls. $80,000–$150,000 per year.

GPT-5.5 Pro is the most expensive option by a material margin. On a $100,000 annual AI model budget, routing all reasoning calls through it leaves little room for hosting, tooling, integration infrastructure, and the workloads that do not need Pro-tier reasoning.

Extended thinking has a more predictable cost profile. GPT-5.5 Pro's parallel paths mean per-call cost can vary depending on how many reasoning paths the model samples. For production budgeting across a mixed workload, that variability has real planning implications. For teams operating on tight model budgets, extended thinking is the more forecastable choice.

When neither Pro tier is the right default

Most everyday production reasoning does not need parallel compute or an extended thinking pass. Document classification, structured extraction, routing logic, short-form summarisation. Standard Claude Opus 4.7 and standard GPT-5.5 handle these at a fraction of the cost. The 3x to 5x premium for Pro variants is not justified by the fact that a workload is business-critical.

The honest question is whether your specific workload has structural properties that either parallel exploration or transparent working actually improves. If the answer is that it sounds complex or high-stakes, that is not a sufficient business case. A convincing demo on curated test cases is not a production benchmark. Run an A/B evaluation on a representative sample of your actual production calls before committing to a tier. The quality gap on most tasks is smaller than the pricing gap.

A team spending $120,000 per year on GPT-5.5 Pro across all reasoning calls, when 60% of those calls would return equivalent quality from the standard model, is effectively spending $72,000 per year on nothing. That is not a hypothetical. It is the outcome of benchmarking Pro versus standard on workloads where reasoning diversity does not add measurable lift.

A practical decision framework for production architects

Before committing to a model tier, categorise your reasoning workloads by task structure, not by how high-stakes they feel.

Solution-search workloads. Open-ended code generation, optimisation with multiple valid approaches, mathematical proof verification. Pilot GPT-5.5 Pro on a representative sample. Measure quality against the standard tier on your own production data, not on published benchmarks.

Transparent-reasoning workloads. Compliance assessment, APRA-regulated decisions, audit-required outputs. Use Claude extended thinking. The inspectable trace is the feature, not a bonus.

Routine reasoning workloads. Classification, extraction, routing, summarisation, most document processing. Default to standard Opus 4.7 or standard GPT-5.5. Treat Pro and extended thinking as opt-in upgrades that need a business case, not defaults.

The teams that will control their AI costs over the next two years are the ones that resist the instinct to default to the most capable model on every call. Match the architecture to the task type. Default to standard. Upgrade with evidence.