The demo ran in 30 seconds. The production document review ran for nine hours. Both worked. Then the agent hit hour six on a new batch and started producing subtly wrong summaries, the kind that pass a quick scan but fail a compliance review.

The harness had no checkpointing, no context compaction policy, and no graceful degradation path. By the time the Sydney engineering team caught it, two full batches had been processed and the output had already reached three downstream systems.

What changes at hour three

A short-lived agent fails loudly. It times out, returns an error, or produces something obviously wrong. A long-running agent fails silently. It drifts across hours of work, accumulating context noise and compounding small errors into systematic quality degradation. There is no single obvious trigger. The error rate doesn't spike. The output looks plausible until someone audits it. By then, the downstream damage is already done.

The harness is what prevents these failure modes. Not the model. Not the prompt. Not the tool definitions. The harness.

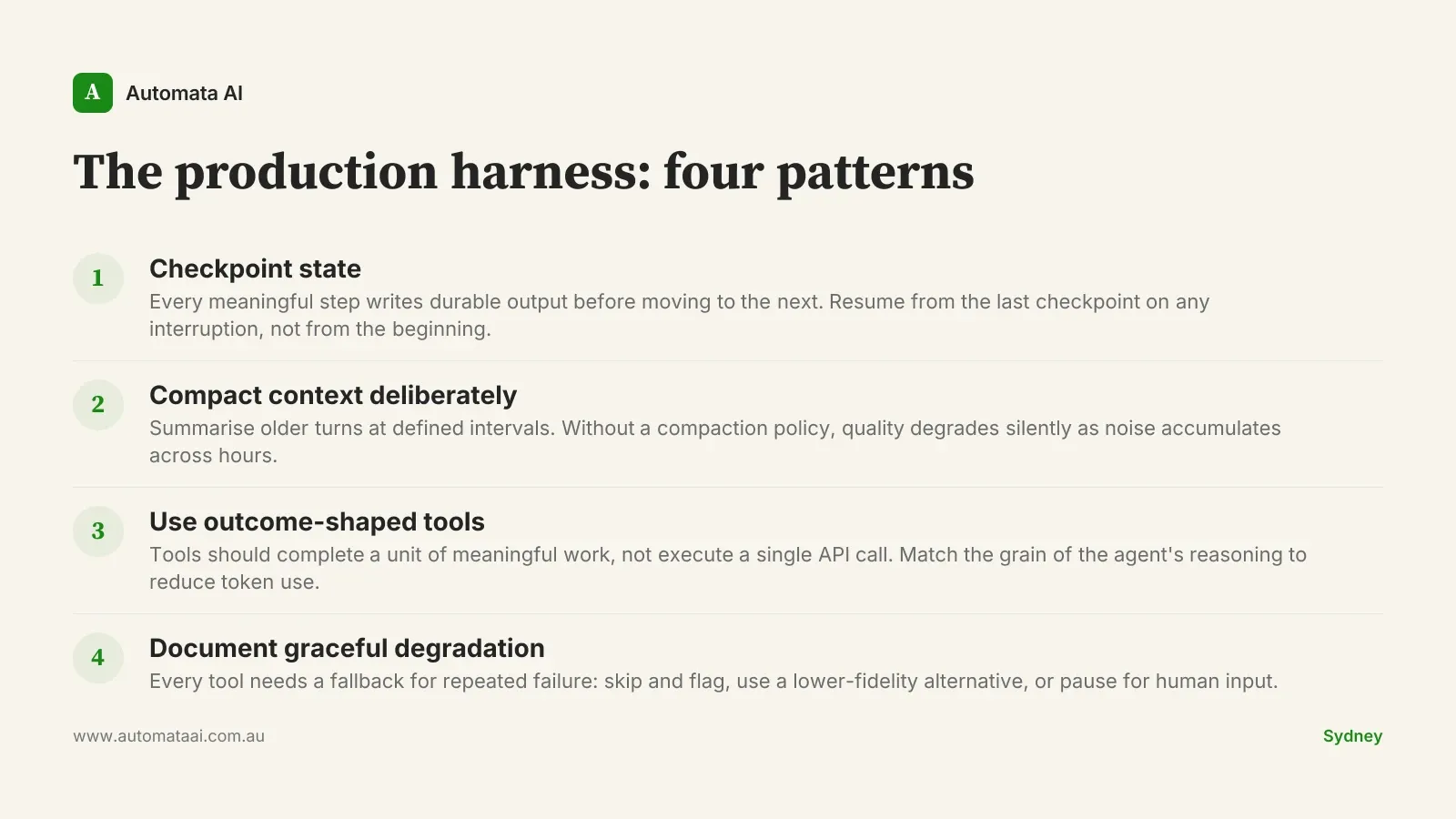

The four harness patterns

Four design patterns appear consistently in well-built long-running Claude agents. Together they form what we call the production harness. Each one targets a specific failure mode that only materialises at scale and duration.

Checkpoint state at every meaningful boundary. Every step that produces durable output writes to disk or a database before moving forward. If the agent processes 80 documents in a run, document 40 writes its result before document 41 starts. On any interruption (model error, network blip, explicit pause), the agent resumes from the last checkpoint, not from document 1.
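The checkpoint-and-resume behaviour can be sketched in a few lines. This is a minimal illustration, assuming results fit in a single JSON file; `run_batch`, `load_checkpoint`, `save_checkpoint`, and the `process` callback are hypothetical names, not part of any Anthropic SDK. A production harness would more likely write to a database.

```python
import json
import os
import tempfile
from pathlib import Path
from typing import Callable, Dict, Iterable


def load_checkpoint(path: Path) -> Dict[str, str]:
    """Return previously saved results, or an empty dict on the first run."""
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    return {}


def save_checkpoint(path: Path, results: Dict[str, str]) -> None:
    """Write to a temp file, then rename, so an interruption never leaves a half-written file."""
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as tmp:
        json.dump(results, tmp)
        tmp.flush()
        os.fsync(tmp.fileno())
    os.replace(tmp_name, path)  # atomic when both paths are on the same filesystem


def run_batch(
    doc_ids: Iterable[str],
    process: Callable[[str], str],  # hypothetical: one agent step per document
    path: Path,
) -> Dict[str, str]:
    """Process documents in order, skipping any already recorded in the checkpoint."""
    results = load_checkpoint(path)
    for doc_id in doc_ids:
        if doc_id in results:
            continue  # finished in an earlier run
        results[doc_id] = process(doc_id)
        save_checkpoint(path, results)  # durable before the next document starts
    return results
```

If `process` raises on document 41, calling `run_batch` again with the same checkpoint path picks up at document 41 and leaves the first 40 results untouched.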

Context compaction at deliberate intervals. Every N steps or M tokens, the agent summarises the conversation history: recent turns verbatim, older turns compressed. Without a compaction policy, a 10-hour agent spends its final hours reasoning through context that is 60 percent noise from completed sub-tasks. The quality drop is gradual, which is exactly why it goes unnoticed.
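One way to express a token-triggered compaction policy is sketched below. The `Turn` type, the four-characters-per-token estimate, the default thresholds, and the `summarise` callback are all assumptions made for illustration; in practice the summary would come from a model call and the token count from a real tokenizer.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Turn:
    role: str
    text: str


def estimate_tokens(turns: List[Turn]) -> int:
    """Crude estimate of about four characters per token (an assumption, not a tokenizer)."""
    return sum(len(t.text) for t in turns) // 4


def compact(
    history: List[Turn],
    summarise: Callable[[List[Turn]], str],  # hypothetical: e.g. a model call
    max_tokens: int = 50_000,
    keep_recent: int = 10,
) -> List[Turn]:
    """Keep the most recent turns verbatim and fold older turns into one summary turn."""
    if estimate_tokens(history) <= max_tokens or len(history) <= keep_recent:
        return history  # under budget, or nothing old enough to compress
    split = len(history) - keep_recent
    older, recent = history[:split], history[split:]
    summary = Turn(role="system", text="Summary of earlier work: " + summarise(older))
    return [summary] + recent
```

Calling `compact` after every step keeps the trigger explicit and measurable: the history is only rewritten once the estimated size crosses `max_tokens`.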

Outcome-shaped tools, not action-shaped tools. "Retrieve the five most relevant clauses from this contract" is an outcome. "Call the document search API with these parameters" is an action. Long-running agents reason at the outcome level. Tools that match that grain use fewer tokens, make fewer calls, and produce cleaner reasoning chains across extended runs.
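The difference shows up directly in the tool definitions. The sketch below lays out both styles in the `name` / `description` / `input_schema` shape used for tool definitions in the Anthropic Messages API. The tool names, parameters, and the word-overlap ranking are hypothetical stand-ins; a real implementation would sit on top of an actual search or retrieval service.

```python
from typing import List

# Action-shaped: the model has to manage the mechanics of an API call.
ACTION_TOOL = {
    "name": "call_document_search_api",  # hypothetical tool name
    "description": "Call the document search API with these parameters.",
    "input_schema": {
        "type": "object",
        "properties": {
            "index": {"type": "string"},
            "query": {"type": "string"},
            "offset": {"type": "integer"},
            "limit": {"type": "integer"},
        },
        "required": ["index", "query"],
    },
}

# Outcome-shaped: the model asks for a unit of meaningful work.
OUTCOME_TOOL = {
    "name": "retrieve_relevant_clauses",  # hypothetical tool name
    "description": "Retrieve the most relevant clauses from a contract for a question.",
    "input_schema": {
        "type": "object",
        "properties": {
            "contract_text": {"type": "string"},
            "question": {"type": "string"},
            "top_k": {"type": "integer"},
        },
        "required": ["contract_text", "question"],
    },
}


def retrieve_relevant_clauses(contract_text: str, question: str, top_k: int = 5) -> List[str]:
    """Toy stand-in: rank one-clause-per-line text by word overlap with the question."""
    clauses = [line.strip() for line in contract_text.split("\n") if line.strip()]
    wanted = set(question.lower().split())
    ranked = sorted(
        clauses,
        key=lambda clause: len(wanted & set(clause.lower().split())),
        reverse=True,
    )
    return ranked[:top_k]
```

With the outcome-shaped version, paging, indexing, and ranking stay inside the tool, so one call returns what would otherwise take several action-shaped calls and the reasoning between them.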

Explicit graceful degradation paths. When a tool fails four times consecutively, what does the agent do? If the answer is retry indefinitely, you have a loop waiting to happen. Every tool the agent depends on needs a documented fallback: skip and flag, use a lower-fidelity alternative, or pause and request human input.
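A bounded retry with a documented fallback might look like the following sketch. The `Outcome` values and the `call_with_fallback` helper are hypothetical names; the limit of four consecutive failures mirrors the example above and is not a recommended constant.

```python
from enum import Enum
from typing import Any, Callable, Optional, Tuple


class Outcome(Enum):
    OK = "ok"
    FALLBACK = "fallback"            # lower-fidelity alternative was used
    SKIPPED = "skipped_and_flagged"  # nothing worked; surface for human review


def call_with_fallback(
    primary: Callable[[], Any],
    fallback: Optional[Callable[[], Any]] = None,
    max_failures: int = 4,
) -> Tuple[Outcome, Any]:
    """Try the primary tool a bounded number of times, then degrade instead of looping."""
    last_error: Optional[Exception] = None
    for _ in range(max_failures):
        try:
            return Outcome.OK, primary()
        except Exception as exc:  # broad on purpose: any tool failure counts
            last_error = exc
    if fallback is not None:
        try:
            return Outcome.FALLBACK, fallback()
        except Exception as exc:
            last_error = exc
    # Skip and flag: the caller records the error and moves on or pauses for a human.
    return Outcome.SKIPPED, repr(last_error)
```

Returning the outcome alongside the value lets the harness log every degraded result, which is what makes the fallback path auditable later.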

Harness design is one of the central deliverables in our AI Automation Services engagements. A well-designed agent with a poorly designed harness fails in production more reliably than an imperfect agent with a well-designed harness.

Cost economics for Australian production teams

A typical enterprise long-running agent covers research synthesis, document review, or multi-stage compliance checks. It costs between $200 and $1,500 per agent-day in Anthropic compute, depending on context window utilisation and tool-call frequency. A fleet of five to twenty active agents, which is the operating norm for mid-market teams serious about automation, translates to $25,000 to $400,000 in annual compute spend, depending on how many days a year each agent actually runs.

For organisations operating under APRA CPS 230, where audit trail and operational resilience requirements push toward more verification steps, the figure trends toward the upper end. Based on our Sydney client engagements, well-designed harnesses typically reduce compute spend by 30 to 50 percent without affecting output quality. Run your own figures in the ROI Calculator to see where the cost lands against your agent's expected runtime and call volume.
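For a quick back-of-envelope check, the arithmetic behind these figures can be written as one function. The function name and the `active_days_per_year` input are assumptions: the figures above do not say how many days a year each agent runs, and that input moves the result more than any other.

```python
def annual_compute_spend(
    cost_per_agent_day: float,
    agents: int,
    active_days_per_year: int,    # assumption: not stated in the figures above
    harness_saving: float = 0.0,  # fraction of spend removed, e.g. 0.3 for 30 percent
) -> float:
    """Annual spend = cost per agent-day x fleet size x active days, less any saving."""
    if not 0.0 <= harness_saving < 1.0:
        raise ValueError("harness_saving must be in the range [0, 1)")
    gross = cost_per_agent_day * agents * active_days_per_year
    return gross * (1.0 - harness_saving)


# Five agents at $200 per agent-day, each active 25 days a year.
baseline = annual_compute_spend(200, 5, 25)
# The same fleet with a 30 percent harness saving applied.
with_harness = annual_compute_spend(200, 5, 25, harness_saving=0.3)
```

At 25 active days, five agents at $200 per agent-day come to $25,000 a year. Pin down your own active days before comparing against any quoted range.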

When harness engineering is the wrong investment

Not every long-running agent needs this level of engineering. There is a real cost to building it. There is a bigger cost to building it prematurely. A harness built for a workload that doesn't justify it adds operational overhead without adding resilience. Three situations where the investment is not yet warranted:

Runtime under 30 minutes. Durable checkpointing adds infrastructure complexity without returning meaningful resilience. If the agent can restart in under a minute, retry on failure instead. Save the harness budget for agents that genuinely run long.
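For short runs, a plain restart-on-failure wrapper is usually enough. The sketch below is illustrative only; `run_with_retry`, the attempt count, and the linear backoff are hypothetical choices.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def run_with_retry(
    run_agent: Callable[[], T],  # hypothetical: one full, short agent run
    attempts: int = 3,
    backoff_seconds: float = 1.0,
) -> T:
    """Restart the whole run on failure; re-raise once the attempts are used up."""
    for attempt in range(1, attempts + 1):
        try:
            return run_agent()
        except Exception:
            if attempt == attempts:
                raise  # out of attempts: fail loudly
            time.sleep(backoff_seconds * attempt)  # simple linear backoff
    raise ValueError("attempts must be at least 1")
```

There is no persisted state here, which is the point: when a full rerun is cheap, the wrapper replaces the checkpoint store.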

Low process frequency. An agent that runs twice a week on a small dataset doesn't need a context compaction policy. Solve the prompt and tool design first, then instrument once call volume justifies the operational overhead.

Pre-production or experimental workloads. Harness engineering belongs in the production path, not the validation phase. Don't build infrastructure around an agent that hasn't proven its business case in production conditions yet.

The harness readiness audit

Before deploying a long-running Claude agent to production, work through these four questions. They form the core of the harness readiness audit. The full version is part of the AI Readiness Assessment, which covers harness architecture as one of five production readiness dimensions.

Can the agent resume from interruption? That means resuming from the last checkpoint, not restarting from scratch. If the answer is no, the harness is incomplete.

When does it compact context? Every N steps, every M tokens, or never? "Never" means quality degrades silently on long runs. Pick a trigger, document it, measure against it.

Are your tools outcome-shaped? Step through each tool definition. If it describes an API call, it is action-shaped. If it describes a unit of meaningful work, it is outcome-shaped. Rewrite the action-shaped ones before they compound across hours of tool use.

What happens on four consecutive tool failures? If the agent retries indefinitely, you have a loop waiting to happen. Define a fallback for every tool and test the fallback paths before the first production run.

Silent degradation costs more than crashes. Crashes alert someone. An agent that produces 80-percent-right output across a nine-hour run, with no mechanism to surface the drift, will eventually cost more than the implementation ever saved. The businesses that build the harness before the first production incident are not being cautious. They are being practical. The harness is not the interesting part of the build. It is the part that determines whether the build survives contact with production.