GPT-5.5 landed in Australian enterprise inboxes on 23 April 2026. By the following morning, at least three Sydney-based strategy leads had forwarded it to their IT teams with the same question attached: should we switch? The email was not noise. GPT-5.5 is a real upgrade, with measurable improvements on tool use, Microsoft integration, and task handling at scale.

For teams currently running Claude Opus 4.7 in production, that question deserves a direct answer. Not a balanced vendor brief. Not a feature matrix that flatters both sides. The answer depends on which of three workload patterns describes your organisation, and most Australian enterprises will land clearly in one.

The mistake most teams will make is treating this as an enterprise-wide binary. That framing is how you end up spending $300,000 on a migration that improves one use case and breaks two others.

Where GPT-5.5 genuinely pulls ahead

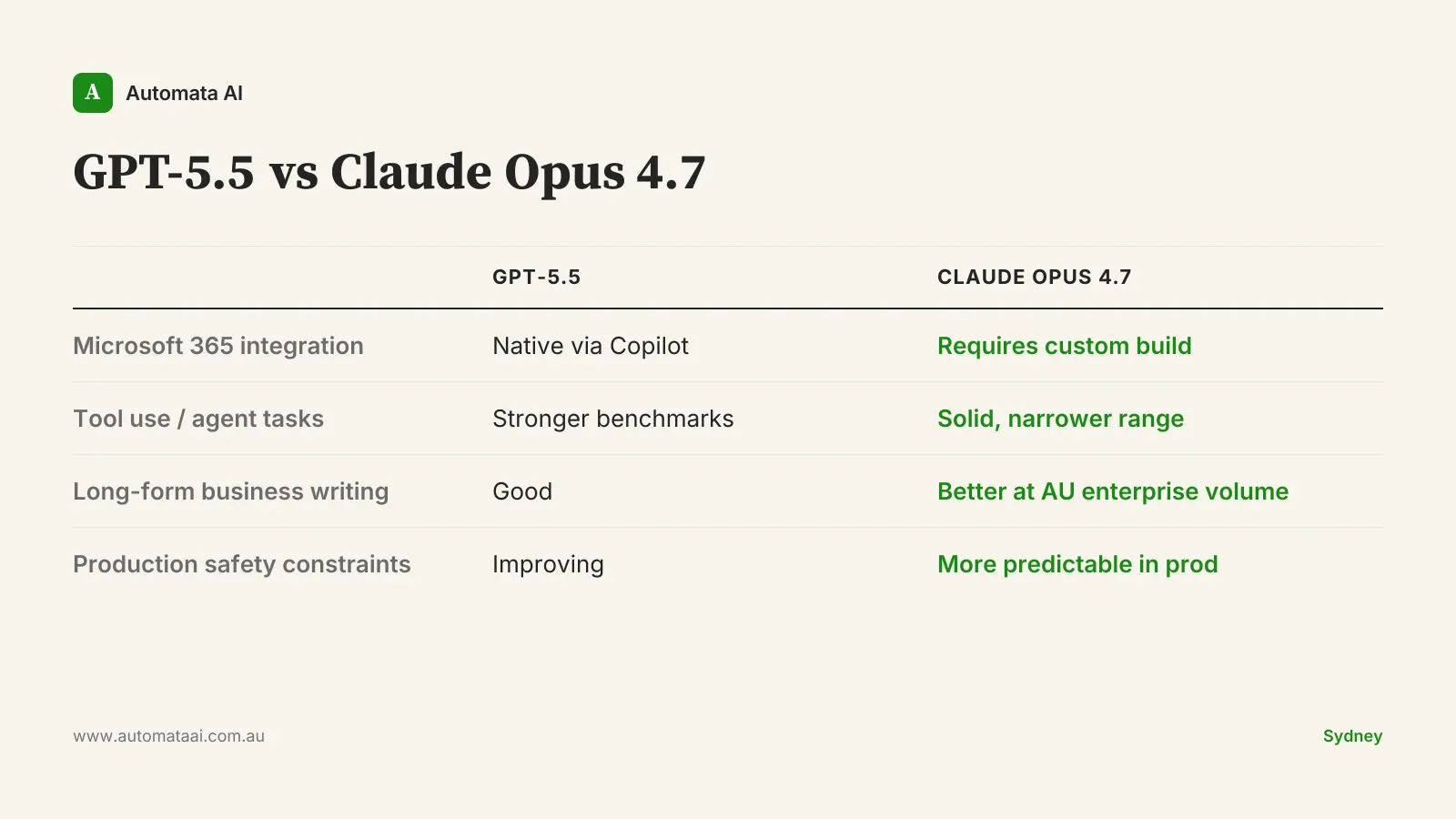

Microsoft 365 native integration. GPT-5.5 arrives inside Microsoft Copilot as a direct model upgrade. For Australian enterprises already running M365 at scale, the capability lands natively across Teams, SharePoint, Outlook, and Word. No re-architecture. No new vendor procurement. If your team's primary AI surface is the Microsoft productivity suite, GPT-5.5 is the right default.

Tool use and agentic task handling. OpenAI's benchmarks show meaningful gains on multi-step tool use. For organisations running research pipelines, web-browsing tasks, or multi-tool orchestration, GPT-5.5 starts work productively with less up-front prompt engineering. For internal tool-use workflows, this is a real gain.

Lower friction for unstructured queries. GPT-5.5 needs less guidance on ambiguous tasks. For consumer-style, one-off knowledge work, this matters more than it does inside a structured production workflow.

Where Claude Opus 4.7 still earns its place

Long-form business writing and structured analysis. Claude's output on substantial business documents is better structured and tighter at volume. Board papers, compliance summaries, investment memos: this is where the quality gap is visible in side-by-side production testing, not just benchmark scores.

Claude Code and developer workflows. The Claude Code product surface is more mature than the GPT-5.5 equivalent, with tighter IDE integrations and more reliable agentic task handling for teams running custom builds. Australian development teams working on production Claude applications should not treat this as a side note. The rebuild cost is real.

Constrained production behaviour under compliance requirements. Claude's adherence to system prompt constraints and brand-voice guidelines is more predictable at scale. For Australian enterprises operating under APRA CPS 230, the Privacy Act (1988), or AUSTRAC-adjacent compliance requirements, that predictability is a production requirement. A model that occasionally deviates from a defined constraint is not a production model for a regulated entity.

That last point is underweighted in most model comparisons. When a production AI system outputs something that breaches a compliance constraint, the cost is not the output itself. The cost is the incident response, the regulatory notification process, and the trust repair with the business unit that was relying on it. Choose the model with the more predictable boundary behaviour, not the one with the highest aggregate benchmark score.

The question most teams are asking wrong

Most Australian mid-market businesses, 50 to 2,000 employees and $10M to $500M revenue, don't have a model problem. They have a production problem. Roughly 85% of AI pilots never reach production. The organisations forwarding the GPT-5.5 announcement internally are often still running a pilot that hasn't made it to a real workflow. The model ceiling is not where they're hitting the wall.

If your AI programme hasn't reached the point where model capability is the actual bottleneck, switching models won't fix that. It moves the work. The instinct to upgrade the model is often a displacement activity. It feels like progress. It is easier than connecting AI output to a real production system, a real data source, a real decision.

This is the part no vendor comparison will tell you.

How to decide workload by workload

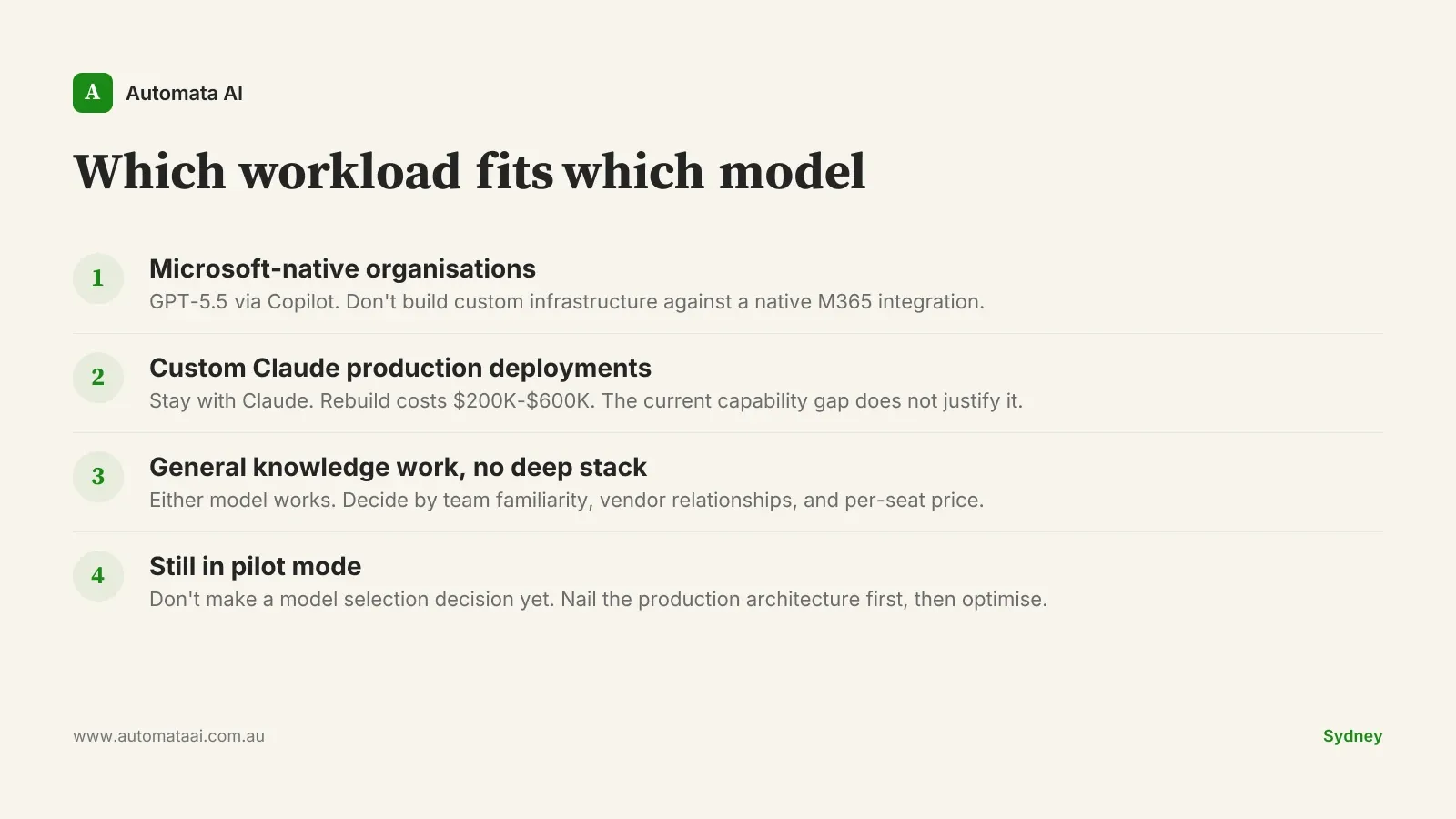

Microsoft-native organisations with a Copilot rollout. If your primary knowledge-work surface is M365 and you're running a broad Copilot deployment, GPT-5.5 is the natural default. The integration story is already there. Don't build custom infrastructure to work against a native fit.

Organisations with custom Claude production deployments. If you're running production agents built on Claude, including MCP toolchains, custom Skills, or system prompts wired to internal business systems, stay with Claude. Rebuilding a meaningful production deployment costs $200,000 to $600,000 in engineer time and integration work. The current capability gap does not justify that rebuild.

General knowledge work with no deep stack commitment. Either model works for the majority of general knowledge-work use cases. Pick based on team familiarity, existing vendor relationships, and per-seat pricing at your volume. At typical Australian mid-market enterprise commitments, price is rarely the swing factor. Fit is.

Organisations still in pilot mode. Don't make a model selection decision while still piloting. The question only becomes meaningful when you have a production workflow to benchmark against. Nail the deployment architecture first, then optimise the model.

The cost reality for Australian buyers

GPT-5.5 enterprise pricing tracks closely to Claude Opus 4.7 at mid-market volumes. Both OpenAI and Anthropic are pricing aggressively for Australian enterprise deals in 2026. The CFO case for switching does not hold: at typical Australian enterprise commitments of $60,000 to $180,000 per year, the pricing delta between models is negligible.

What does hold: integration effort, retraining time, prompt re-engineering, and time-to-value risk on migrating production workloads. An organisation migrating a three-workflow production deployment should budget 8 to 16 weeks of engineering time at $120 to $180 per hour, fully loaded. On a conservative estimate, that is $80,000 to $200,000 in migration cost, against a model that may not outperform the incumbent on your specific workloads.

Both OpenAI and Anthropic are shipping material model upgrades every three to four months. Enterprise-wide vendor commitment is less useful than workload-level model selection with a clear reassessment cadence. Pick the right model for each workload. Review the decision every two model cycles.

Pick three of your highest-stakes workloads. Run them head-to-head for four weeks using real production data and real quality criteria, not vendor benchmark scores. Measure what matters: output quality against your use case, error rate in production, integration friction. That evaluation costs close to nothing. The migration is a different number entirely.