Your Claude app has been running for six hours. The bill is climbing. You check the logs and find the app looping through a state it should have exited two hours ago, generating thousands of tokens on work that will never reach the final output.

That's a harness problem. Not a model problem.

Why the harness is the actual application

In a long-running Claude app, the model does the work. But the harness is what shapes that work: the state management, the output validation, the logging, the budget enforcement. Every production failure I've seen in Sydney mid-market Claude apps traces back to a harness decision, not a model capability. The model did exactly what it was given. The harness gave it the wrong thing to do.

The harness is where your application's definition of 'done' lives. If that definition is vague, the model will improvise. Claude is very good at improvising. That's the problem.

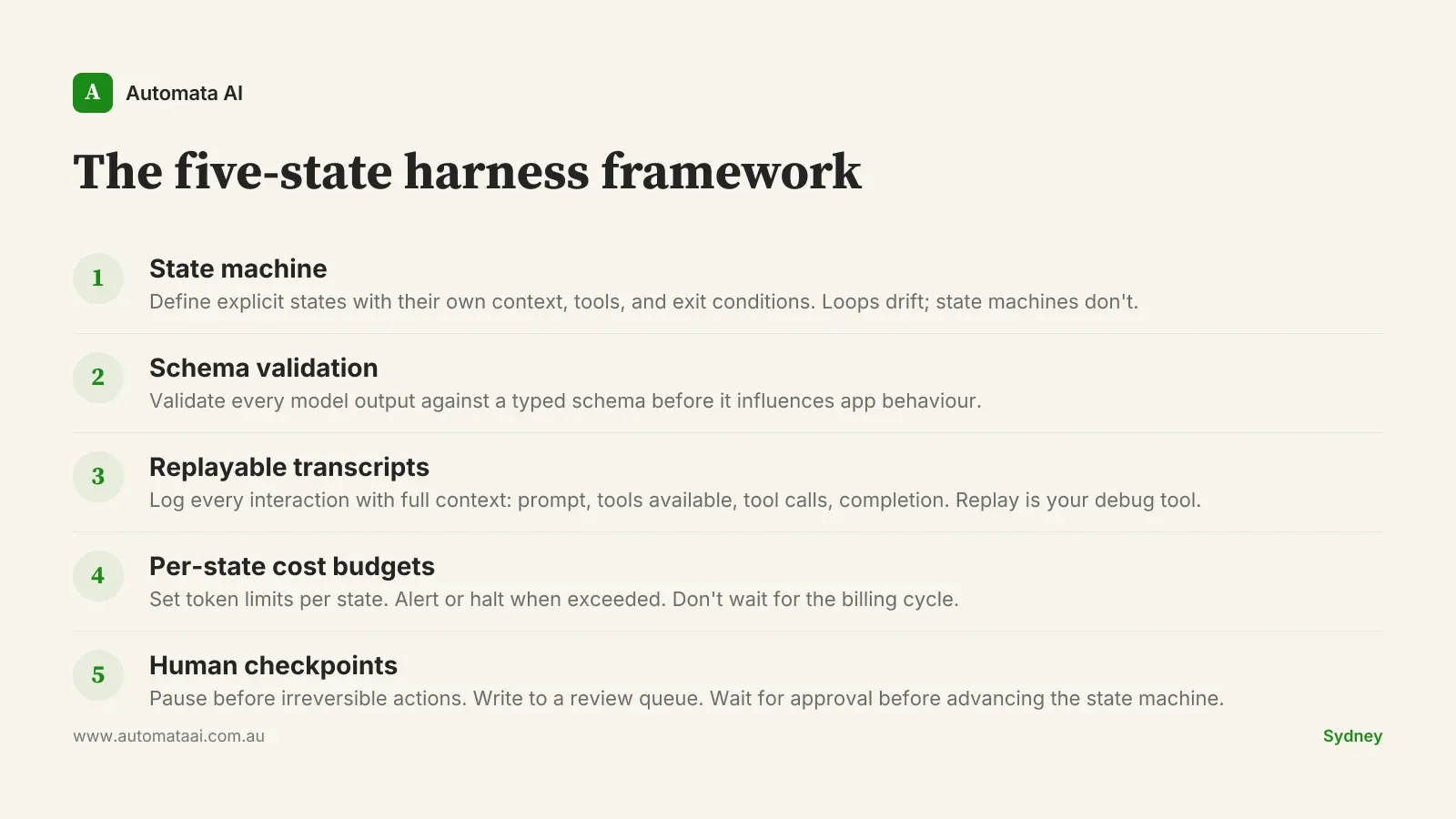

The five-state harness framework

These five design choices compound. Each one is individually useful. Together they're the difference between an app you can leave running overnight and one that requires babysitting.

1. State machine over free-form loops

Define the explicit states your app moves through. Extraction, validation, enrichment, output, done. Give each state its own context window, tool access, and exit conditions. A document processing pipeline shouldn't have the extraction state accessing the same tools as the output state. Free-form loops drift because there's no authoritative answer to 'are we done yet'. State machines stay coherent because the answer is always one of two things: advance to the next state, or stay here with a reason.

2. Strong typing on agent outputs

Use schemas to validate every model output before it influences application behaviour. Zod in TypeScript, Pydantic in Python, JSON Schema for language-agnostic pipelines. The failure mode without schema validation is silent: the model returns something structurally plausible but semantically wrong, the app proceeds on bad data, and you discover the error three steps later when something downstream breaks in a way that doesn't point back to the origin.

3. Replayable transcripts

Store every model interaction with enough context to replay it in isolation: the full prompt, the tools available, the tool calls made, and the completion. When something goes wrong at production scale, replay is the difference between a ten-minute diagnosis and a four-hour debug. Australian teams should structure transcript logging to separate operational replay data from personal information the app processes. The Privacy Act 1988 applies to that data whether it's in a log file or a database.

4. Per-state cost budgets

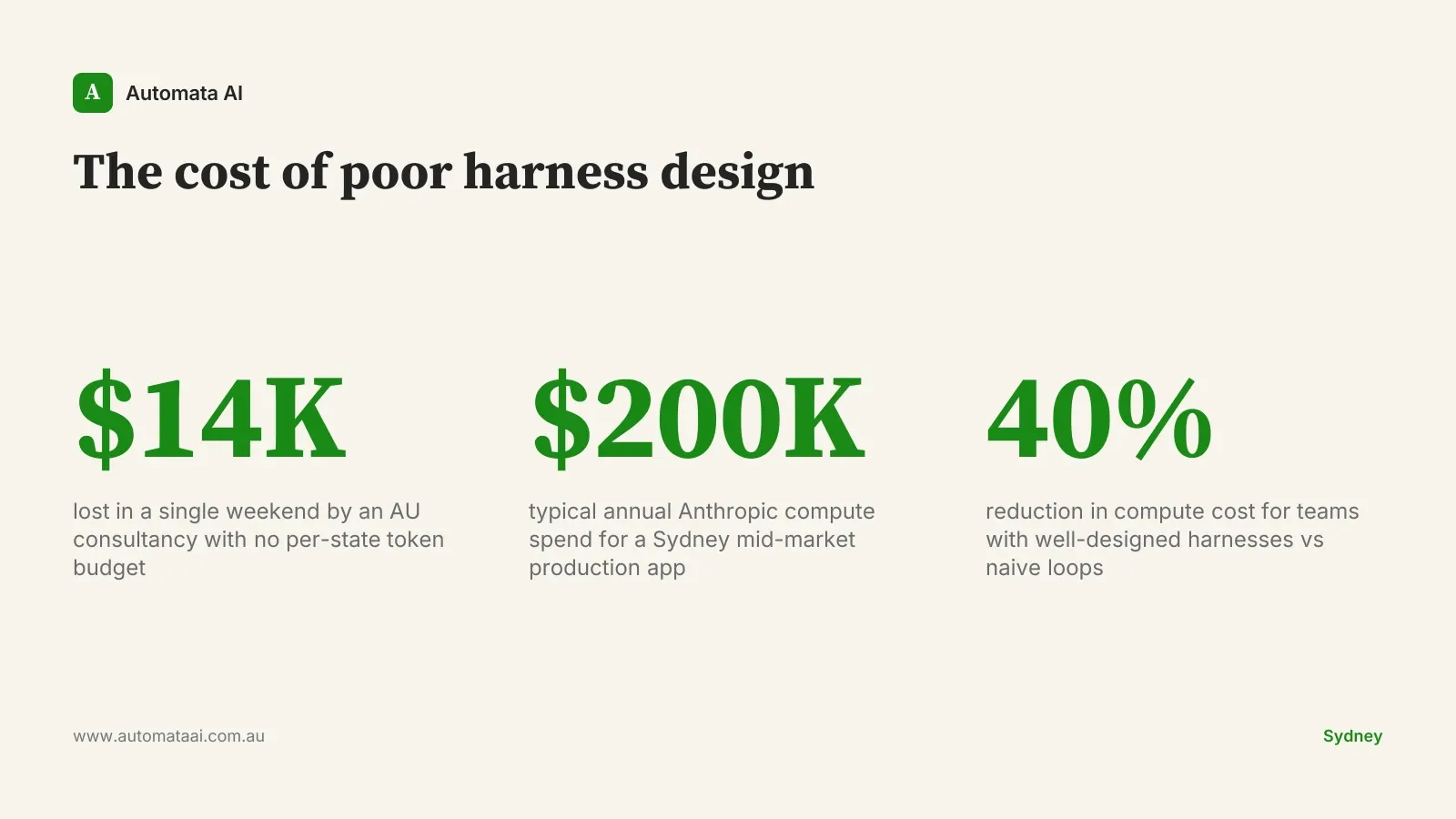

Set a maximum token budget for each state and alert or halt when the budget is exceeded. Without this, a single misbehaving agent can run unsupervised until the billing cycle closes. An Australian consultancy lost $14,000 in a single weekend last year to a runaway extraction loop nobody noticed until Monday morning. Per-state budgets wired to a Slack alert would have caught it within the hour. You can model the token and cost exposure for your own app in our ROI Calculator.

5. Human-in-the-loop checkpoints

For states that take irreversible actions (sending communications, updating records, triggering payments), require a human approval step before the agent advances. Not every action needs this. High-stakes, low-frequency, hard-to-reverse actions do. The engineering is straightforward: pause the state machine, write a message to a review queue, and wait for an approval signal before proceeding.

These five patterns can be implemented incrementally. You don't need all five before going to production. The sequence that works: state machine first, schema validation second, transcript logging third, per-state budgets fourth, human checkpoints fifth. Each one builds on the previous.

What this costs when you get it wrong

A typical Sydney mid-market production Claude app spends between $40,000 and $200,000 per year on Anthropic compute. Harness design choices are a material driver of that number. Most teams don't see it labelled that way. They see it as unexpectedly high monthly bills, inconsistent output quality, and debugging hours that trace back to architectural decisions made in the first week of development. The connection between architectural choices and bill size is often invisible until it isn't.

Teams with well-designed harnesses consistently spend 20 to 40 percent less than peers shipping the same functionality with naive loops. Not because they're running fewer tokens. Because they're not running the same tokens twice, and they're not paying for completion work that gets discarded when a state exits incorrectly.

When not to design for all five

This design adds complexity. That complexity earns its place when your app runs longer than a single user session, takes actions in external systems, or processes enough volume that a silent failure compounds before anyone notices. If none of those apply, simpler is better.

Not every Claude app needs a state machine. A one-shot summarisation pipeline that runs for thirty seconds, returns a result, and terminates doesn't need budget enforcement or replay infrastructure. Over-engineering for reliability you don't need is its own kind of failure.

The app runs unsupervised. Errors compound without human monitoring in real time.

Actions are irreversible. Emails sent, records updated, payments triggered. Rollback is expensive or impossible.

Output variance is expensive. When a wrong answer costs more than a late answer.

Volume is high enough to surface edge cases. At scale, edge cases become regular cases.

Where to start this fortnight

If you have a long-running Claude app in production, map the state machine first. Even if it's currently a free-form loop, writing out the states on a whiteboard will reveal exit conditions you haven't defined yet. Our AI Readiness Assessment maps your highest-stakes app and identifies which of the five harness patterns apply.

Map your state machine explicitly. Draw every state, every transition, and every exit condition. If it takes more than one whiteboard, the state machine is telling you something.

Add schema validation to every output that influences app behaviour. Every output, not just the ones you think are risky. The risky ones are usually the ones you haven't thought of yet.

Enable replayable transcript logging before anything goes wrong. Separate PII from operational replay data from the start. Retrofitting this after an incident is a bad time to learn about Privacy Act obligations.

Set per-state token budgets and connect them to monitoring. Before the next billing cycle, not after the next surprise invoice.

The teams getting long-running Claude apps right aren't necessarily using better models. They're using better harnesses. Automata AI's AI Automation Services include harness design as standard: state-machine patterns, schema validation, transcript logging, and budget enforcement. If your Claude app drifts, spirals, or produces surprise bills, that's where to start.