You pulled the CDR data. Six months of compliance work to get accredited, a new data pipeline to maintain, and ongoing consent management. Now you have 90 days of transaction history on every applicant, and your credit officers are still reading it like a bank statement.

That is the CDR gap most non-bank lenders are not talking about. The data is there. The pipeline works. The categorisation still runs on rules written before the gig economy existed, before buy-now-pay-later became a meaningful liability signal, and before casual income became the default employment structure for a material share of borrowers under 40.

What rule-based engines still miss

Rule-based transaction categorisation works well when income is clean: a salary from a named employer, appearing fortnightly in consistent amounts. That describes fewer Australian borrowers every year. The share of applicants with non-standard income patterns, whether from multiple casual employers, platforms, or a combination, has grown substantially over the past three years. The rules that worked in 2019 are mislabelling creditworthy borrowers as unstable in 2026.

Take a casual nurse doing four shifts a week at a private hospital group, driving rideshare three evenings, and picking up irregular hospitality work on weekends. That person has income. Legacy rules flag the pattern as unstable. A Claude agent reads the 90-day CDR history and returns a structured summary: primary income of $4,800 per month; irregular secondary income of $1,400 per month; no payday lender activity; two small gambling deposits totalling $80; no buy-now-pay-later stacking.

That summary takes around 90 seconds to generate per applicant.

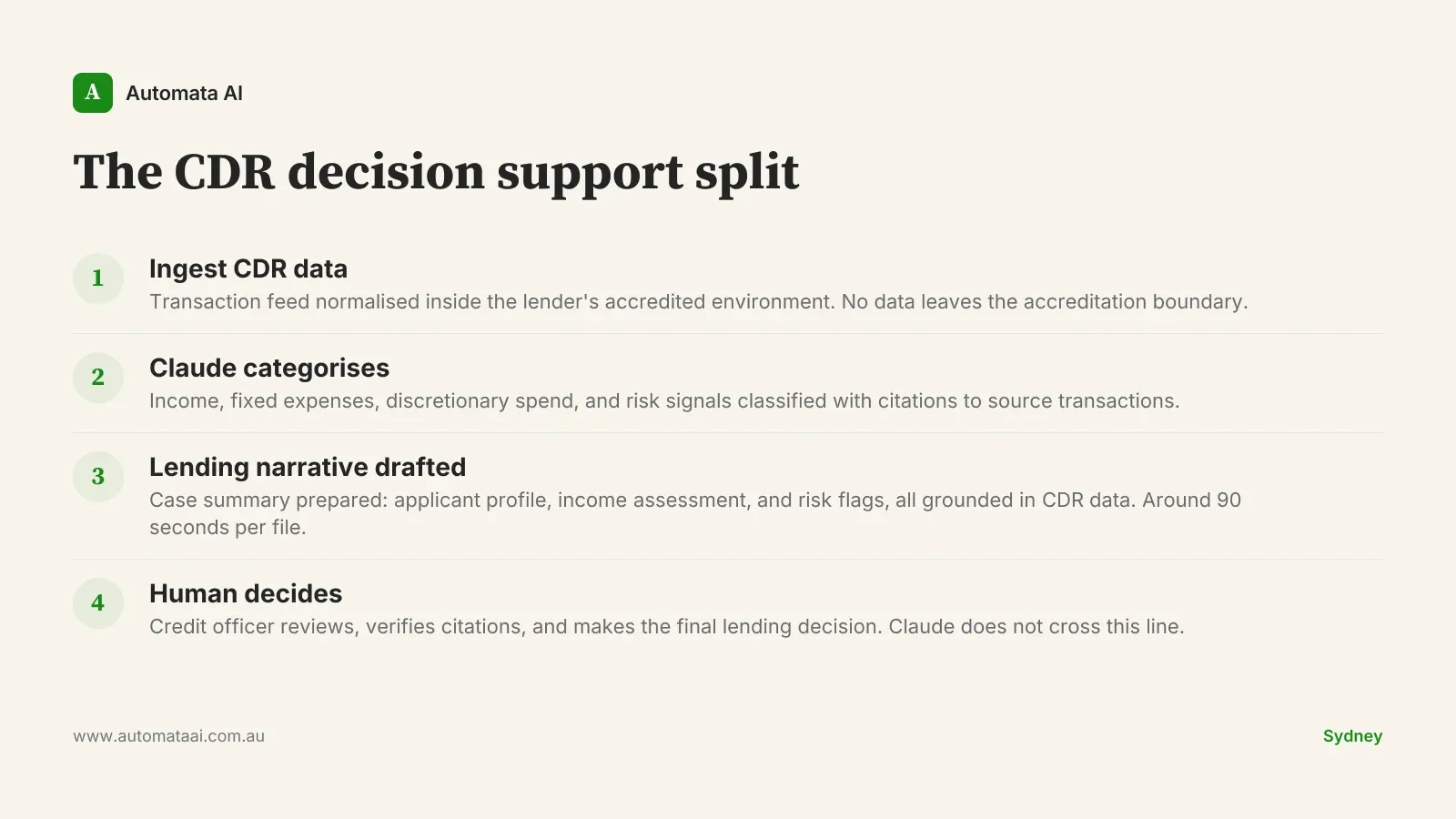

What Claude does in a CDR workflow

Transaction categorisation. Each transaction classified into income, fixed expense, discretionary spend, or risk signal. Unlike rule-based systems, Claude returns explanations the credit officer can read, query, and override.

Employment pattern identification. Payroll deposits read to determine employment type, frequency, and income stability across casual, gig, and multi-employer income streams.

Risk signal flagging. Gambling spend, payday lending activity, and buy-now-pay-later stacking identified, each with citations back to the specific transactions in the CDR feed.

Lending narrative drafting. A case summary written for the credit officer: applicant profile, income assessment, and risk flags, all grounded in CDR data.
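As a rough illustration of what "explanations the credit officer can read, query, and override" could look like in data terms, here is a minimal sketch. The four categories come from the list above; the field names, the JSON shape, and the `parse_model_output` and `override` helpers are hypothetical, not a description of any particular production schema.

```python
import json
from dataclasses import dataclass, replace
from typing import List, Optional

# The four categories named in the text. Identifiers are illustrative.
CATEGORIES = {"income", "fixed_expense", "discretionary_spend", "risk_signal"}


@dataclass(frozen=True)
class CategorisedTransaction:
    transaction_id: str                   # ID of the transaction in the CDR feed
    category: str                         # one of CATEGORIES
    explanation: str                      # plain-language reason for the officer
    overridden_by: Optional[str] = None   # officer ID once a human overrides


def parse_model_output(raw_json: str) -> List[CategorisedTransaction]:
    """Parse a JSON reply, rejecting unknown categories and empty explanations."""
    records = []
    for item in json.loads(raw_json):
        if item["category"] not in CATEGORIES:
            raise ValueError(f"unknown category: {item['category']!r}")
        if not item["explanation"].strip():
            raise ValueError(f"missing explanation for {item['transaction_id']}")
        records.append(
            CategorisedTransaction(
                transaction_id=item["transaction_id"],
                category=item["category"],
                explanation=item["explanation"],
            )
        )
    return records


def override(record: CategorisedTransaction, new_category: str,
             officer_id: str) -> CategorisedTransaction:
    """Return a copy with the officer's category, keeping the original intact."""
    if new_category not in CATEGORIES:
        raise ValueError(f"unknown category: {new_category!r}")
    return replace(record, category=new_category, overridden_by=officer_id)
```

Keeping the original record immutable and returning a new one on override means the model's first answer and the officer's correction can both be stored, which is the raw material for prompt calibration later.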

The numbers from a 16-week pilot

A non-bank Australian lender processing around 45,000 home loan applications per year ran a Claude-backed CDR workflow for 16 weeks before moving to full production. The outcome: 7,800 credit officer hours recovered in the pilot period alone. At fully loaded Australian analyst rates of $120-$160 per hour, that is roughly $0.94 million to $1.25 million in recovered capacity. Daily application throughput grew by 35 percent without adding headcount. The compliance audit score improved across the same period.
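The recovered-capacity figure is simply hours multiplied by the hourly rate, so a rate range produces a dollar range rather than a single number. A quick check using only the figures quoted above (the function and variable names are illustrative):

```python
HOURS_RECOVERED = 7_800                 # credit officer hours, 16-week pilot
RATE_LOW_AUD, RATE_HIGH_AUD = 120, 160  # fully loaded analyst rate per hour


def recovered_capacity(hours: int, rate_low: int, rate_high: int) -> tuple:
    """Return the (low, high) dollar value of the recovered hours."""
    return hours * rate_low, hours * rate_high


low, high = recovered_capacity(HOURS_RECOVERED, RATE_LOW_AUD, RATE_HIGH_AUD)
print(f"${low:,} to ${high:,}")  # $936,000 to $1,248,000
```

Swapping in your own hours and rate band gives a first-pass estimate before any more detailed payback modelling.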

Those numbers hold because the workflow is narrow. Claude prepares the case. The human approves it. The payback maths improves further once you account for fewer errors in the categorisation output and less back-and-forth between credit officers and applicants. If you want to model the payback for your own application volume, the ROI Calculator runs the numbers against AUD analyst rates in about three minutes.

The compliance frame you cannot skip

CDR data sits within a regulatory structure most lenders treat as friction. It is actually a guide. Under the Privacy Act 1988 (Cth) and the Consumer Data Right rules, Claude processing must happen inside the lender's accredited data environment. Prompts and model outputs get logged. Customer consent must explicitly cover automated decision support as a named, specific use case in the consent flow. General data-use consent is not enough, and neither is a clause buried in a product disclosure statement (PDS). The Australian Privacy Principles set that floor.
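One way to make those two requirements hard to bypass is to put the consent check and the logging in the same wrapper that calls the model. The sketch below is an assumption-heavy illustration: the scope name `automated_decision_support`, the `run_with_audit` helper, and the `call_model` callable are all hypothetical stand-ins, and the in-memory list stands in for whatever append-only store sits inside the accredited environment.

```python
import json
from datetime import datetime, timezone
from typing import Callable, List, Set

# Hypothetical name for the consent scope covering automated decision support.
REQUIRED_SCOPE = "automated_decision_support"


class ConsentError(Exception):
    """Raised when the applicant has not consented to this specific use."""


def run_with_audit(applicant_id: str, consent_scopes: Set[str], prompt: str,
                   call_model: Callable[[str], str],
                   audit_log: List[str]) -> str:
    """Refuse to run without the named consent; log prompt and output otherwise."""
    if REQUIRED_SCOPE not in consent_scopes:
        raise ConsentError(f"{applicant_id}: consent lacks {REQUIRED_SCOPE!r}")
    output = call_model(prompt)  # stand-in for the real model call
    audit_log.append(json.dumps({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "applicant_id": applicant_id,
        "prompt": prompt,
        "output": output,
    }))
    return output
```

Because the model call only happens after the consent check passes, an applicant who agreed to general data use alone never reaches the model at all.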

The OAIC's Explanatory Guidelines are clear: the credit decision stays with a qualified human. That is not just compliance posture. It is what makes a declined application defensible when the customer asks why.

That is also what makes the prompt design non-trivial. Prompts that return categorisations without transaction-level citations are not compliant with this framework, regardless of their accuracy. If a credit officer cannot trace a CDR risk flag back to its source transaction, the flag has no evidentiary weight in a lending decision. Building the citation layer into the workflow from day one is not optional.
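A citation layer can be checked mechanically before a flag ever reaches a credit officer. The sketch below assumes each risk flag carries a list of transaction IDs and that the CDR feed exposes stable IDs; the `RiskFlag` shape and the `find_unsupported_flags` name are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Iterable, List


@dataclass
class RiskFlag:
    kind: str  # e.g. "gambling", "payday_lending", "bnpl_stacking"
    cited_transaction_ids: List[str] = field(default_factory=list)


def find_unsupported_flags(flags: Iterable[RiskFlag],
                           feed_transaction_ids: Iterable[str]) -> List[RiskFlag]:
    """Return flags that cite nothing, or cite IDs absent from the CDR feed."""
    known = set(feed_transaction_ids)
    return [
        flag for flag in flags
        if not flag.cited_transaction_ids
        or not set(flag.cited_transaction_ids) <= known
    ]


feed = ["t1", "t2", "t3"]
flags = [
    RiskFlag("gambling", ["t1", "t2"]),  # every citation resolves
    RiskFlag("payday_lending", []),      # no citation at all
    RiskFlag("bnpl_stacking", ["t9"]),   # cites an ID not in the feed
]
unsupported = find_unsupported_flags(flags, feed)
print([flag.kind for flag in unsupported])  # ['payday_lending', 'bnpl_stacking']
```

Anything this check returns would be held back or regenerated rather than shown as evidence, since a flag the officer cannot trace has no weight in the decision.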

When to leave this on the shelf

A 16-week pilot with clean numbers makes CDR automation look straightforward. It is not, in two specific situations.

Low application volume. Under 3,000 applications per year, the setup cost, including accreditation maintenance, integration, prompt engineering, and logging infrastructure, does not pay back inside 12 months. The maths does not work.

Teams treating Claude as a decision engine. The moment a credit officer is expected to approve what Claude returns without independent review, the workflow crosses into automated decision-making under the Privacy Act. That is a different regulatory posture, a longer approval timeline, and real legal exposure.

The lenders who get this right make the split explicit from day one. Claude prepares, the human decides. Every Claude output carries citations back to the specific CDR transactions it drew on, and the reviewer can verify any claim in under two minutes. That traceability is what keeps Australian financial services deployments on the right side of the OAIC when audit time comes. It is also what makes the credit officer's override meaningful rather than performative.

The rollout sequence that sticks

Australian lenders that big-bang a CDR workflow rollout across their full credit team see adoption below 25 percent. The process feels foreign to credit officers who did not help shape it, overrides accumulate without a structured feedback loop, and the early outputs are worse than they should be because the prompt calibration needed real-world edit data that was never collected.

The lenders hitting adoption above 70 percent run a different sequence. Six to eight weeks with one team or one credit product. Measure draft quality, edit volume, and reviewer satisfaction. Move to broader use only after those numbers improve. The override patterns from those early weeks show exactly where the prompts need work. That calibration investment is what makes the outputs sharper for the teams that follow.
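Override patterns are easiest to act on when they are broken down by category. A minimal sketch, assuming each review is recorded as a pair of the model's category and the officer's final category (the function name and record shape are illustrative):

```python
from collections import Counter
from typing import Dict, Iterable, Tuple


def override_rate_by_category(
    reviews: Iterable[Tuple[str, str]],
) -> Dict[str, float]:
    """Share of each model-assigned category that officers changed."""
    totals, overrides = Counter(), Counter()
    for model_category, officer_category in reviews:
        totals[model_category] += 1
        if officer_category != model_category:
            overrides[model_category] += 1
    return {cat: overrides[cat] / totals[cat] for cat in totals}


reviews = [
    ("income", "income"),
    ("income", "risk_signal"),  # officer disagreed with the model
    ("income", "income"),
    ("income", "income"),
    ("risk_signal", "risk_signal"),
]
print(override_rate_by_category(reviews))  # {'income': 0.25, 'risk_signal': 0.0}
```

A category with a persistently high override rate is a likely candidate for prompt revision before the workflow moves to the next team.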

There is a harder-to-model benefit that shows up around month four: reduced staff turnover. Credit officers in teams using Claude well are not spending the first three hours of every morning categorising transactions from scratch. The work becomes less draining. For a team of eight analysts, that is worth approximately $90,000 per year in reduced replacement cost. Hard to model at the outset. Material once the rollout settles.

The CDR data is already there. Accreditation is done. The categorisation problem has a clean solution. The remaining question is whether your credit officers are spending their hours on analysis or still working through raw transaction feeds. Our AI Automation Services offering covers the full integration scope for lenders who want to see what a production-grade CDR pipeline looks like in practice. Start with one team, keep the human in the decision seat, and the numbers follow.