The headline says OpenAI's Stargate has scaled past 3GW of compute capacity, putting it ahead of schedule on the original 10GW-by-2029 target and months ahead of where most analysts expected. The compute arms race between frontier AI labs is real, it's accelerating, and most AI strategy briefings from 2024 are already out of date.

For Australian enterprises midway through a multi-year AI vendor review, or approaching a renewal on an existing commitment, this matters in ways the press coverage doesn't capture. A business sizing a three-year AI contract in the $300,000 to $1.2M range is buying more than today's capability. It's betting on how a vendor's compute posture holds up over the contract term.

Compute scale matters, and not just for benchmarks

The AI benchmark press focuses almost entirely on model capability. In commercial terms, compute scale shows up in three other ways.

Capacity availability. A vendor with structurally larger compute absorbs more production demand without rate-limiting customers. AU enterprises running document generation, analysis, or customer-facing inference at volume need this assurance in their contracts, not just in vendor marketing.

Pricing trajectory. When compute supply expands faster than demand, there is room for price reductions over a multi-year term. When supply lags demand, pricing pressure moves the other direction. Both have happened in the past 24 months depending on tier and region.

Roadmap velocity. More compute means more model training experiments, faster iteration cycles, more features shipped. The capability gap between labs with compute and labs without compounds over an 18-month contract period.
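To make the pricing-trajectory point concrete, here is a minimal sketch in Python comparing a locked price with annual re-baselining over a three-year term. The $400,000 starting spend, the 15% yearly list-price fall, and the 10% yearly rise are hypothetical inputs chosen for illustration, not vendor figures, and `contract_cost` is an illustrative helper, not any vendor's pricing model.

```python
def contract_cost(annual_spend, years, annual_price_change, rebaseline):
    """Total cost over the contract term.

    annual_price_change: hypothetical yearly change in the vendor's list
        price (-0.15 means list prices fall 15% a year).
    rebaseline: if True, the contract price resets to list price each year;
        if False, the year-one price is locked for the whole term.
    """
    total = 0.0
    price = annual_spend
    for _ in range(years):
        total += price
        if rebaseline:
            # Next year's price follows the (assumed) list-price movement.
            price *= 1 + annual_price_change
    return total


# Hypothetical three-year contract starting at $400,000 a year.
locked = contract_cost(400_000, 3, -0.15, rebaseline=False)
falling = contract_cost(400_000, 3, -0.15, rebaseline=True)
rising = contract_cost(400_000, 3, 0.10, rebaseline=True)

for label, cost in [("locked", locked), ("falling", falling), ("rising", rising)]:
    print(f"{label:>8}: ${cost:,.0f}")
```

With these made-up inputs, the locked contract costs $1.2M over the term, re-baselining in a falling market costs about $1.03M, and re-baselining in a rising market costs about $1.32M. The clause cuts both ways, which mirrors the point that pricing pressure can move in either direction.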

OpenAI, Anthropic, Google: three honest reads

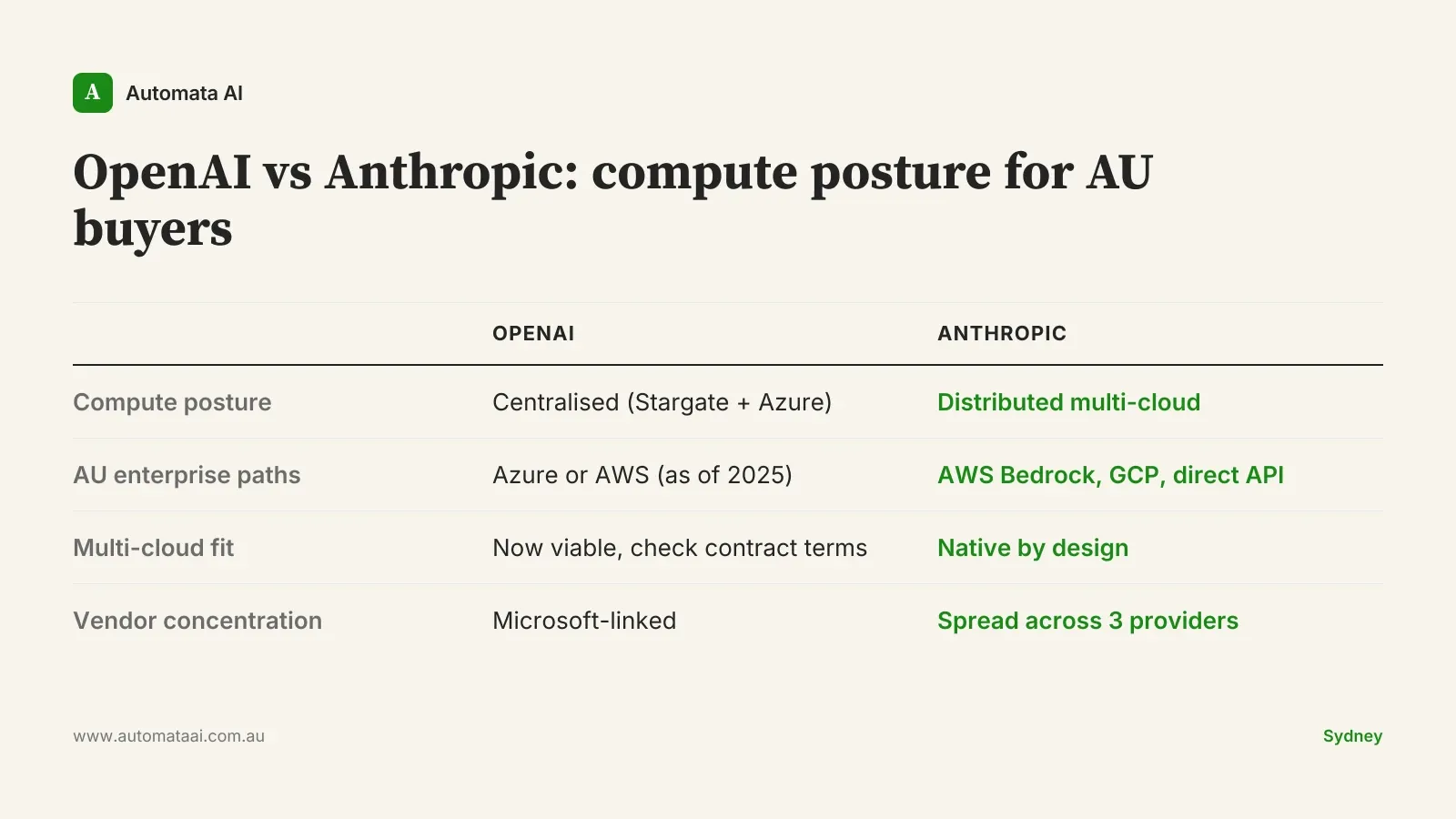

OpenAI Stargate is the most aggressive scale-up of the three: 3GW of capacity today, with a 10GW target by 2029. Being ahead of schedule matters commercially: more supply relative to demand creates pricing room, and AU enterprises with multi-year contracts should factor that trajectory into renewal negotiations. Historically, the Microsoft alignment meant AU enterprises needed Azure or Microsoft 365 stack access to get the best commercial terms. That has changed. OpenAI's AWS availability is now live, which opens a different procurement path for organisations that aren't Microsoft-primary.

Anthropic is multi-cloud by design. Compute is distributed across AWS, Google Cloud, and Broadcom-anchored deployments rather than concentrated in a single hyperscaler relationship. For APRA-regulated financial services firms in Sydney with multi-cloud procurement requirements embedded in their operating model, this architecture difference is not abstract. It shows up in vendor risk assessments and sometimes directly in board-level technology governance requirements. The practical read: you can run Claude on AWS Bedrock, Google Vertex, or directly through the Anthropic API, and switching between those paths doesn't require a new commercial agreement.

Google has the deepest vertical integration of the three. TPU development, cloud infrastructure, and model training are all in-house. That makes it the most architecturally distinct of the frontier labs, which is both an advantage (no single external infrastructure dependency) and a constraint: you're more tightly coupled to Google Cloud for full capability access than you are to any one cloud with the other two.

None of these three vendors are currently compute-constrained in a way that should block commitment for a mid-market AU enterprise. The differences are in posture and resilience, not absolute capacity. The question is which posture fits your risk register.

The AU-specific angle most vendor briefings skip

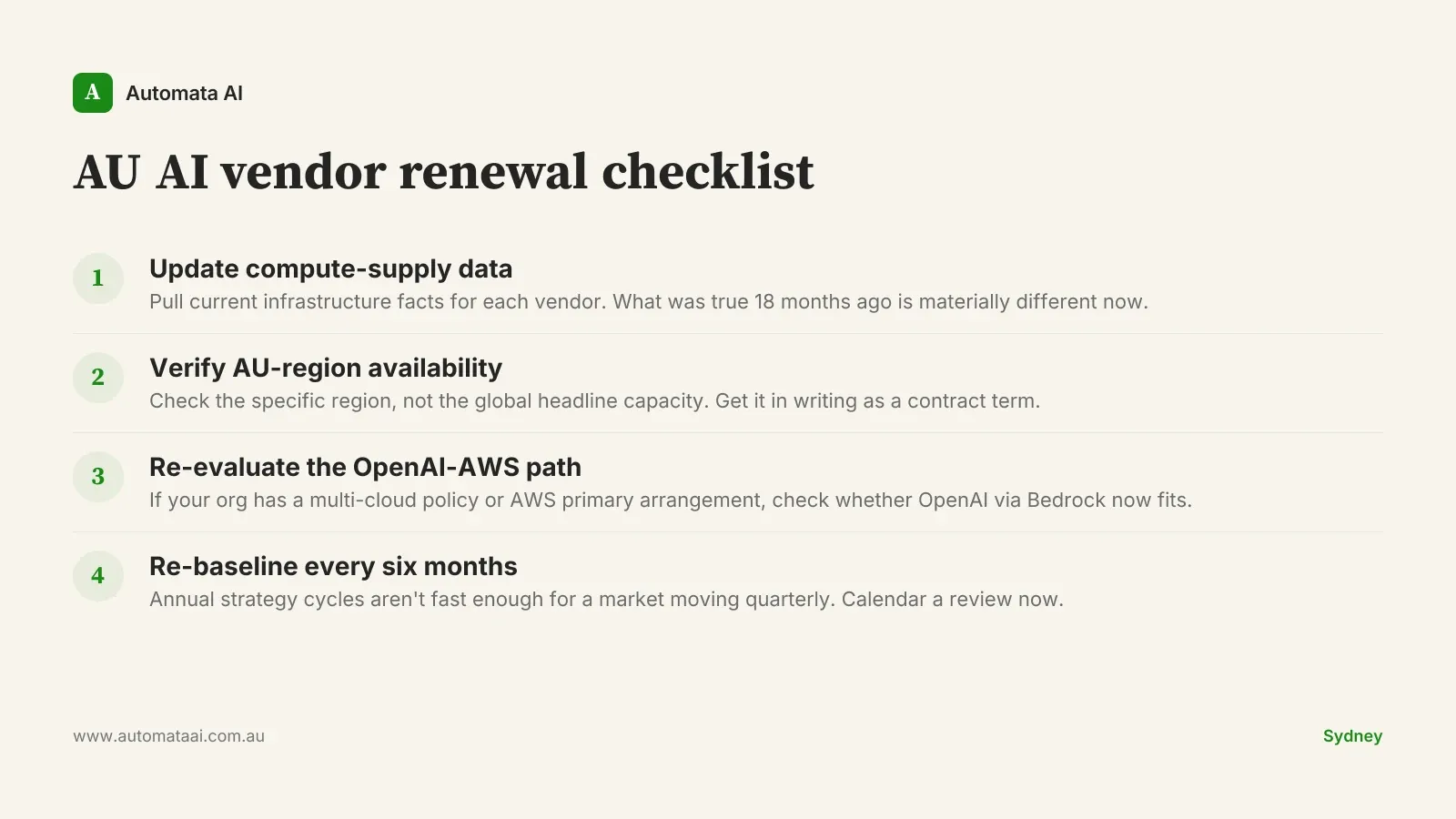

Most of Stargate's 3GW is US-based infrastructure. The question AU enterprises should be asking is not how much compute exists in aggregate but how much is available in ap-southeast-2 (the AWS Sydney region), or whichever region satisfies your data residency requirements. Three gigawatts of capacity in Virginia does not solve a Privacy Act compliance requirement in Sydney.

AWS Bedrock's Sydney region has been available to AU enterprises for longer than most headline compute stories acknowledge. Latency is materially different from routing inference through US-based infrastructure, and for workloads processing sensitive customer data, the routing path itself is the compliance question, not just storage location. For financial services firms operating under APRA CPS 230, data sovereignty is not a preference; it's a control requirement that needs to be specified in vendor contracts, not assumed from global capacity headlines. Get a written answer from your vendor on AU-region availability before you sign, and verify it covers inference routing, not just data storage.
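One way to picture the storage-versus-routing distinction is a small check over a vendor's written answer. This is a sketch in Python under stated assumptions: the `residency_gaps` helper and its inputs are hypothetical, the allowed set contains only ap-southeast-2, and the region lists would be transcribed from your vendor's written response, not pulled from any API.

```python
# Assumption: only the Sydney region satisfies the residency requirement.
ALLOWED_REGIONS = {"ap-southeast-2"}


def residency_gaps(storage_regions, inference_regions, allowed=ALLOWED_REGIONS):
    """List every region outside the allowed set, for storage and for routing.

    Both arguments are region names taken from the vendor's written answer
    (hypothetical inputs). An empty result means no gap was declared.
    """
    gaps = []
    for region in sorted(set(storage_regions) - set(allowed)):
        gaps.append(f"storage in {region}")
    for region in sorted(set(inference_regions) - set(allowed)):
        gaps.append(f"inference routed via {region}")
    return gaps


# Example: data is stored in Sydney, but some inference traffic may be
# served from us-east-1 (the AWS region in Northern Virginia).
answer = residency_gaps(
    storage_regions=["ap-southeast-2"],
    inference_regions=["ap-southeast-2", "us-east-1"],
)
print(answer)
```

A vendor answer like this one passes a storage-only check and still leaves a routing gap, which is the case the written confirmation needs to rule out.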

The OpenAI-on-AWS development is the most significant procurement shift of the last six months for AU enterprise buyers. Organisations with AWS-primary arrangements or multi-cloud procurement policies previously had Azure as the only supported path to OpenAI. That constraint has lifted. If your organisation has a multi-cloud requirement, your vendor shortlist just changed.

When the compute arms race doesn't change your decision

If your total AI spend is under $100,000 per year, the compute supply story is background noise. A professional services firm in Melbourne running two or three AI assistants for document drafting and client research at $40,000 annually is not going to hit rate limits on any of the major frontier labs. The compute arms race is relevant when you're running production workloads at volume, doing real-time inference at scale, or entering a contract term above $300K.

More honestly: if you haven't got your first use case to production yet, the compute arms race is not the bottleneck. The businesses failing at AI right now aren't being stopped by a GPU shortage. They're being stopped by integration complexity, data quality problems, and internal ownership gaps. Optimising your vendor compute posture before solving those is the wrong order of operations.

In short, the compute story can wait if any of these apply:

Total spend under $100K/year. At this scale, capacity risk at any major frontier lab is negligible.

Still in pilot or proof-of-concept mode. Compute arms race analysis is for production procurement decisions, not sandboxes.

Batch workloads without real-time SLA requirements. Rate-limiting matters less when you're processing async jobs overnight.
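The three conditions above can be sketched as a simple screening rule. This is an illustrative Python sketch, not a procurement tool: the `AiCommitment` record and `compute_posture_matters` function are hypothetical names, and treating each condition as a hard cut-off is a simplifying assumption (rate-limiting matters less for batch work, not zero, and spend between $100K and $300K is a grey area).

```python
from dataclasses import dataclass


@dataclass
class AiCommitment:
    annual_spend: float        # total AI spend per year, in dollars
    in_production: bool        # False while still in pilot or proof of concept
    needs_realtime_sla: bool   # False for async or overnight batch workloads


def compute_posture_matters(c: AiCommitment) -> bool:
    """Return False if any of the three 'background noise' conditions applies."""
    if c.annual_spend < 100_000:
        return False  # capacity risk is negligible at this scale
    if not c.in_production:
        return False  # integration and data quality come first
    if not c.needs_realtime_sla:
        return False  # simplification: batch jobs tolerate rate limits
    return True


# The Melbourne professional services example: $40,000 a year on assistants.
small_firm = AiCommitment(annual_spend=40_000, in_production=True, needs_realtime_sla=True)

# A hypothetical production workload with real-time inference at $400,000 a year.
large_buyer = AiCommitment(annual_spend=400_000, in_production=True, needs_realtime_sla=True)

print(compute_posture_matters(small_firm), compute_posture_matters(large_buyer))
```

The order of the checks does not change the result; any one condition is enough to put vendor compute posture lower on the list.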

Before your next AI vendor renewal

If your AI commitment is above $200,000 and coming up for renewal in the next 12 months, the checklist above covers the key variables. A note on the less obvious ones: AU-region availability is the single most important item for any regulated sector, and the correct question is whether inference traffic is routed within Australian borders, not just whether data is stored there. On re-baselining frequency, six months is not a round number chosen for convenience; it's roughly aligned with the cadence at which frontier labs are making commercially significant infrastructure announcements.

The compute arms race will keep running. Prices will come down, capabilities will improve, and new infrastructure deals will get announced. The businesses that benefit most aren't the ones tracking every press release. They're the ones who negotiated contracts that give them flexibility when the landscape moves.