Most cost models for Claude rollouts miss at least three of the four cost lines. The one they do capture, direct API token spend, is also the only one that Anthropic's pricing page helps you estimate.

This is the problem Sydney CFOs keep running into when sizing a Claude rollout. The vendor deck number is real. The production number is 1.5x to 2.5x higher.

The four-cost-line model

The total cost of an internal Claude deployment has four distinct lines. Most models that reach a CFO's desk include only the first. Name all four upfront or your finance team will be chasing variance from month four.

Direct API token spend. Input plus output tokens, net of prompt caching. This is what most models include, and where most models stop.

Tooling and infrastructure. MCPs, observability pipelines, eval frameworks, and the hosting that runs them.

Internal engineering and ops. The developers and ops staff maintaining the rollout week to week, at a blended $120 per hour fully loaded.

Per-seat licensing. Claude.ai and Claude Code subscriptions for employees using hosted products.

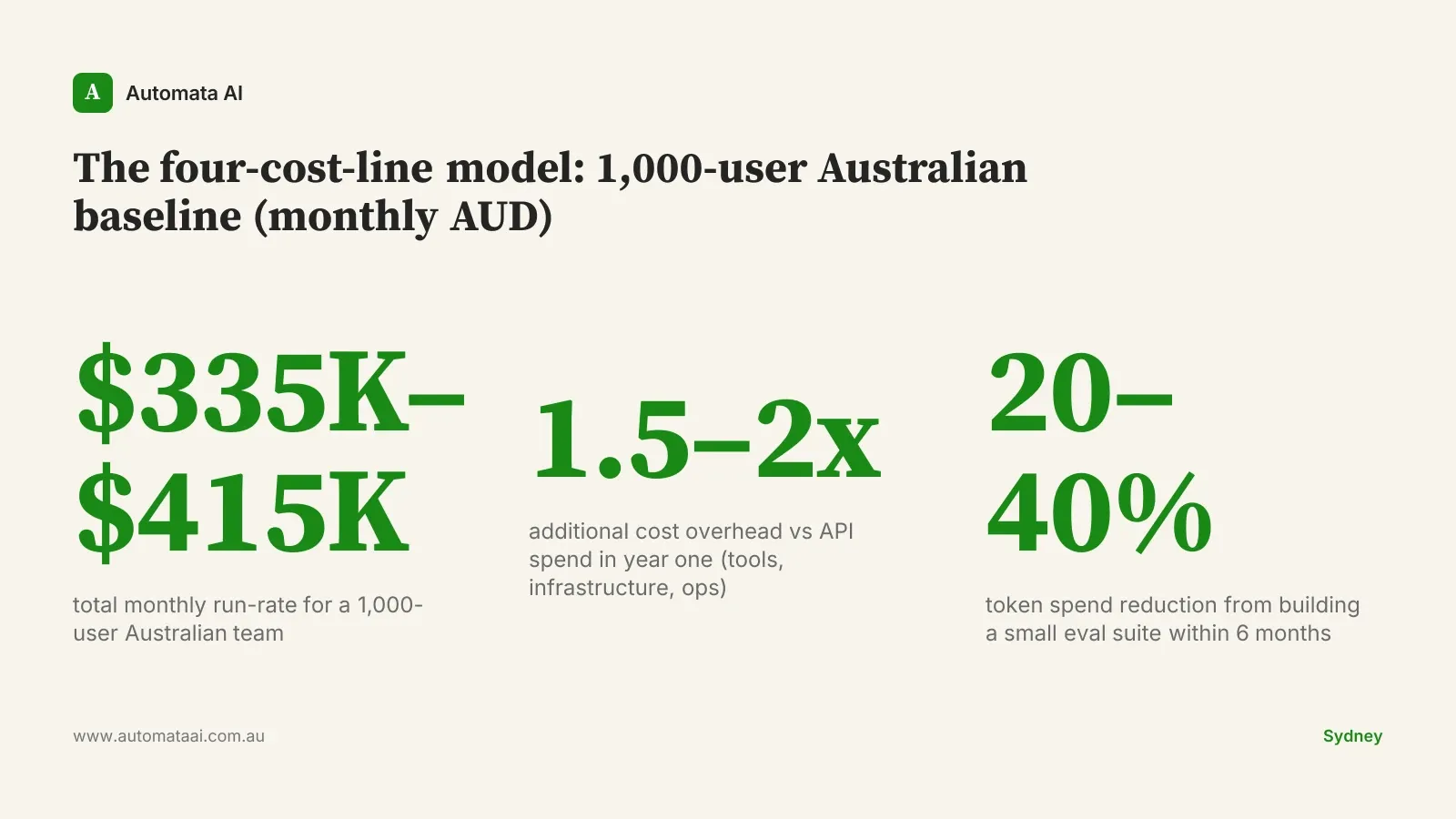

The last three lines typically run 1.5x to 2x the API spend in year one, then settle to 0.5x to 1x in steady state as tooling stabilises and the team stops rebuilding the same infrastructure. That gap between what the vendor deck shows and what the bank statement shows is where budgets break. It also means the business case should factor in a year-one cost premium and model year-two economics separately.

A worked example at 1,000 seats

A 1,000-user Australian mid-market team in 2026 running Claude across chat, code, and a handful of agent pipelines typically lands around here after the first month of settled usage:

Average of 8 million input tokens plus 1.5 million output tokens per active user per day across chat and code.

60 percent prompt caching hit rate after the first month of optimisation.

40 percent of seats on Claude.ai or Claude Code subscriptions, the rest on metered API access through internal tools.

That profile produces a steady-state monthly API bill in the $180,000 to $260,000 AUD range. The spread is mostly driven by how aggressively the team uses agent and code workloads. A team heavy on chat sits toward the lower end. A team running multiple long-horizon agent pipelines sits toward the upper end.

Subscriptions at around $90,000 per month for the 400 hosted-product seats.

Infrastructure and tooling at around $25,000 per month.

Engineering and ops at around $40,000 per month.

Total monthly run-rate sits between $335,000 and $415,000 AUD for a team this size. If you want to model your own numbers before building the business case, run them through our ROI Calculator first.

Where the variance comes from

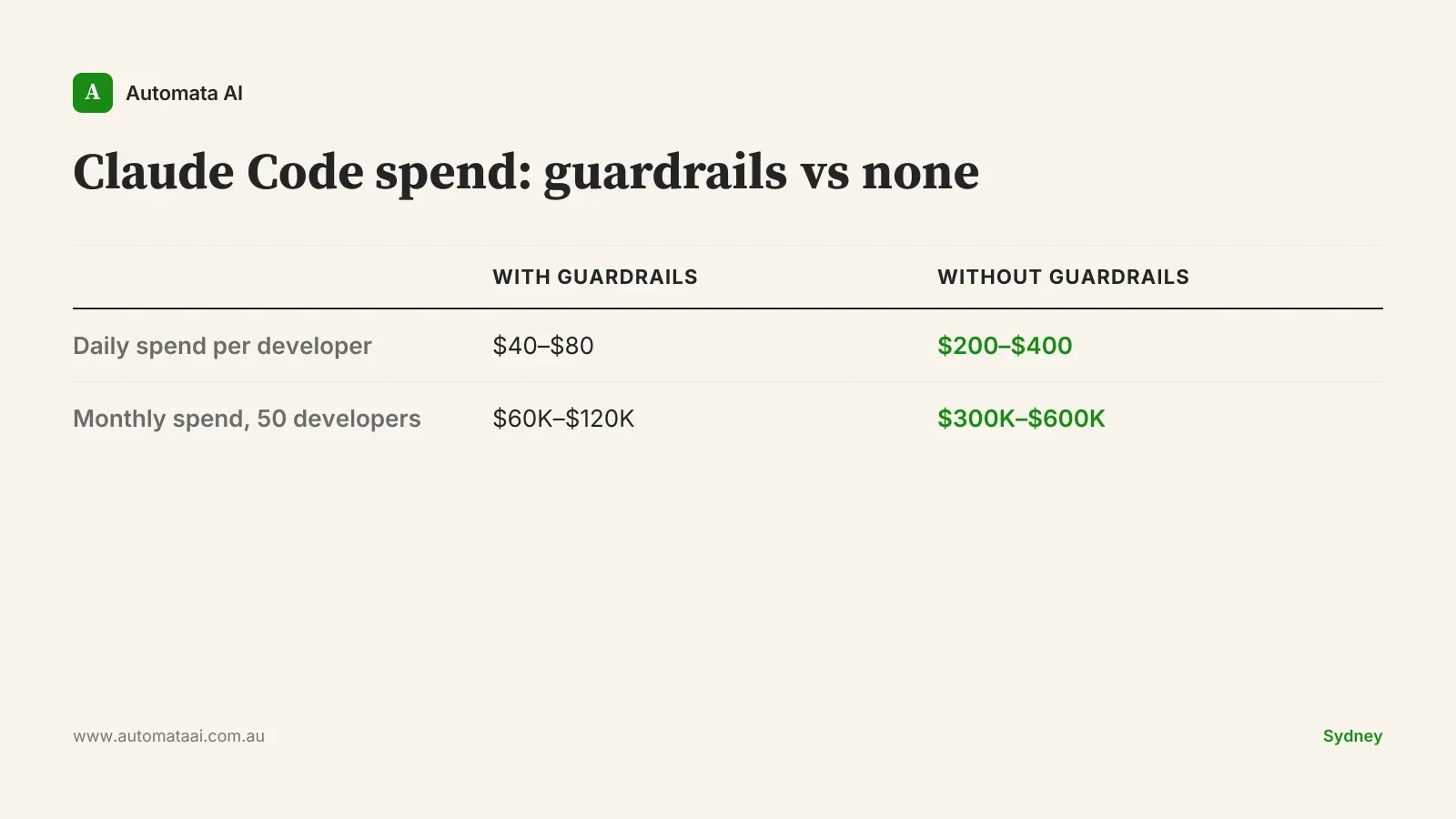

The biggest swing factor is the agent and code workload, not the chat workload. A development team running Claude Code with prompt caching enabled and a tool-use limit enforced per session keeps daily spend at $40 to $80 per developer. The same team without those guardrails can reach $200 to $400 per developer per day. For a 50-person development team, that is the difference between $60,000 and $600,000 AUD per month in code-related API spend alone.

The second factor is whether the team builds an eval suite. Teams that invest in one, even a small internal suite with 50 to 100 test cases, cut total token spend by 20 to 40 percent within six months. The reason is that an eval suite tells you which tasks actually need Opus and which ones Haiku handles just as well. Teams that skip the eval work tend to default every request to the most capable model, because nobody has built the feedback loop to challenge that default. It is not a technical failure. It is a governance gap.

The third factor is exchange rate. Claude is billed in USD. A 5-cent move in the AUD/USD rate shifts a $250,000 USD monthly bill by roughly $20,000 AUD. Over 12 months, that is up to $240,000 AUD of unhedged FX exposure on a single vendor. Most Sydney finance teams managing other USD SaaS contracts already have a view on this, but the size of the Claude API bill often catches them off guard.

When this model doesn't fit

If your total projected AI spend is under $50,000 AUD per year, the four-cost-line model is overkill. A per-seat estimate with a 30 percent variance buffer gets you close enough. The cost of building a more granular model at that scale is not justified by the precision it buys you. This is not a small-business caveat. Plenty of large organisations start with a narrow Claude deployment that does not warrant the full four-line analysis.

The rollout is a single use case. One workflow, 30 users, and a usage cap covers the governance. Skip the full model.

The team building it is also running it. Engineering cost gets underestimated by default when the same people build and operate. Fix the resourcing first.

The payback horizon is over 18 months. If the total four-line cost does not justify itself within 18 months, the process being automated is probably not the right starting point.

Contract levers a Sydney finance team can pull

Once the four-line model is clear, there are four levers that reduce exposure before you lock in a budget or a prepay commit.

Annual prepay commit. Anthropic offers 5 to 12 percent discounts on committed volume. At $250,000 USD per month, a 5 percent discount returns $150,000 USD annually.

USD treasury hedge. Treat the projected annual API spend as a USD liability. Finance teams managing other USD vendor contracts already have the playbook.

Per-team budget caps with monthly reporting. Surface variance to finance early, not at quarter-end. Agent workloads are where overruns hide.

A tiered model strategy. Default Haiku for high-volume, lower-stakes tasks. Reserve Opus for the work that needs it. Our AI Automation Services team typically reduces client API spend by 25 to 35 percent in the first three months through model tiering alone.

A defensible AUD model is not a one-off exercise. Token spend is a living number, and a 1,000-user team's usage pattern shifts meaningfully between month one and month twelve as employees build new habits and agent pipelines multiply. Build the governance to match, review the model quarterly, and identify which workloads belong in which tier before locking in a prepay commit. Our AI Readiness Assessment helps you map the workload profile before the budget conversation.